Why Partner With Actian: A Strategic Alliance for Success

Now more than ever, data management, integration, and analytics are crucial components of business success for data-driven organizations. Companies seeking to innovate, scale, and make decisions with confidence need trusted vendors that can provide modern, comprehensive, and easy-to-use technologies that solve business challenges and generate new value from data.

This is where Actian’s partner program stands out. It offers opportunities for you to complement your solutions with Actian products and services to meet customers’ current and future data needs. No single vendor can meet the diverse and complex data needs of today’s businesses, making strategic alliances essential to provide robust and tailored solutions to customers.

Partner With Actian and Expand Your Reach

Partnering with a technology leader like Actian is more than a strategic move. It is essential when you want to stay competitive, reach new customers, and continue adding value for current customers. Collaborating with Actian can support innovation, streamline operations, and provide access to modern technologies that strengthen customers’ tech stacks and offer a connected ecosystem.

The Actian partner program is designed to optimize the strengths of our modern and expanding product portfolio by integrating our offerings with your technologies and services. By forming strategic partnerships with Actian, you can unlock the full potential of your data products and services while benefiting from a robust network of technologies, expert services, and solution partners.

Actian’s Unique Partner Value Proposition

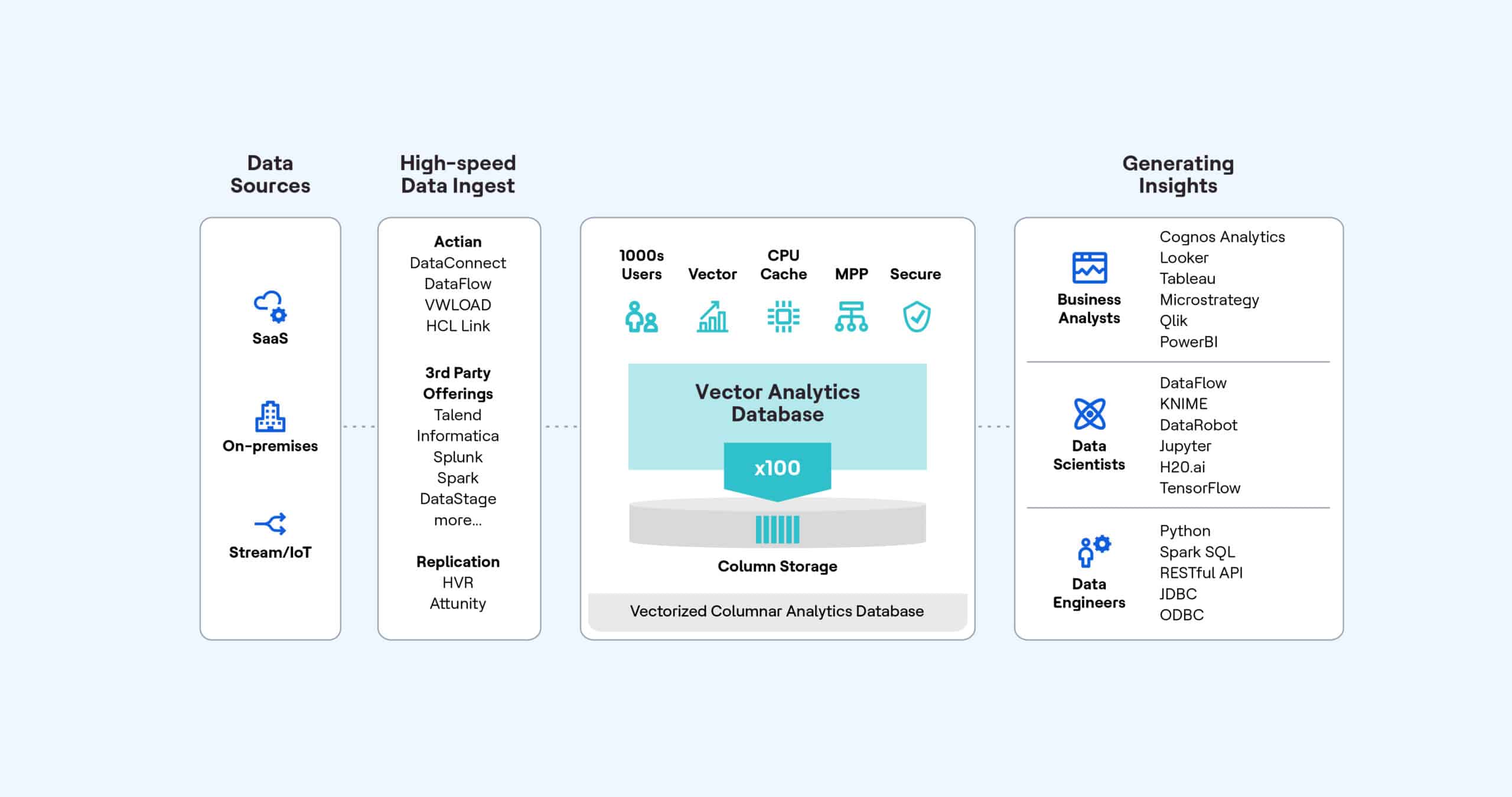

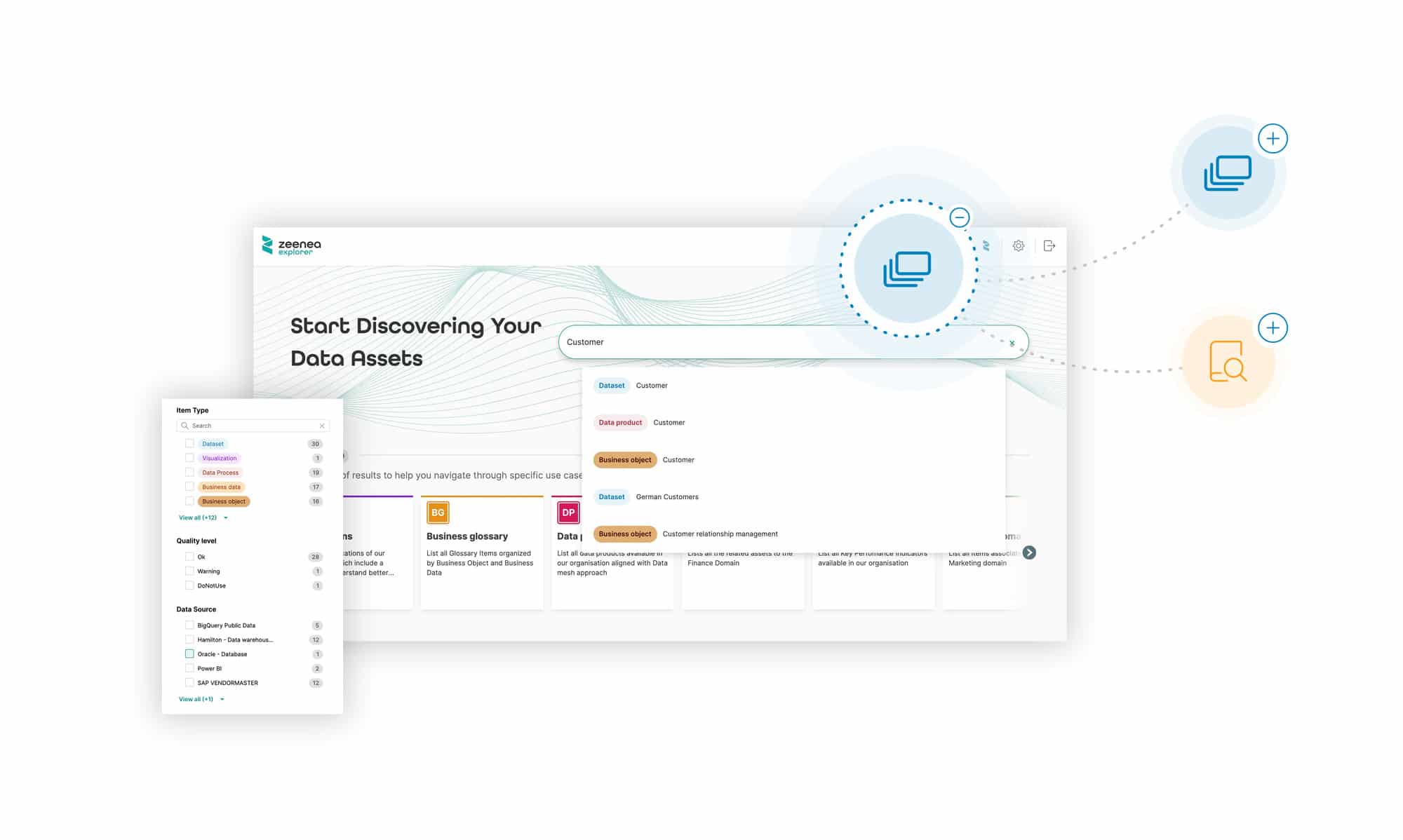

As a forward-thinking data technology company, Actian offers a modern data management and data intelligence portfolio that enables customers to easily connect, govern, and analyze their data. Our solutions enable businesses to seamlessly manage data across cloud, on-premises, and hybrid environments—and have confidence in their decision-making.

More than 42 million users around the world use our products, with over 20,000 businesses trusting our scalable technologies. When you partner with Actian, you gain several distinct advantages:

- Comprehensive Training and Support. Actian invests heavily in its partners. Training and certification courses, coming soon, will help ensure you are equipped to deliver the best possible solutions to customers. This investment in skills development translates into greater customer satisfaction and higher success rates for joint projects.

- Flexible Go-to-Market (GTM) Models. You can leverage a variety of GTM strategies through a partnership with Actian. Whether through co-branding, co-participation at events, or access to marketing development funds, Actian ensures that its partners are positioned for success.

- Competitive Compensation. With margin-sharing models and generous incentives, you can realize substantial financial rewards. These incentives are designed to promote long-term, mutually beneficial relationships rather than short-term gains.

4 Types of Partnerships Supported by Actian

Actian’s flexibility extends to the types of partnerships it supports, ensuring that vendors with various solutions can benefit from the partner program:

- Cloud Providers. Cloud adoption continues to accelerate, with companies looking for efficient ways to migrate to and manage their data in the cloud. Actian’s rapid onboarding process and hybrid capabilities make it easy for cloud providers to offer comprehensive data management solutions to customers.

- Systems Integrators. Systems integrators can enhance their service offerings by incorporating Actian’s database and data management technologies into their client projects. This drives revenue while also boosting customer satisfaction by delivering purpose-built solutions that address specific business challenges.

- ISVs/OEMs. Independent software vendors (ISVs) and original equipment manufacturers (OEMs) can embed Actian technologies into their products, offering differentiating features such as advanced data integration and analytics capabilities. This allows these businesses to stand out in their respective markets while benefiting from Actian’s extensive technologies and support.

- Technology Alliances. Actian partners with other technology leaders to deliver powerful joint solutions. By embedding Actian’s data management and analytics tools into their own technologies, tech partners can provide enhanced value to customers and gain a competitive edge.

Why You Should Partner With Actian

Partnering with Actian allows you to leverage a proven data portfolio that accelerates transformation and modernization, enabling customers to make confident, data-driven decisions that grow their business. Core reasons to partner with Actian include:

- Access to Cutting-Edge Technology. Actian’s portfolio is designed to integrate seamlessly with existing infrastructures, providing businesses with scalable, reliable, and high-performance data solutions. This enables both partners and customers to stay ahead of the curve in terms of innovation and technological advancement.

- Mutual Success Focus. Actian’s partnership philosophy is centered on long-term relationships rather than transactional engagements. We work closely with partners, aligning goals and objectives to ensure mutual success. This commitment to collaboration helps you exceed customer expectations, delivering projects that consistently outperform competitors.

- Global Reach and Expertise. Actian’s global presence, extensive customer base, and experience working with leading companies position you for success in any market. Our deep expertise in data management, spanning more than 50 years, allows you to offer industry-leading solutions across a wide range of industries and geographies.

Partner With Actian to Unlock New Opportunities

Joining Actian’s partner program is an opportunity to grow your business, enhance your technology stack offerings, and deliver value-driven solutions to customers. Our combination of industry-leading products, expert support, and a collaborative partnership model ensures that your company is well equipped to tackle the challenges your customers face with modern data management.

Whether you are a cloud provider, systems integrator, ISV, OEM, or a vendor looking to form a technology alliance, partnering with Actian will empower your business to scale and innovate. Our commitment to partners, supported by comprehensive partner benefits, makes Actian an ideal choice for vendors like yours that are seeking to advance their data offerings.

Actian’s track record of success, commitment to its partners, and industry-leading solutions make it a standout partner in today’s data-driven world. Explore the opportunities that come with joining the Actian partner program and unlock new pathways to success. Sign up today!