Rethink Hybrid for the Data-Driven Enterprise

After a recent Actian webinar featuring Forrester Research, John Bard, senior director of product marketing at Actian, asked Forrester principal analyst Michele Goetz more about the trends in today’s enterprise data market. Here is the first part of that conversation:

John Bard, Actian: The enterprise market tends to think of “hybrid” as on-premises or cloud, but there are several other dimensions for hybrid. Can you elaborate on other ways “hybrid” applies to the data management and integration markets?

Michele Goetz, Forrester: Hybrid architecture is really about spanning a number of dimensions: deployment, data types, access, and owner ecosystem. Analysts and data consumers can’t be hindered by technology and platform constraints that limit their reach into the information they need to drive strategy, decisions, and actions and stay competitive in fast-paced business environments. Information architects are required to think about aligning to data service levels and expectations, forcing them to make hybrid architecture decisions about cloud, operational, and analytic workloads; self-service and security; and where information sits internally or with trusted partners.

JB: What factors do you think are important to customers evaluating databases when it comes to satisfying both transactional and analytic workloads?

MG: Traditional approaches to database selection fell into either operational or analytic. Database environments were designed for one or the other. Today, operational and analytic workloads converge as transactional and log events are analyzed in streams and intelligently drive capabilities such as robotic process automation, just-in-time maintenance, and next-best-action, or advise workers in their activities. Databases need the ability to run Lambda architectures and manage workloads across historical and stream data in a manner that supports real-time actions.

JB: What are some of the market forces driving these other aspects of “hybrid” in data management?

MG: Hybrid offers companies the ability to build adaptive, composable systems that are flexible to changing business demands for data and insight. New data marts can spin up and be retired at will, allowing organizations to reduce legacy marts and conflicting data silos. Hybrid data management provides a platform where services can be built on top using APIs and connectors to connect any application. Cloud helps lower the total cost of ownership as new capabilities are spun up. At the same time, management layers allow administrators to easily shift workloads and data between cloud and on-premises to further optimize cost. Additionally, data service levels are better met by hybrid data management, as users can independently source, wrangle, and build out insights with lightweight tools for integration and analytics. In each of these examples, engineering and administration resource hours are reduced or current processes are optimized for rapid deployment and faster time-to-value for data.

JB: What about hybrid data integration? That can span both data integration and application integration. What about business-to-business (B2B) integration? What about integration specialists versus “citizen integrators”?

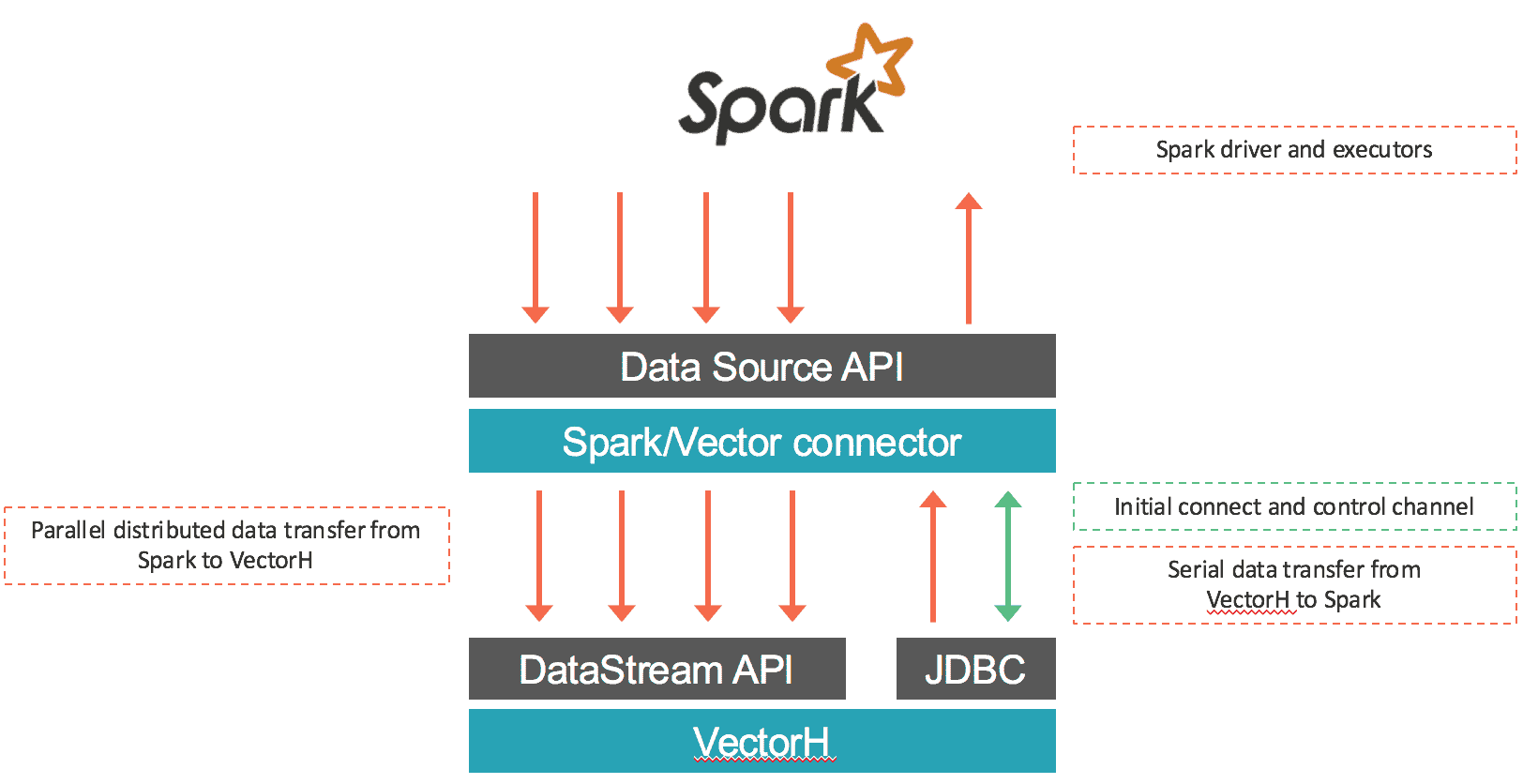

MG: Hybrid integration is defined by service-oriented data that spans data integration and application integration capabilities. Rather than relying strictly on extract, transform, and load (ETL) or extract, load, and transform (ELT) and change data capture, integration specialists have more integration tools in their toolbox to design data services based on service-level requirements. Streams allow integration to happen in real time with embedded analytics. Virtualization lets data views come into applications and business services without the burden of mass data movement. Open-source ingestion provides support for a wider variety of data types and formats to take advantage of all data. APIs containerize data with application requirements and connectivity for event-driven views and insight. Data becomes tailored to business needs.

The other wave in data integration is the emergence of self-service, or the citizen integrator. With little more than an understanding of data and how to manipulate data in Excel with simple formulas, people with fewer technical skills can leverage and reuse APIs to get access to data or use catalog and data preparation tools to wrangle data and create data sets and APIs for data sharing. Data administrators and engineers still have visibility into and control over citizen integrator content, but they are able to focus on complex solutions and open up the bottlenecks to data that users experienced in the past.

Overall, these two trends extend flexibility, allow deployments to scale, and get to data value faster.

Hybrid data management and integration is the next-generation strategy for enterprises to go from data rich to data driven. As companies retool their businesses for digital, the internet of things (IoT), and new competitive threats, the ability to have architectures that flex, adapt, and scale to meet real-time data demands will be critical to keep up with the pace of business change. Ultimately, companies will be defined and valued by the market according to their ability to harness data to stay ahead and viable.