Is Your Integration Platform Ready for Emerging Technology Trends?

Several technology trends are projected to reach the mainstream over the next few years, and each will have a significant impact on your data management systems. Will your integration platform be ready to support them? If not, now is the time to act so that you are prepared to support the new wave of business capabilities your company will need to succeed.

Emerging Technology Trends That Are Poised to Disrupt the Status Quo

The IT industry is changing rapidly, and there are four key emerging technology trends that data management and IT professionals should monitor closely.

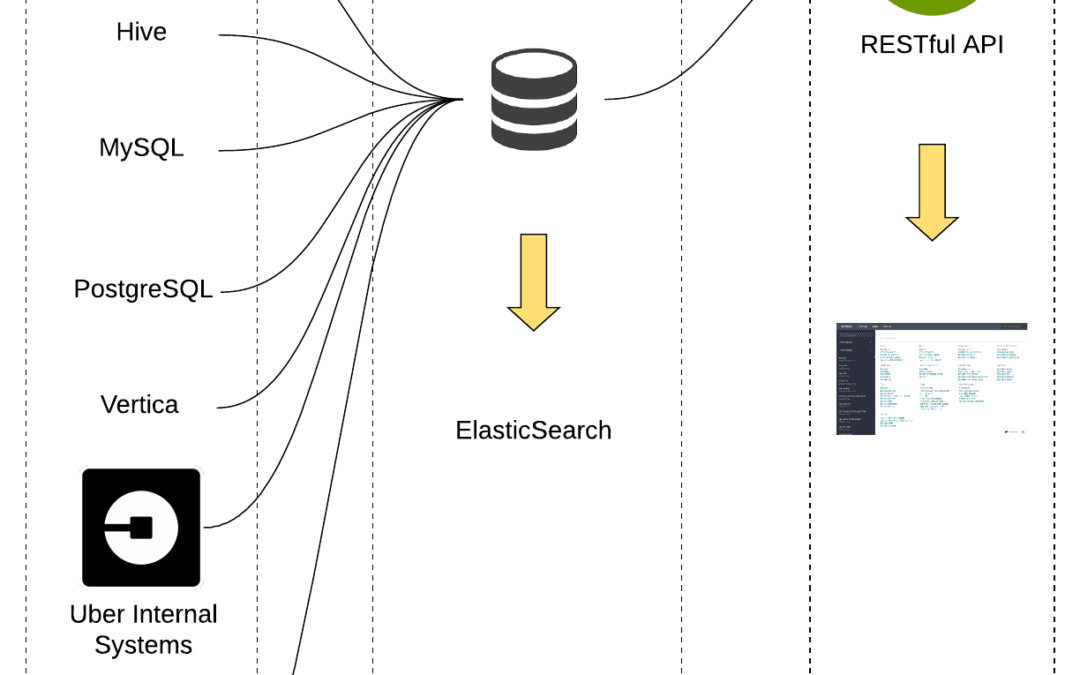

- Cloud-Native Architectures – Companies are rapidly shifting from home-grown systems to cloud services, both platforms and SaaS. These services are built on cloud-native architectures that are often highly distributed, rely on parallel processing, use non-relational data models, and can be spun up or shut down in a matter of seconds. Integrating data from these systems is challenging for legacy data integration systems that require manual configuration of each data connection.

Your integration platform needs to be able to recognize and adapt to these cloud-native architectures and enable your business and IT teams to make frequent changes to the application landscape while maintaining the integrity and security of underlying enterprise data assets.

- Event-Driven Applications – Traditional IT applications were built around well-defined, structured workflows, much like a novel. Modern “event-driven” applications are more like a “choose your own adventure” book, where the end-to-end transaction flow may not be pre-defined at all. Events and data are evaluated as they arrive, and the workflow emerges dynamically based on the needs of the individual transaction. Many capabilities are now deployed this way, using cloud-based container apps and functions.

The challenge event-driven applications pose to data management is that they lack the data context that traditional application workflows provide. Context is derived from the series of events and actions that led to the current point in time. Your integration platform will need to support the nuances of event-driven applications and take a different approach to contextualizing the data they produce.
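To make the idea of deriving context from a series of events concrete, here is a minimal Python sketch. It is an illustration only, not how any particular integration platform works. The `Event` type, the `correlation_id` field, and the event names are all hypothetical; the assumption is that each event carries an identifier tying it to a transaction, plus a timestamp.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    correlation_id: str  # hypothetical field linking events of one transaction
    timestamp: float     # when the event occurred
    name: str            # what happened


def build_context(events):
    """Rebuild each transaction's history by ordering its events in time."""
    history = defaultdict(list)
    for event in sorted(events, key=lambda e: e.timestamp):
        history[event.correlation_id].append(event.name)
    return dict(history)


# Events arrive out of order and interleaved across transactions.
events = [
    Event("order-1", 3.0, "payment_captured"),
    Event("order-2", 2.0, "cart_created"),
    Event("order-1", 1.0, "cart_created"),
]

context = build_context(events)
print(context["order-1"])  # the ordered steps that led to the current state
```

Grouping by a shared identifier is one common way to recover context. Real event streams may need more than this, such as handling late or duplicate events.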

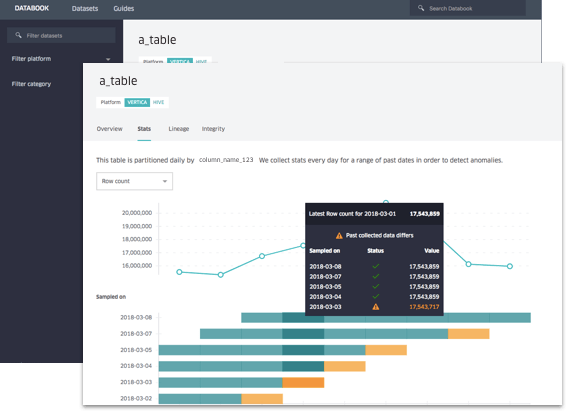

- API-Led Integration – Similar to event-driven applications, API-led integration is a new model for bringing IT capabilities together. Applications are treated as pseudo-black boxes, and it is the interfaces between them that are managed in a structured way. From a data management perspective, this raises the need to manage data in motion (traveling between apps over APIs) as well as data at rest (within individual applications). Your integration platform will need to understand the differences between these two types of data and be able to ingest, transform, and load both into your data warehouse for further processing.

- Streaming Data – Companies in all industries are now being inundated with streaming data from a variety of sources: IoT, mobile apps, deployed sensors, cloud services, and digital subscriptions are a few examples. These systems generate a significant amount of data, and even in a small organization, the number of data sources can be extensive. When you multiply large data streams across many data sources, the volume of streaming data that a company needs to manage can be massive.

Most legacy integration platforms were designed for batch data processing, not the scale challenges of streaming data. Cloud-based integration platforms are often better suited to address streaming data needs than on-premises systems because of the underlying capacity of the cloud environments where they operate.
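The difference between batch and streaming processing can be sketched in a few lines of Python. The example below is a simplified illustration, not a description of any product: it consumes an open-ended stream in small fixed-size chunks (often called micro-batches) instead of waiting for a complete data set. The `sensor_readings` generator and its record layout are hypothetical stand-ins for a real feed.

```python
from itertools import islice


def micro_batches(stream, batch_size):
    """Yield lists of up to batch_size records as they become available."""
    iterator = iter(stream)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return  # the stream is exhausted
        yield batch


def sensor_readings():
    """Hypothetical stand-in for an unbounded sensor feed."""
    for i in range(7):
        yield {"sensor": "s1", "value": i}


# Process each micro-batch as soon as it fills, without loading the whole stream.
totals = [
    sum(reading["value"] for reading in batch)
    for batch in micro_batches(sensor_readings(), batch_size=3)
]
print(totals)
```

Because only one micro-batch is held in memory at a time, the same loop works whether the feed produces seven records or seven billion. This is the property batch-oriented designs typically lack.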

Is Your Integration Platform Ready?

If you aren’t sure whether your integration platform is up to the task of supporting these emerging technologies, it probably isn’t. Actian DataConnect is a modern hybrid integration platform that leverages cloud scale and performance to deliver the capabilities you need to connect anything, anytime, anywhere, and integrate it into your enterprise data landscape. To learn more, visit DataConnect.