From the Golf Course to the Boardroom: The Case for Data

Summary

- Explains why Actian partnered with PGA TOUR pro Michael Kim as a data-driven brand sponsorship.

- Highlights Kim’s analytical approach to golf as a model for enterprise data and AI discipline.

- Connects trusted data, repeatable decisions, and competitive advantage across sports and business.

- Shows how authentic alignment builds brand trust beyond traditional B2B marketing.

- Positions the partnership as a shared commitment to data intelligence and performance.

A while back, a colleague told me and others in our company something we never forgot: “PGA TOUR professional golfer Phil Mickelson closes more deals for a software vendor than any of its salespeople.” I can’t personally verify the exact math, but I can tell you why the story sticks. It captures the real value of a great sponsorship.

Done right, a sponsorship is not about celebrity for celebrity’s sake. It’s about trust, relevance, and repeated moments where your brand shows up, and shows up credibly, in places your audience already cares about.

That’s exactly why Actian is partnering with PGA TOUR professional Michael Kim. We announced our multi-year sponsorship with Kim in December 2025. You’ll see our Actian logo on Kim’s apparel across PGA TOUR events, and that’s only the beginning.

Kim is the right fit for Actian because his approach to golf is a perfect lens for what modern enterprises are trying to do with data and AI. Like Kim, they are taking a data-driven approach to improve processes and achieve excellence.

Why Michael Kim’s Approach is Different From His Peers

Every elite athlete uses data now. The difference is how openly and how rigorously they use it.

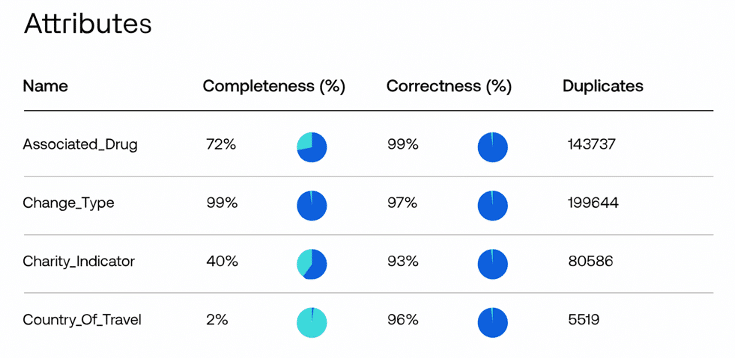

Kim has built a reputation as one of pro golf’s most analytically transparent players. He doesn’t just reference data and metrics in passing. He breaks down the why behind his performance, using measures such as strokes gained, ball speed, dispersion patterns, and course fit, along with the small adjustments that impact his game over time.

What interests me about Kim’s use of data is that he treats it as an operating system, not a highlight reel. Data doesn’t only tell the story of past performances. It’s the foundation for making better decisions every day, even under pressure. That mindset is what we’re enabling at Actian for our enterprise customers.

Golf is a Masterclass in Data for Competitive Advantage

Think about all that happens before a single golf swing. Wind, lie, turf conditions, pin placement, risk tolerance, and confidence in club selection all impact the shot, as do the player’s own patterns across thousands of shots.

Kim told us he uses data analytics to break down where he’s strong, like certain approach distances, and where he needs to improve. He can then build a plan for his particular game using trusted insights.

He uses ShotLink tracking data, looks at dispersion rates, and compares club performance for different shots. In addition, he works with a data scientist as part of his extended professional team that includes his caddie, coach, trainers, and the rest of his golfing ecosystem.

That’s not just being data-driven. That’s being data-disciplined.

This is the business equivalent of what leaders are asking of their organizations right now. The ask is to stop assuming they know how the business is performing without validating it with data, and to stop stitching together spreadsheets and dashboards that don’t tell a complete story. Instead, leaders need to start making decisions they can explain, defend, and repeat, especially as AI becomes embedded in everyday workflows.

Kim summed up the philosophy in our news announcement. He needs certainty and confidence that his analytics are built on reliable, trustworthy data, and he sees Actian as sharing that core belief.

Our Sponsorship Story and Why it Makes Me Smile

Like a lot of partnerships, the one between Actian and Kim didn’t start with a perfect spreadsheet.

We explored options, looking for someone who not only has established name recognition but also actually uses data. The reality of top-tier sponsorships is that there are only so many “logo slots” on an athlete’s hat, bag, collar, sleeve, and chest. The biggest names often already have full cards and very high price tags.

Then my husband, who heard me talking about the sponsorship with a co-worker, told me simply, “You should work with Michael Kim.”

My husband follows Kim on social media and kept telling me, “This guy is very data-driven, and he’s honest talking about it.” When I got into meetings with Actian management and started reviewing options, Kim’s name kept rising to the top, not just because he’s a winning golfer, but because the narrative fit is effortless.

I then discovered a personal connection that made the partnership even better. Kim grew up in San Diego and went to Torrey Pines High School, the same school one of my kids graduated from and another now attends.

When Kim and I finally met in person, it was one of those moments where I said, “Okay, this partnership is not a coincidence.”

Brand Recognition That Delivers Value

Let’s be honest. Awareness is part of the goal of our partnership. In that area, Kim is already delivering.

He’s active on X, engages with fans, and enjoys talking data. When he announced our sponsorship, the post generated tens of thousands of views. This is exposure we simply don’t get from a typical B2B campaign, no matter how good the copy and design are. That’s tens of thousands of people who may not know Actian yet, but now associate our brand with performance, discipline, and smart use of data.

Yet what matters is that the attention is earned because the partnership makes sense. As our press release notes, the collaboration connects one of golf’s most analytical players with a leader in data intelligence.

When Kim Wins, Actian Wins

Kim has already proven he can win at the highest levels, including victories at the John Deere Classic and the 2025 FedEx Open de France. That’s the simple magic of a partnership like this: when he’s in contention, our logo is there. When his story gets told, the story is inherently about data, precision, and trust. When he talks about the numbers, people connect the dots to Actian.

That’s not a stretch. That’s alignment.

At Actian, we help organizations discover, trust, and activate data with the governance, context, and quality businesses require. Kim shows what it looks like when that discipline becomes a competitive advantage.

In 2026 and beyond, we plan to keep governing the game with a partner who truly plays it that way. Find out more about how Kim and Actian win with data.