How to Triage Data Incidents

Summary

- Effective data triage involves categorizing incidents based on their severity and impact on downstream business users.

- Data observability tools speed up triage by identifying whether an issue originates in the code, the infrastructure, or the data itself.

- Automated lineage helps teams quickly assess which datasets and reports are affected by a specific failure.

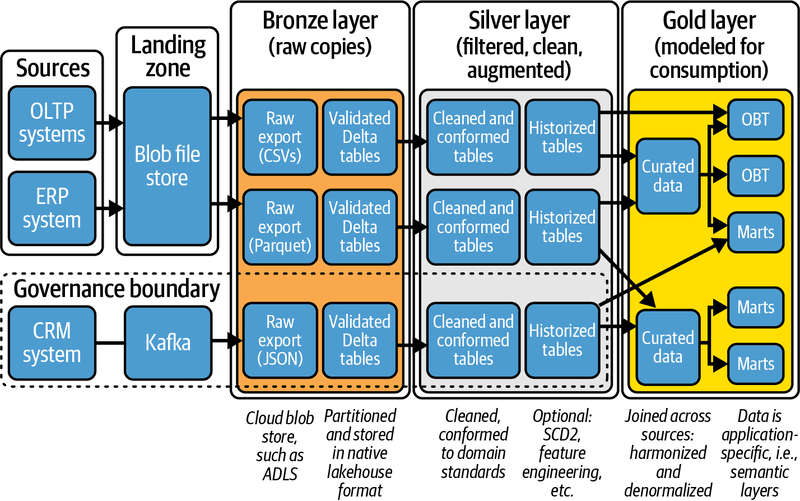

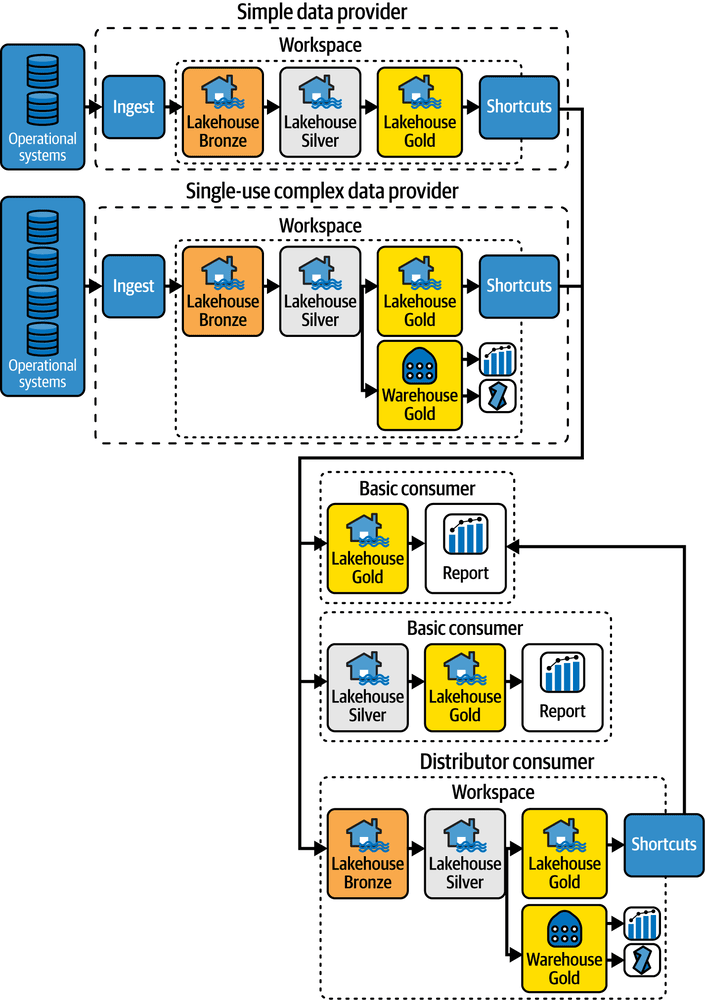

- Prioritizing high-impact “gold” datasets during triage ensures that critical business operations are restored first.

A single data incident can lead to broken dashboards, inaccurate analyses, or flawed decisions, any of which can seriously damage an organization’s performance. Whether caused by schema changes, integration failures, or human error, data incidents must be addressed quickly and effectively.

Triage is the process of assessing and prioritizing incidents based on severity and impact, and it is a crucial first step in managing data quality disruptions. This article outlines a systematic approach to triaging data incidents and introduces tools and best practices to ensure an organization’s data systems remain reliable and resilient.

Understanding Data Incidents

Data incidents are events that disrupt the normal flow, quality, or accessibility of data. These can range from missing or corrupted records to delayed data ingestion or faulty transformations. Left unresolved, such issues compromise downstream processes, analytics, machine learning models, and ultimately, business decisions.

Common Causes of Data Incidents

Data incidents often stem from a variety of sources, including:

- ETL/ELT Pipeline Failures: Issues in data extraction or transformation logic can lead to incomplete or inaccurate data.

- Source System Changes: Schema modifications or API updates are often the cause of integration pipeline disruptions.

- Human Error: Manual data entry problems, configuration mistakes, or miscommunication can lead to inconsistent datasets.

- Infrastructure Issues: Network failures, database outages, or storage constraints can cause delays or data corruption.

- Software Bugs or Logic Flaws: Flawed code in data processing scripts can propagate incorrect data silently.

Recognizing these root causes helps organizations prepare for and respond to incidents more effectively.

Types of Data Quality Issues

Data quality issues manifest in multiple ways, including:

- Missing Data: Entire rows or fields are absent.

- Duplicate Entries: Redundant records inflate data volumes and distort results.

- Outliers or Anomalies: Values that deviate significantly from expected norms.

- Schema Drift: Untracked changes to table structure or data types.

- Delayed Arrival: Latency in ingestion affects freshness and timeliness.

Early detection of these signals (through monitoring tools, data validation checks, and user reports) enables faster triage and resolution.
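As a rough illustration of what such validation checks can look like, here is a minimal sketch using only the Python standard library. The function names, the row format (a list of dictionaries), and the outlier threshold are assumptions made for this example; a production setup would more likely rely on a dedicated validation or observability tool.

```python
from datetime import datetime, timedelta, timezone
from statistics import median


def find_missing(rows, required_fields):
    """Return indexes of rows where any required field is absent or None."""
    return [i for i, row in enumerate(rows)
            if any(row.get(f) is None for f in required_fields)]


def find_duplicates(rows, key):
    """Return the key values that appear in more than one row."""
    seen, dupes = set(), set()
    for row in rows:
        value = row.get(key)
        if value is None:
            continue  # missing keys are reported by find_missing instead
        if value in seen:
            dupes.add(value)
        seen.add(value)
    return sorted(dupes)


def find_outliers(values, threshold=3.5):
    """Flag values whose modified z-score (based on the median absolute
    deviation) exceeds the threshold. The 3.5 cutoff is a common rule of
    thumb, not a universal constant."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []
    return [v for v in values if 0.6745 * abs(v - med) / mad > threshold]


def schema_drift(expected, actual):
    """Compare column -> type mappings and report what changed."""
    return {
        "added": sorted(set(actual) - set(expected)),
        "removed": sorted(set(expected) - set(actual)),
        "retyped": sorted(c for c in expected
                          if c in actual and expected[c] != actual[c]),
    }


def is_stale(last_loaded_at, max_age, now=None):
    """Return True if the last load is older than the allowed age."""
    now = now or datetime.now(timezone.utc)
    return now - last_loaded_at > max_age
```

Each function maps to one of the issue types listed above, so a failing check already hints at how the incident should be categorized during triage.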

The Importance of Data Triage

Just as medical teams prioritize patients based on urgency, data teams must evaluate incidents to allocate resources efficiently. Data triage ensures that the most business-critical problems receive immediate attention.

Minimizing Business Impact

Without proper triage, teams may spend time addressing low-priority issues while severe ones remain unattended. For instance, an unnoticed delay in customer order data could result in shipment errors or poor customer service. Triage helps focus efforts where they matter most, reducing downtime and avoiding reputational damage.

Enhancing Data Reliability

Triage lays the groundwork for a resilient data ecosystem. By classifying and tracking incident types and frequencies, organizations can uncover systemic weaknesses and build more fault-tolerant pipelines. Over time, this leads to more accurate analytics, dependable reporting, and greater trust in data.

Steps to Triage Data Incidents

Triage is not a single action but a structured workflow. Here’s a simplified three-step process:

Step 1: Detection and Logging

The process starts with detecting a data incident. This can happen through automated alerts, dashboard anomalies, or stakeholder reports. Once an incident is detected, the team should take the following actions.

- Log the incident with key metadata: time, source, data domain, and symptoms.

- Categorize by severity: High (e.g., customer data breach), Medium (e.g., delayed reporting), Low (e.g., minor formatting errors).

- Notify the relevant stakeholders: data engineers, analysts, or data stewards.

Accurate logging helps build a knowledge base of incidents and their solutions, speeding up future investigations.
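The logging step can be sketched as a small data structure. The `Incident` fields mirror the metadata listed above, while the class names, the in-memory `incident_log` list, and the example values are hypothetical. A real system would persist records to a ticketing tool or database and actually send the notifications.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import IntEnum


class Severity(IntEnum):
    LOW = 1      # e.g., minor formatting errors
    MEDIUM = 2   # e.g., delayed reporting
    HIGH = 3     # e.g., customer data breach


@dataclass
class Incident:
    source: str
    data_domain: str
    symptoms: str
    severity: Severity
    detected_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc))
    notified: list = field(default_factory=list)


# Hypothetical in-memory log; stands in for a ticketing system or database.
incident_log = []


def log_incident(incident, stakeholders):
    """Record the incident and note who was notified about it."""
    incident.notified = list(stakeholders)
    incident_log.append(incident)
    return incident


incident = log_incident(
    Incident(
        source="orders_etl",
        data_domain="sales",
        symptoms="daily row count dropped sharply",
        severity=Severity.MEDIUM,
    ),
    stakeholders=["data-engineering", "sales-analytics"],
)
```

Using an ordered enum for severity keeps later sorting and filtering simple, since `Severity.HIGH > Severity.LOW` holds.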

Step 2: Impact Assessment and Prioritization

Next, determine the business impact of the incident:

- What systems or teams are affected?

- Is the issue recurring or isolated?

- Are critical KPIs or SLAs at risk?

Prioritize incidents based on urgency and scope. For example, an incident affecting real-time fraud detection should take precedence over a broken weekly email report. This step often involves a preliminary root cause analysis to determine whether the incident is caused by a transformation error, an integration failure, or an issue with the external data source.
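One way to make this prioritization repeatable is a simple scoring function built on the three questions above. The sketch below is illustrative only: the `Assessment` fields and every weight are assumptions that each team would need to tune to its own SLAs.

```python
from dataclasses import dataclass


@dataclass
class Assessment:
    name: str
    severity: int         # 1 = low, 2 = medium, 3 = high
    affected_teams: int   # how many systems or teams are affected
    recurring: bool       # is the issue recurring rather than isolated?
    sla_at_risk: bool     # are critical KPIs or SLAs at risk?


def priority_score(a):
    """Combine the assessment answers into one number (weights are arbitrary)."""
    score = a.severity * 10
    score += min(a.affected_teams, 5) * 2  # cap so breadth cannot dominate
    if a.recurring:
        score += 5
    if a.sla_at_risk:
        score += 15
    return score


def triage_queue(assessments):
    """Return assessments ordered from most to least urgent."""
    return sorted(assessments, key=priority_score, reverse=True)


fraud = Assessment("real-time fraud detection", severity=3,
                   affected_teams=2, recurring=False, sla_at_risk=True)
report = Assessment("weekly email report", severity=1,
                    affected_teams=1, recurring=True, sla_at_risk=False)

queue = triage_queue([report, fraud])
print([a.name for a in queue])
# ['real-time fraud detection', 'weekly email report']
```

A score like this does not replace judgment, but it gives responders a consistent starting order when several incidents arrive at once.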

Step 3: Containment and Escalation

Once prioritized, initiate containment to prevent further spread. This might involve halting data processing, isolating affected pipelines, or reverting to backup datasets. If the issue is complex or spans multiple teams, escalate to senior engineers or incident response teams. Communication is key. Provide regular updates to stakeholders until the incident has been resolved.

After containment, document the information learned and update processes to prevent similar data issues from occurring.
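The containment actions described in this step (halting processing, isolating a pipeline, reverting to a backup) can be sketched as a small guard object. `PipelineGuard` and its methods are hypothetical names invented for this example; in practice, orchestration tools usually offer their own way to pause or disable jobs.

```python
class PipelineGuard:
    """Tracks quarantined pipelines and decides whether a job may run."""

    def __init__(self):
        self._quarantined = {}  # pipeline name -> reason for quarantine

    def quarantine(self, pipeline, reason):
        """Isolate a pipeline so that its jobs stop running."""
        self._quarantined[pipeline] = reason

    def release(self, pipeline):
        """Lift the quarantine once the incident is resolved."""
        self._quarantined.pop(pipeline, None)

    def is_quarantined(self, pipeline):
        return pipeline in self._quarantined

    def run(self, pipeline, job, fallback=None):
        """Run the job unless the pipeline is quarantined.

        While quarantined, call the fallback instead (for example, a
        function that serves the last known-good snapshot), or return
        None if no fallback was given.
        """
        if self.is_quarantined(pipeline):
            return fallback() if fallback else None
        return job()


guard = PipelineGuard()
guard.quarantine("orders", reason="schema drift in source table")

result = guard.run(
    "orders",
    job=lambda: "fresh data",
    fallback=lambda: "last known-good snapshot",
)
print(result)  # last known-good snapshot
```

Recording the reason alongside the quarantine keeps the containment decision visible to anyone who later asks why a pipeline stopped.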

Implementing Effective Data Management Solutions

A strong data management foundation streamlines triage and reduces the frequency of incidents.

Leveraging Automation Tools

Manual incident detection is inefficient and prone to delays. Modern observability platforms like the Actian Data Intelligence Platform, Monte Carlo, Bigeye, or open-source tools like Great Expectations can:

- Monitor pipelines and data quality in real time.

- Detect anomalies automatically.

- Generate alerts and route them to the appropriate teams.

Automation shortens detection time and ensures consistent handling across incidents.

Establishing Clear Data Governance Policies

Governance frameworks provide clarity on ownership, accountability, and standards. Well-defined data ownership helps answer questions like:

- Who owns this dataset?

- Who should be alerted?

- What’s the escalation path?
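These three questions are easier to answer under pressure if ownership is recorded in a machine-readable form. The following sketch assumes a hypothetical in-code registry; the dataset names, channels, and escalation roles are invented for illustration, and in practice this information typically lives in a data catalog.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ownership:
    owner: str               # who owns this dataset?
    alert_channel: str       # who should be alerted?
    escalation_path: tuple   # ordered list of roles to escalate to


# Hypothetical registry; real deployments would load this from a catalog.
REGISTRY = {
    "sales.orders": Ownership(
        owner="sales-data-engineering",
        alert_channel="#sales-data-alerts",
        escalation_path=("on-call engineer", "data platform lead"),
    ),
}

# Fallback so that every dataset has at least one responsible party.
DEFAULT = Ownership(
    owner="data-platform",
    alert_channel="#data-incidents",
    escalation_path=("on-call engineer",),
)


def lookup(dataset):
    """Return the ownership record for a dataset, or the default."""
    return REGISTRY.get(dataset, DEFAULT)


def escalate(dataset, level):
    """Return who to contact at a given escalation level (0-based).

    Levels beyond the end of the path resolve to the last contact.
    """
    path = lookup(dataset).escalation_path
    return path[min(level, len(path) - 1)]
```

For example, `escalate("sales.orders", 1)` resolves to the data platform lead, while an unregistered dataset falls back to the default on-call engineer.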

Data contracts, lineage tracking, and documentation also play a critical role in triage by reducing ambiguity during high-pressure situations. Respectively, they define what producers and consumers expect of a dataset, show which transformations the data passed through and which downstream assets depend on it, and record how past incidents were resolved.
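To show how lineage supports impact assessment, here is a minimal sketch that walks a lineage graph to find everything downstream of a failing dataset. The graph contents and the `gold.` naming prefix are assumptions made for the example; lineage tools usually build and expose this graph automatically.

```python
from collections import deque

# Hypothetical lineage: each dataset maps to its direct downstream consumers.
LINEAGE = {
    "raw.orders": ["staging.orders"],
    "staging.orders": ["gold.daily_revenue", "ml.churn_features"],
    "gold.daily_revenue": ["dashboard.exec_kpis"],
    "ml.churn_features": [],
}


def downstream(lineage, start):
    """Breadth-first search for every asset affected by a failure at start."""
    seen = {start}
    affected = []
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for child in lineage.get(node, []):
            if child not in seen:
                seen.add(child)
                affected.append(child)
                queue.append(child)
    return affected


def gold_first(assets):
    """Move assets with the assumed 'gold.' prefix to the front.

    sorted() is stable, so the original order is kept within each group.
    """
    return sorted(assets, key=lambda name: not name.startswith("gold."))


affected = downstream(LINEAGE, "raw.orders")
print(gold_first(affected))
# ['gold.daily_revenue', 'staging.orders', 'ml.churn_features', 'dashboard.exec_kpis']
```

The `seen` set guards against revisiting nodes, so the traversal also terminates if the graph contains a cycle.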

Best Practices for Continuous Improvement

Beyond tools and processes, a culture of learning and adaptation enhances long-term data incident response.

Regular Training and Awareness Programs

Data teams, engineers, and dataset users alike should be trained on:

- How to detect and report incidents.

- How the triage workflow operates, including the roles involved in reporting and remediating incidents.

- Common causes and prevention techniques.

Workshops, simulations, and post-mortems help build collective resilience and reduce dependency on a few individuals.

Continuous Monitoring and Feedback Loops

Triage is part of a larger lifecycle that includes post-incident reviews. After each incident:

- Conduct a root cause analysis (RCA).

- Update monitoring rules and alert thresholds.

- Capture metrics such as Mean Time to Detect (MTTD) and Mean Time to Resolve (MTTR).

Integrating these insights into ongoing development cycles ensures systems get smarter and more robust over time.
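As a sketch of how these metrics might be computed from incident records, the example below assumes each record stores three timestamps. It also assumes MTTD is measured from occurrence to detection and MTTR from detection to resolution; some teams measure MTTR from occurrence instead, so the definition should be agreed on before comparing numbers.

```python
from datetime import datetime, timedelta


def mean_delta(pairs):
    """Average the time difference across (start, end) timestamp pairs."""
    deltas = [end - start for start, end in pairs]
    return sum(deltas, timedelta()) / len(deltas)


def mttd(incidents):
    """Mean Time to Detect: occurrence -> detection."""
    return mean_delta([(i["occurred"], i["detected"]) for i in incidents])


def mttr(incidents):
    """Mean Time to Resolve: detection -> resolution (assumed definition)."""
    return mean_delta([(i["detected"], i["resolved"]) for i in incidents])


# Invented sample records for illustration only.
incidents = [
    {"occurred": datetime(2024, 1, 8, 9, 0),
     "detected": datetime(2024, 1, 8, 9, 30),
     "resolved": datetime(2024, 1, 8, 11, 30)},
    {"occurred": datetime(2024, 1, 9, 14, 0),
     "detected": datetime(2024, 1, 9, 14, 10),
     "resolved": datetime(2024, 1, 9, 15, 10)},
]

print(mttd(incidents))  # 0:20:00
print(mttr(incidents))  # 1:30:00
```

Tracking both values over time shows whether changes to monitoring rules and alert thresholds are actually shortening detection and resolution.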

Protect Data With Actian’s Data Solutions

Actian offers enterprise-grade solutions to prevent, detect, and respond to data incidents with agility and precision. With its high-performance data integration, real-time analytics, and hybrid cloud capabilities, Actian helps organizations maintain clean, timely, and trustworthy data.

Key features that support triage include the following.

- Real-Time Data Validation: Catch anomalies before they impact dashboards or models.

- Data Lineage and Auditing: Trace the root causes of incidents with ease.

- Scalable Integration Tools: Handle changes in data sources without breaking pipelines.

- Hybrid Deployment Options: Maintain observability across on-prem and cloud systems.

By incorporating Actian into their data ecosystems, organizations equip teams with the tools to detect issues early, triage efficiently, and recover with confidence.