What are the Different Types of Metadata?

Dealing with large volumes of data is essential to any organization’s success. But knowing what kind of data it is, where it comes from, and how it can be used is just as important. This is the role of metadata. So, how can companies optimize and enhance it? Follow this guide.

Data gives an organization in-depth knowledge of its market, industry, customers, and products. But exploiting the full potential of this data requires attention to its metadata. This data about data is a prerequisite for knowing how best to use it. Knowing what generated the data, when, and from which source makes it possible to put the information in context. Metadata is, in short, structured information that describes, explains, or locates an information source, or that makes it easier to access, use, or manage.

However, the role of metadata is not limited to understanding the origin of data.

Properly managed and structured, metadata will also allow organizations to know how to get the most out of the information they have, according to the objectives they’ve set.

How is Metadata Useful?

Metadata is everywhere. Not just in client files or in website archives. When taking a picture with a smartphone, metadata is instantly attached to the image: date, time, location… All this information can be valuable when wanting to create a virtual photo album, for example.

It’s the same in the context of a company’s data project.

Metadata is necessary to understand where data comes from and how it can be used, but that is not its only purpose. When properly managed, metadata is a major lever for organizations seeking to structure and enhance their information on a daily basis. Sound metadata management is therefore the foundation of a data-driven transformation project.

The Different Types of Metadata

Metadata is a generic term for information about data, but it can be classified into different types.

A first distinction is between descriptive metadata, which presents a resource in a way that makes the available data easier to identify, and structural metadata, which provides information on the composition or organization of a data resource. To describe a data portfolio, there is also administrative metadata, which covers the date the data was created or acquired as well as its associated permissions, lifespan, and use.
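As an illustration, the three generic types can be sketched as a small data model. This is a minimal sketch, not a standard schema: the class names, the fields, and the `is_expired` helper are all hypothetical and chosen only to mirror the definitions above.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class DescriptiveMetadata:
    """Helps people identify a resource (hypothetical fields)."""
    title: str
    summary: str
    keywords: list[str] = field(default_factory=list)


@dataclass
class StructuralMetadata:
    """Describes how the resource is composed or organized."""
    file_format: str
    columns: list[str] = field(default_factory=list)


@dataclass
class AdministrativeMetadata:
    """Covers creation or acquisition date, permissions, and lifespan."""
    created_on: date
    allowed_roles: list[str] = field(default_factory=list)
    retention_days: Optional[int] = None  # None means "no defined lifespan"


@dataclass
class ResourceMetadata:
    descriptive: DescriptiveMetadata
    structural: StructuralMetadata
    administrative: AdministrativeMetadata

    def is_expired(self, today: date) -> bool:
        """Return True once the retention period, if any, has elapsed."""
        if self.administrative.retention_days is None:
            return False
        age_in_days = (today - self.administrative.created_on).days
        return age_in_days > self.administrative.retention_days


# Example resource; every value here is made up for illustration.
orders = ResourceMetadata(
    descriptive=DescriptiveMetadata(
        title="Customer orders",
        summary="One row per order placed on the web shop.",
        keywords=["sales", "orders"],
    ),
    structural=StructuralMetadata(
        file_format="csv",
        columns=["order_id", "customer_id", "amount"],
    ),
    administrative=AdministrativeMetadata(
        created_on=date(2023, 1, 1),
        allowed_roles=["analyst"],
        retention_days=365,
    ),
)
```

Keeping the three types in separate classes makes it clear which questions each one answers: what the resource is, how it is laid out, and how long and by whom it may be used.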

Alongside this generic metadata is a wide range of other types. They provide context on the application and business uses of information, cover technical aspects, or reinforce the descriptive dimension of a piece of information.

The larger the volume of data and the more varied the sources it is acquired and collected from, the more companies will benefit from fine-tuned metadata management.

What Tools Manage Metadata?

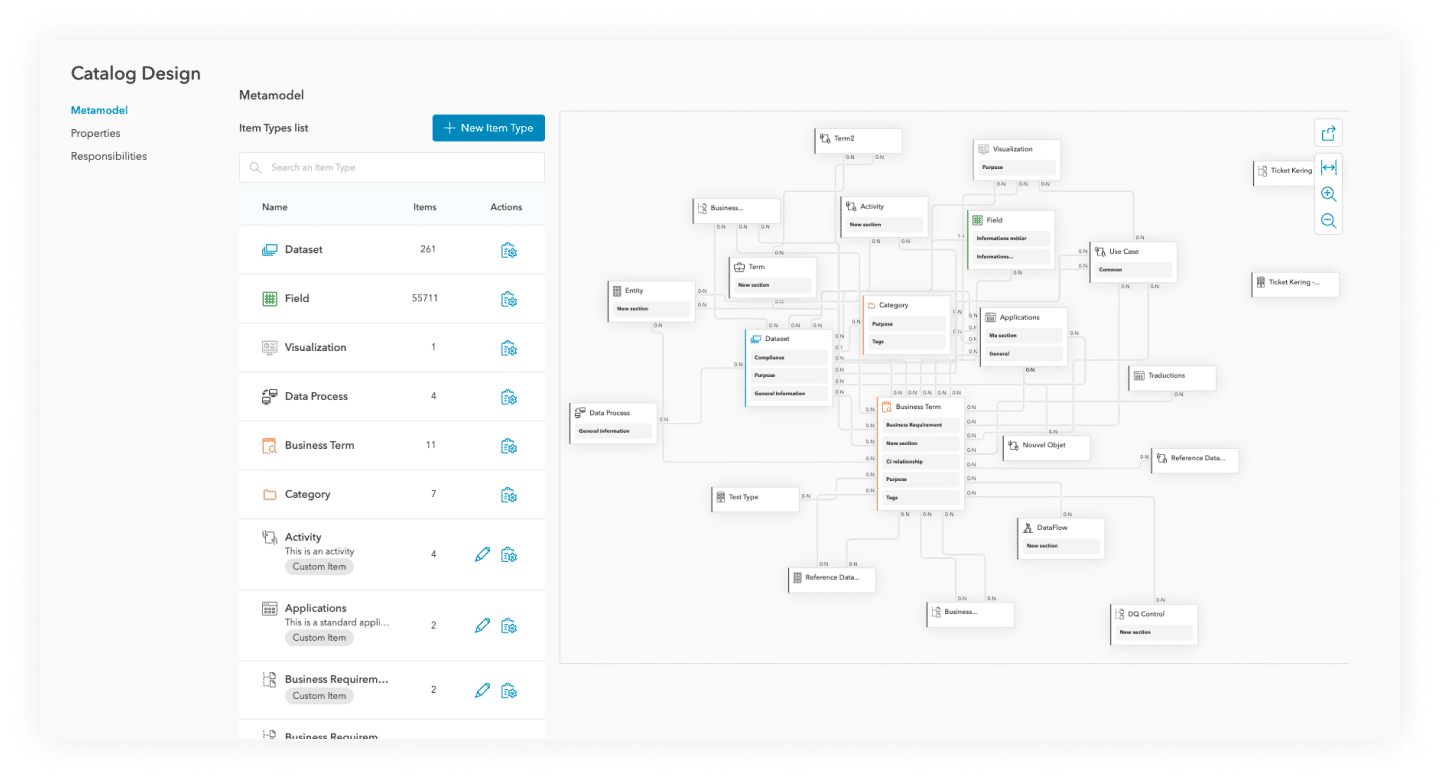

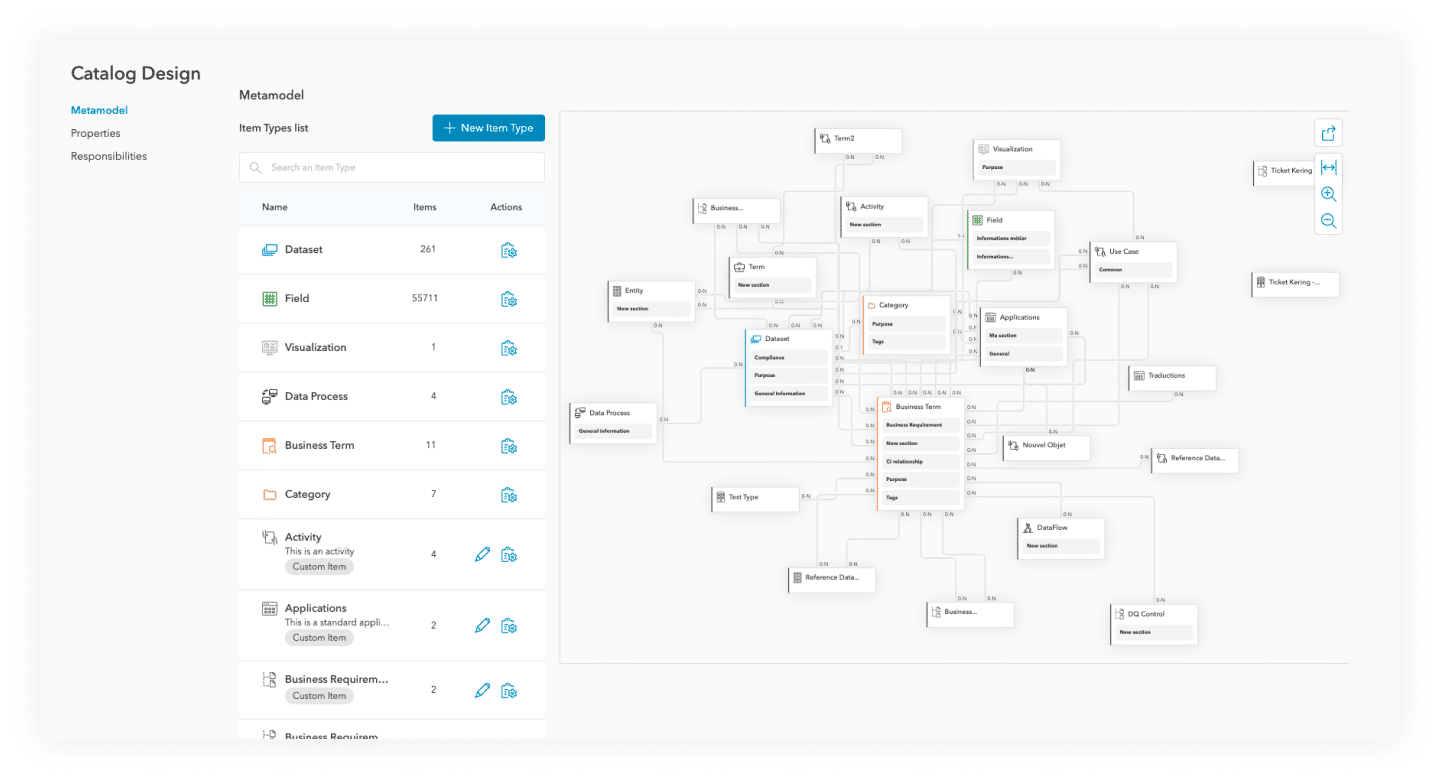

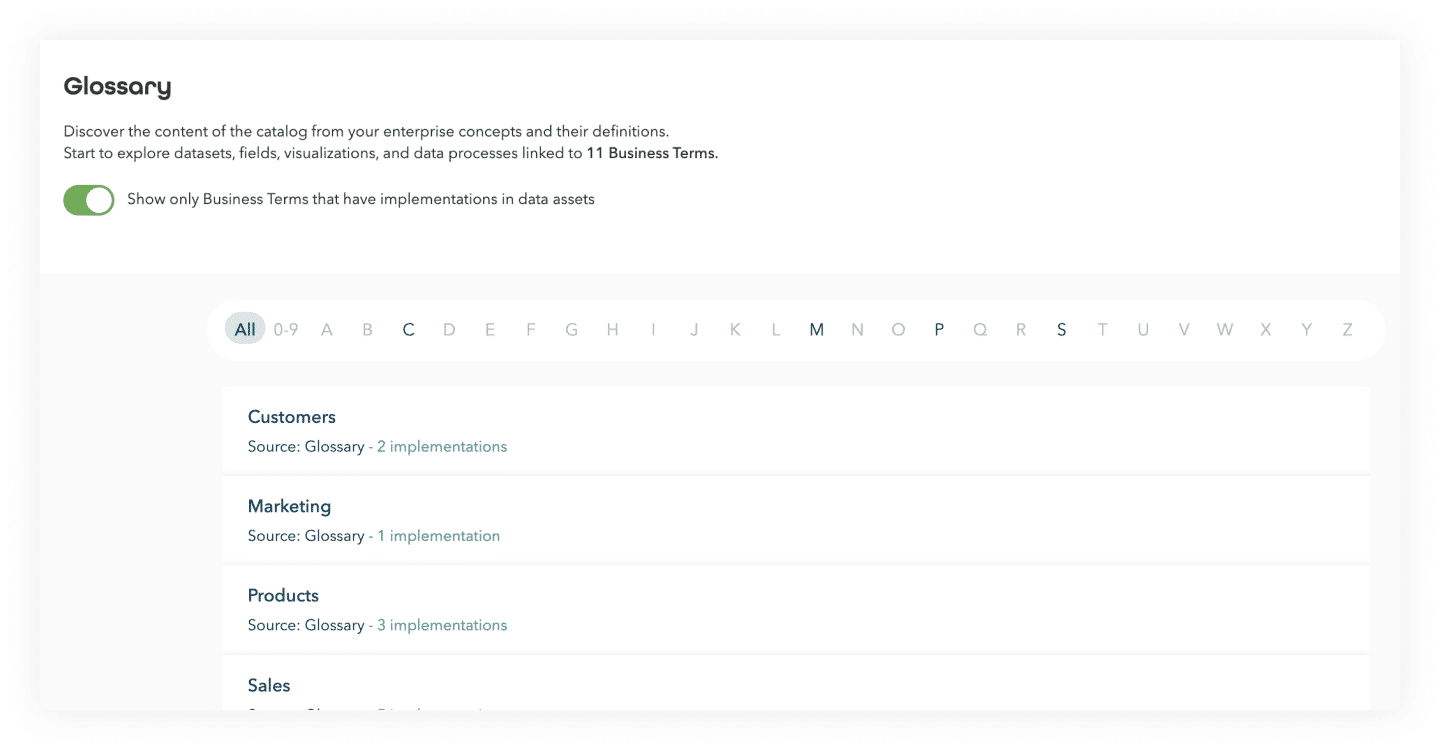

To organize and optimize metadata use for all employees, it is essential to use a Data Catalog. Through this metadata management solution, organizations are able to index their data and metadata as well as quickly identify the sources of information that are available to data teams. But a Data Catalog’s mission goes even further: it will enable companies to reference all their data assets, facilitate data access when needed, and perform thematic searches.

Indeed, the quality of this metadata determines the quality of a dataset’s description, with a direct impact on its visibility and ease of use.

We’ve identified three types of metadata within our data catalog:

- Technical Metadata: It describes the structure of a dataset and the information related to storage systems.

- Business Metadata: It applies business context to datasets: descriptions (context and usage), owners and referents, tags, and properties, which together create a taxonomy over the datasets that will be indexed by our search engine. Business metadata is also present at the schema level of a dataset: descriptions, tags, or data confidentiality level per column.

- Operational Metadata: This allows us to understand when and how the data was created or transformed: statistical analysis of the data, date of update, origin (lineage), volume, cardinality, the identifier of the processes that created or transformed the data, status of the processes on the data, etc.
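To make the three categories concrete, here is a minimal sketch of a catalog entry and a tag-based thematic search. It does not reflect the API of any particular Data Catalog product: `CatalogEntry`, `search_by_tag`, the dictionary keys, and the sample values are all assumptions made for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class CatalogEntry:
    """One dataset in a hypothetical catalog, with its three kinds of metadata."""
    name: str
    technical: dict = field(default_factory=dict)    # structure, storage system
    business: dict = field(default_factory=dict)     # description, owner, tags
    operational: dict = field(default_factory=dict)  # last update, lineage, volume


def search_by_tag(entries, tag):
    """Return the names of entries whose business tags include `tag` (case-insensitive)."""
    wanted = tag.lower()
    return [
        entry.name
        for entry in entries
        if wanted in (t.lower() for t in entry.business.get("tags", []))
    ]


# Sample catalog; all names and values are invented.
catalog = [
    CatalogEntry(
        name="sales.orders",
        technical={
            "storage": "postgresql",
            "columns": {"order_id": "integer", "email": "text"},
        },
        business={
            "description": "Orders placed on the web shop.",
            "owner": "sales-ops",
            "tags": ["Sales", "Revenue"],
            "column_confidentiality": {"email": "restricted"},
        },
        operational={
            "updated_at": "2024-05-01",
            "lineage": ["crm.raw_orders"],
            "row_count": 120000,
        },
    ),
    CatalogEntry(name="hr.employees", business={"tags": ["HR"]}),
]

print(search_by_tag(catalog, "sales"))  # prints ['sales.orders']
```

The search only reads business metadata, which matches the description above: tags and properties build the taxonomy that a search engine indexes, while technical and operational metadata describe how the dataset is stored and how it has changed.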