5 Essential Features for a Five-Star Data Stewardship Program

You have data – and lots of it. However, it is messy, incomplete, and scattered across several different platforms, databases, and even spreadsheets. On top of this, some of your information is inaccessible or, worse, accessible to the wrong people. As the company’s go-to data experts, Data Stewards must be able to answer the who, what, when, where, and why of that data to build a reliable stewardship program.

Unfortunately, Data Stewards face a major roadblock to success: a lack of tools that support their role. With large volumes of data, maintaining documentation, managing enterprise metadata, and tackling quality and governance issues can be very challenging.

This is where the Actian Data Intelligence Platform steps in. Our data intelligence platform and its smart, automated metadata management features make Data Stewards’ work easier. Discover five of these features in this article.

Feature 1: Universal Connectivity

Automatically extract and inventory metadata from your data sources.

As mentioned above, much enterprise data is spread across many different sources, which makes it difficult, even impossible, for Data Stewards to manage and control their data landscape. The Actian Data Intelligence Platform provides a next-generation data cataloging solution that centralizes and unifies all enterprise metadata into a single source of truth. The platform’s wide range of native connectors automatically collects metadata through our APIs and scanners.
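To make “automatically extract and inventory metadata” more concrete, here is a minimal, generic sketch in Python. It is not the platform’s connector API: it simply uses the standard `sqlite3` module to read table and column metadata from a database and flatten it into inventory records. The `ColumnRecord` type and `inventory_sqlite` function are hypothetical names chosen for illustration.

```python
import sqlite3
from dataclasses import dataclass


@dataclass(frozen=True)
class ColumnRecord:
    """One inventory entry: where a column lives and what it looks like."""
    source: str
    table: str
    column: str
    data_type: str
    nullable: bool


def inventory_sqlite(conn: sqlite3.Connection, source: str) -> list[ColumnRecord]:
    """Collect column-level metadata for every user table in a SQLite database."""
    records = []
    tables = conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' "
        "AND name NOT LIKE 'sqlite_%' ORDER BY name"
    ).fetchall()
    for (table,) in tables:
        # PRAGMA table_info yields (cid, name, type, notnull, dflt_value, pk) per column
        for _cid, name, col_type, notnull, _default, _pk in conn.execute(
            f'PRAGMA table_info("{table}")'
        ):
            records.append(ColumnRecord(source, table, name, col_type, not notnull))
    return records


if __name__ == "__main__":
    # Hypothetical source database built in memory for the demo
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, email TEXT NOT NULL)")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)")
    for record in inventory_sqlite(conn, source="crm_db"):
        print(record)
```

Under the same assumptions, scanning several sources would mean running one such extractor per source type and merging the records into a single inventory.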

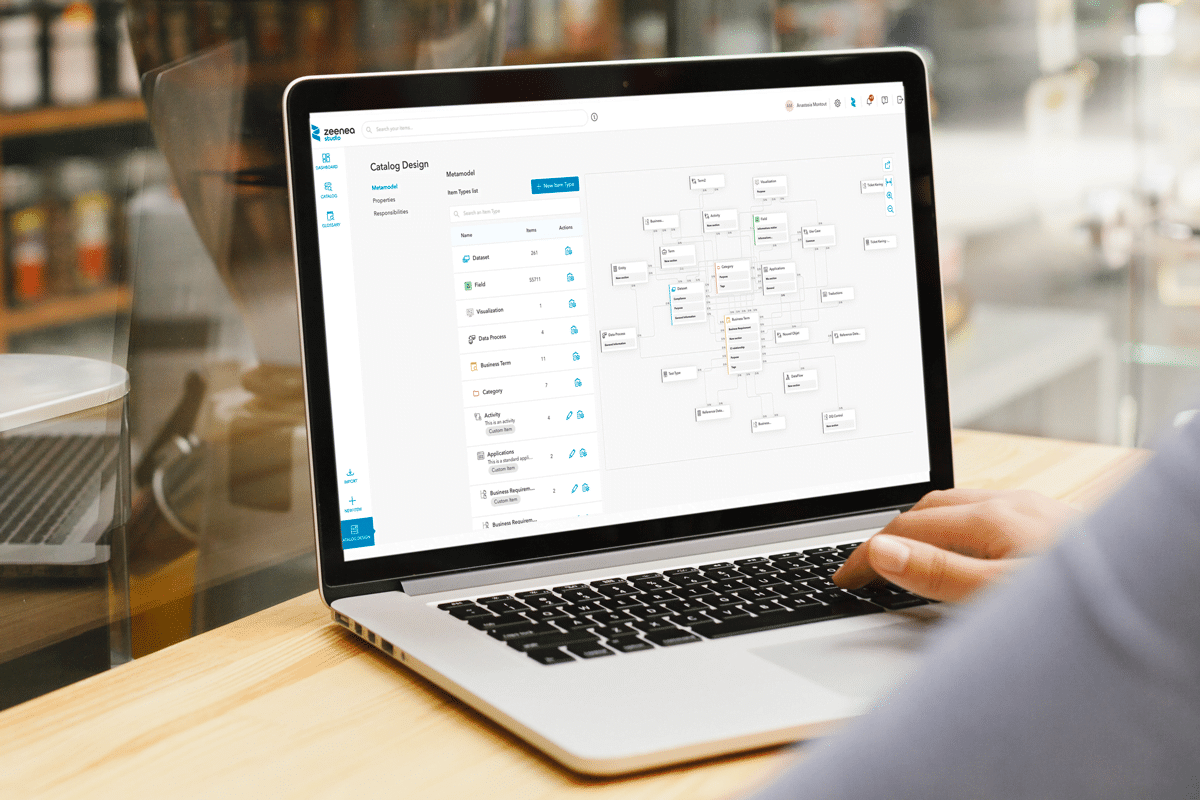

Feature 2: A Flexible & Adaptable Metamodel

Automate data documentation.

Documenting information can be extremely time-consuming: there are sometimes thousands of properties, fields, and other metadata to fill in before business teams fully understand, and have the necessary context for, the data they are consulting. The Actian Data Intelligence Platform provides a flexible, adaptable way to build metamodel templates for both pre-configured objects (datasets, fields, data processes, etc.) and an unlimited number of custom objects (procedures, rules, KPIs, regulations, etc.).

Import or create your documentation templates by simply dragging and dropping your existing properties, tags, and other custom metadata into them. Made a mistake in your template? No problem! Add, remove, or modify properties and sections as you please: your items are automatically updated once you’ve finished editing the template.

Feature 3: Automatic Data Lineage

Trace your data transformations.

For Data Stewards to build accurate and trustworthy compliance reports, data lineage capabilities are essential. Many software vendors offer lineage capabilities, but few truly understand it. Through a visual, easy-to-interpret lineage graph, the Actian Data Intelligence Platform lets your users navigate the lifecycle of their data. Click on any item to see an overview of its documentation, its relations to other assets, and its metadata, for a 360° view of your catalog items.
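Conceptually, a lineage graph can be treated as a directed graph of assets. The sketch below is an illustration under that assumption, not the platform’s implementation: `lineage` is a hypothetical mapping from each asset to the assets it is directly derived from, and a breadth-first traversal lists everything upstream of a given asset. Inverting the edges gives downstream impact instead.

```python
from collections import deque


def upstream(lineage: dict[str, list[str]], asset: str) -> list[str]:
    """List every asset that feeds into `asset`, nearest first (breadth-first)."""
    seen: set[str] = set()
    order: list[str] = []
    queue = deque(lineage.get(asset, []))
    while queue:
        current = queue.popleft()
        if current in seen:
            continue  # reached again via another path (or a cycle)
        seen.add(current)
        order.append(current)
        queue.extend(lineage.get(current, []))
    return order


def invert(lineage: dict[str, list[str]]) -> dict[str, list[str]]:
    """Flip edges so each asset maps to the assets derived from it."""
    downstream: dict[str, list[str]] = {}
    for target, sources in lineage.items():
        for source in sources:
            downstream.setdefault(source, []).append(target)
    return downstream


if __name__ == "__main__":
    # Hypothetical catalog: each asset -> the assets it is built from
    lineage = {
        "revenue_report": ["sales_mart"],
        "sales_mart": ["orders_clean", "customers_clean"],
        "orders_clean": ["orders_raw"],
        "customers_clean": ["crm_export"],
    }
    print(upstream(lineage, "revenue_report"))
    # ['sales_mart', 'orders_clean', 'customers_clean', 'orders_raw', 'crm_export']
    print(upstream(invert(lineage), "orders_raw"))
    # ['orders_clean', 'sales_mart', 'revenue_report']
```

Tracking `seen` keeps the traversal finite even if the catalog contains a cycle.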

Feature 4: Smart Suggestions

Quickly identify personal data.

With the GDPR, the California Consumer Privacy Act (CCPA), and other regulations governing the security and privacy of individuals’ information, it can be a hassle to go through every existing dataset to make sure personal data is correctly flagged. To keep your information correctly labeled, the Actian Data Intelligence Platform analyzes similarities with data already tagged as personal and suggests which other fields should be tagged as “personal data”. Data Stewards can accept, ignore, or delete these suggestions directly from their dashboard.
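The article does not describe how the similarity analysis works, so the following is only an assumed heuristic: it compares column names against fields already tagged as personal data using `difflib.SequenceMatcher` from Python’s standard library and suggests the closest matches above a threshold. The function names and the 0.8 threshold are hypothetical, and a fuller approach could also compare data values rather than names alone.

```python
from difflib import SequenceMatcher


def normalize(name: str) -> str:
    """Lower-case and drop separators so 'Email_Address' matches 'emailaddress'."""
    return "".join(ch for ch in name.lower() if ch.isalnum())


def suggest_personal(
    tagged: set[str], untagged: list[str], threshold: float = 0.8
) -> list[tuple[str, str, float]]:
    """Suggest untagged fields whose names resemble a field already tagged as personal."""
    suggestions = []
    for field in untagged:
        best_match, best_score = None, 0.0
        for known in tagged:
            score = SequenceMatcher(None, normalize(field), normalize(known)).ratio()
            if score > best_score:
                best_match, best_score = known, score
        if best_match is not None and best_score >= threshold:
            suggestions.append((field, best_match, round(best_score, 2)))
    # Highest-confidence suggestions first
    return sorted(suggestions, key=lambda s: s[2], reverse=True)


if __name__ == "__main__":
    already_personal = {"email_address", "phone_number"}
    candidates = ["EmailAddress", "Phone_Number_2", "order_total"]
    print(suggest_personal(already_personal, candidates))
    # [('EmailAddress', 'email_address', 1.0), ('Phone_Number_2', 'phone_number', 0.96)]
```

Each suggestion keeps the matched field, so a Data Steward can see why it was proposed before accepting or ignoring it.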

Feature 5: An Effective Permission Sets Model

Ensure the right people are accessing the right data.

For organizations with many types of users accessing their data landscape, it doesn’t make sense to give everyone full rights to modify anything and everything, especially when sensitive or personal information is involved. For this reason, the Actian Data Intelligence Platform features an effective permission sets model that helps Data Stewards increase efficiency across the organization and reduce the risk of errors. Assign read-only, edit, and admin rights to all or specific parts of the catalog, both to keep the catalog secure and to save time when data consumers need to find an asset’s referent.
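A permission sets model of this kind can be sketched as ordered rights granted per catalog section. The Python below is a hypothetical illustration, not the platform’s actual model: `Right`, `GRANTS`, `effective_right`, and `can` are invented names, and `"*"` stands for the whole catalog.

```python
from enum import IntEnum
from typing import Optional


class Right(IntEnum):
    """Ordered rights: a higher value implies every lower one."""
    READ = 1
    EDIT = 2
    ADMIN = 3


# Hypothetical grants: user -> {catalog section, or "*" for the whole catalog: right}
GRANTS: dict[str, dict[str, Right]] = {
    "alice": {"*": Right.ADMIN},
    "bob": {"*": Right.READ, "finance": Right.EDIT},
    "carol": {"marketing": Right.READ},
}


def effective_right(user: str, section: str) -> Optional[Right]:
    """Most specific grant wins: a section grant overrides the catalog-wide one."""
    grants = GRANTS.get(user, {})
    return grants.get(section, grants.get("*"))


def can(user: str, section: str, needed: Right) -> bool:
    """True if the user's effective right on the section is at least `needed`."""
    right = effective_right(user, section)
    return right is not None and right >= needed


if __name__ == "__main__":
    print(can("bob", "finance", Right.EDIT))    # True: section-specific edit right
    print(can("bob", "hr", Right.EDIT))         # False: only catalog-wide read
    print(can("carol", "finance", Right.READ))  # False: no grant for this section
```

In this sketch a section-specific grant overrides the catalog-wide one, so a user can be given broad read access plus edit rights on a single domain; taking the maximum of both grants would be an equally reasonable design choice.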

Ready to Start Your Data Stewardship Program?

If you’re interested in the Actian Data Intelligence Platform’s features for your data documentation & data stewardship needs, contact us for a demo with one of our data experts.