Can AI in Manufacturing Work Without the Cloud?

Summary

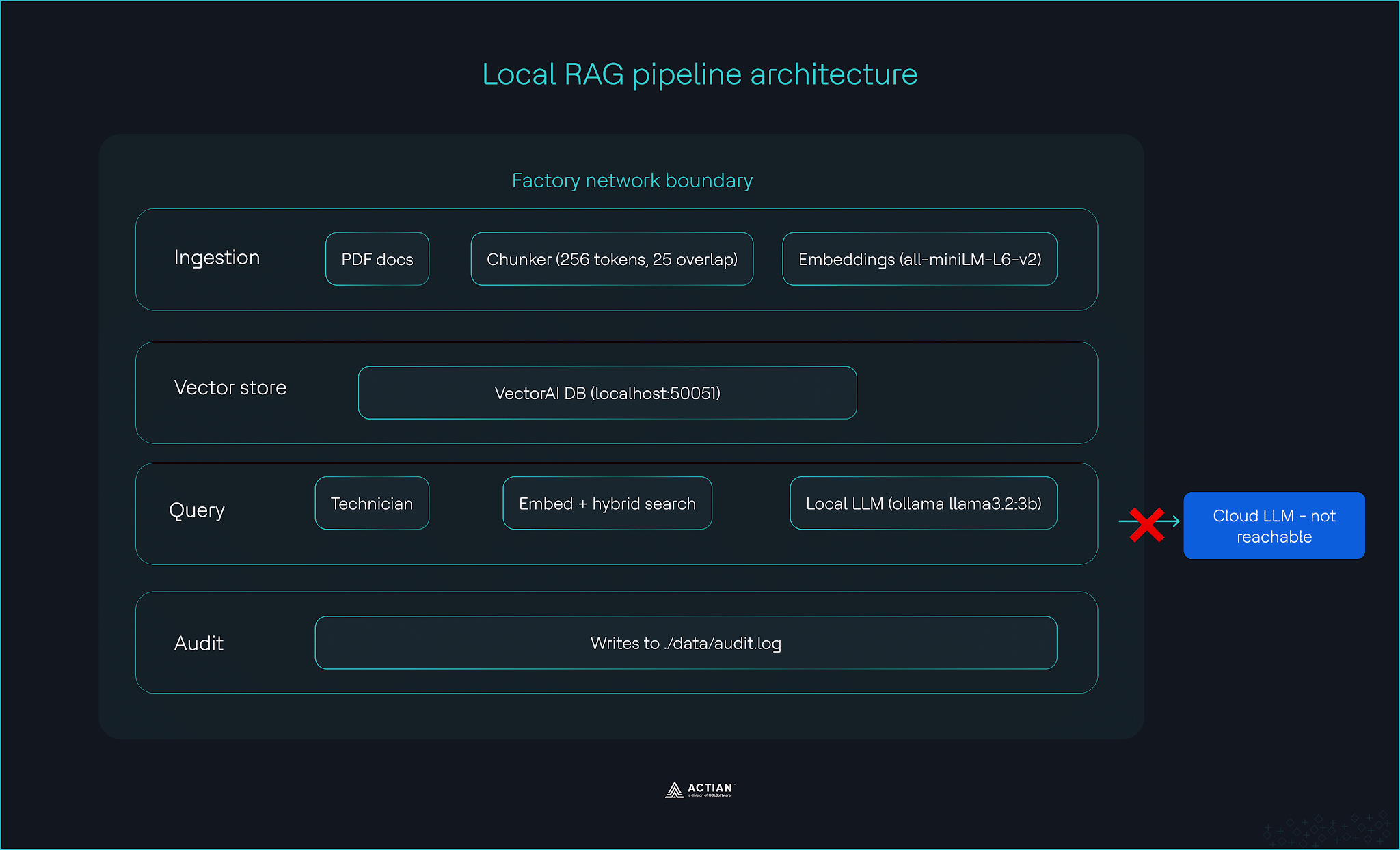

- This tutorial shows how to build a fully local RAG pipeline for manufacturing environments where cloud access is restricted by network architecture, latency needs, cost, and regulatory constraints.

- The pipeline has three layers: ingestion of PDF maintenance records into a vector database, query-time retrieval with metadata filters, and a local LLM that generates cited answers from the retrieved context.

- It runs entirely on factory-floor hardware using VectorAI DB, sentence-transformers for embeddings, and Ollama for local language model inference.

- It also includes practical features such as equipment-line and date filtering, audit logging for traceability, and local operation during outages with no internet dependency.

- The main takeaway is that AI-powered maintenance search can run securely inside operational technology environments, giving technicians fast, grounded answers from historical records without sending data outside the plant.

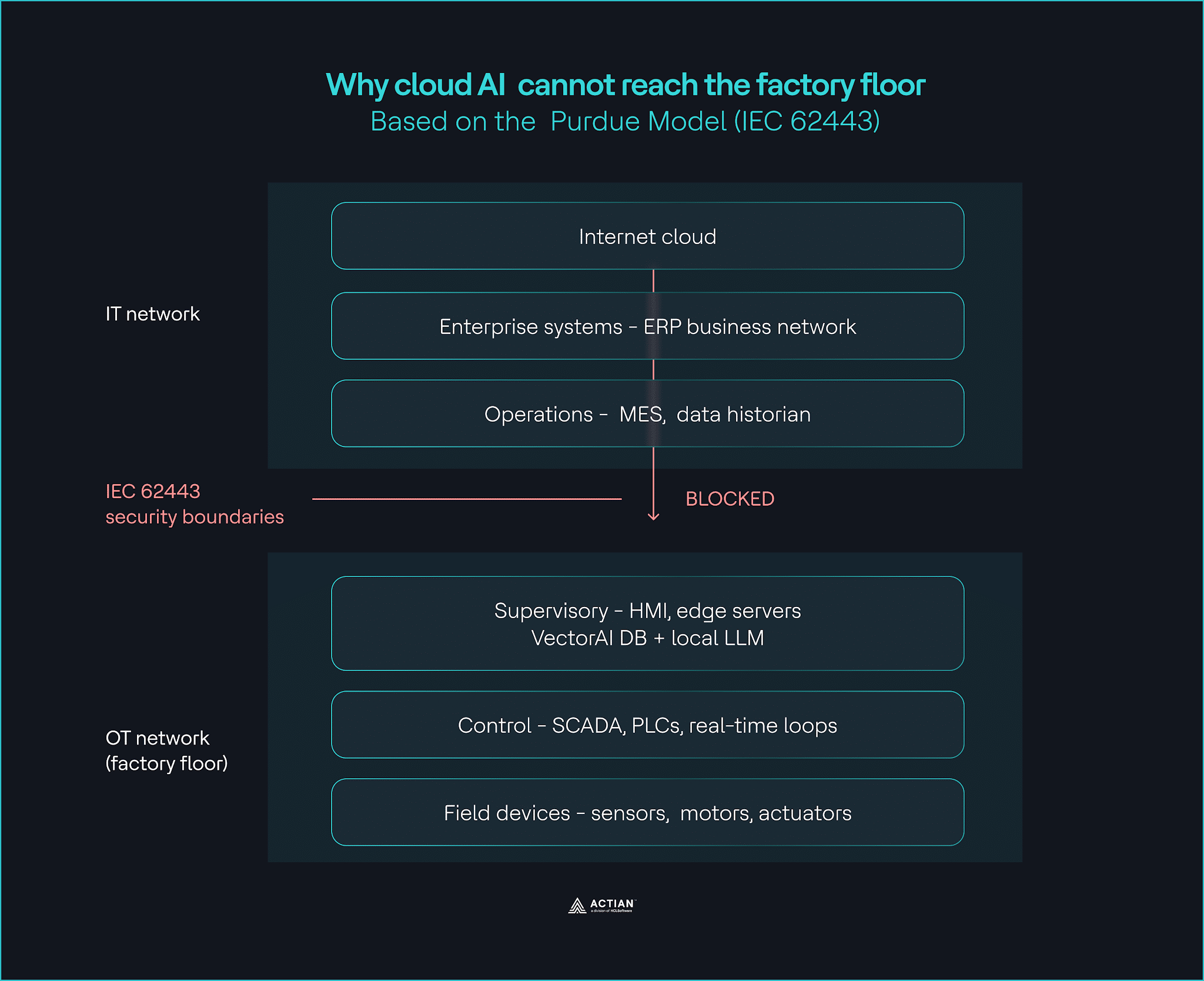

Keeping external traffic out of operational networks is a best practice that most manufacturing facilities build into their architecture from the ground up.

Most manufacturing networks follow the Purdue Model, a layered reference architecture (Levels 0 through 5) that has shaped industrial network design for decades. At the bottom are the physical machines and their controls: sensors, motors, and actuators at Level 0; real-time controllers such as PLCs at Level 1; and supervisory servers, SCADA, and HMI systems at Level 2. Level 3 manages site operations. Levels 4 and 5 connect to the enterprise network and to the internet.

IEC 62443 defines strict boundaries between these levels, so traffic from Level 2 does not reach the internet. For defense contractors, ITAR adds a further constraint: technical data must stay on U.S. soil and remain accessible only to U.S. persons. Cloud-hosted vector databases like Pinecone, Weaviate Cloud, and Qdrant Cloud fail both requirements. A server at Level 2 has no route to send a request to them, and the data would leave the plant if it did. Other industries learned this lesson the hard way.

Latency is the second obstacle. Cloud round-trips average 50 to 500 milliseconds, while PLC-level control loops require responses in under 10 milliseconds. Teams that need AI to keep working during network outages use edge deployment patterns designed for disconnected environments.

Cost adds another layer. AWS standard egress starts at $0.09 per GB. At any serious production scale, sensor and vision data add up quickly, and the bill arrives faster than most teams expect.

Architecture, latency, and cost all point in the same direction. AI on the factory floor needs to run where the data lives.

This tutorial shows you how to build a local RAG pipeline that runs entirely on factory-floor hardware, where a technician can ask a question about any piece of equipment and get a cited answer from decades of maintenance records, with no internet connection required.

What You Are Building

You’ll build a three-layer RAG pipeline that runs fully inside your factory network. The ingestion layer processes PDF maintenance documents and stores them in Actian VectorAI DB. The query layer takes a technician’s question and returns a cited answer fast enough for interactive use on factory-floor hardware.

- Ingestion: Reads the PDF maintenance documents, splits them into 256-token chunks with a 25-token overlap, generates embeddings using sentence-transformers on a CPU, and stores everything in VectorAI DB with metadata for equipment line, document date, and source file.

- Query: Takes a technician’s question, embeds it with the same model, runs a hybrid search in VectorAI DB filtered by equipment line and date range, and sends the top results to a local LLM running with Ollama, which generates a cited answer in plain English.

- Audit: Logs every ingestion and query event as a structured JSON entry to ./data/audit.log, timestamped in UTC, and stored inside your security boundary to satisfy IEC 62443 traceability requirements.

VectorAI DB sits at the center of the pipeline. It stores the embeddings that the ingestion layer produces and serves the searches that the query layer runs. Running it on-premises instead of in the cloud keeps the whole pipeline inside your security boundary.

The pipeline runs on standard factory edge server hardware, with Ubuntu 22.04 LTS, 16 GB of RAM, and a 4-core CPU.

Building a Local RAG Pipeline With VectorAI DB

Set up VectorAI DB, build the ingestion pipeline, run your first query, add hybrid filters, and connect a local LLM.

Prerequisites

Sign up for the VectorAI DB community edition before you start, then make sure you have these installed:

- Docker and Docker Compose

- Python 3.10 or higher

- uv package manager. Install with:

curl -LsSf https://astral.sh/uv/install.sh | sh

- Ollama. Install from Ollama.com and pull the model with:

ollama pull llama3.2:3b

Your machine needs at least 8 GB RAM (16 GB or more recommended) and 10 GB of disk space (100 GB or more recommended) to run VectorAI DB. If you’re on Windows, the uv install command needs 'sh', which PowerShell doesn’t have. Run all commands in WSL2 (Windows Subsystem for Linux). To set up WSL2, run 'wsl --install' in PowerShell, then use the Ubuntu terminal for this tutorial.
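Before continuing, it can help to confirm that the prerequisites are actually on your PATH. The following is a minimal sketch rather than part of the tutorial's code: the helper names are hypothetical, and it assumes the tools are installed under the command names docker, uv, and ollama.

```python
import shutil
import sys

# Command names assumed to match the installs listed above.
REQUIRED_TOOLS = ["docker", "uv", "ollama"]
MIN_PYTHON = (3, 10)


def missing_tools(tools, which=shutil.which):
    """Return the tools that cannot be found on PATH."""
    return [tool for tool in tools if which(tool) is None]


def python_ok(version=sys.version_info, minimum=MIN_PYTHON):
    """True when the given interpreter version meets the minimum."""
    return tuple(version[:2]) >= minimum


if __name__ == "__main__":
    missing = missing_tools(REQUIRED_TOOLS)
    print("Missing tools:", ", ".join(missing) if missing else "none")
    print("Python 3.10+:", "yes" if python_ok() else "no")
```

The script only reports what it finds. It does not check versions of Docker, uv, or Ollama, and it does not verify that Docker Compose is available.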

Project structure

Set up your project folder like this:

factory-rag/
├── docker-compose.yml
├── data/
│   └── audit.log
├── config/
└── src/
    ├── healthcheck.py
    ├── ingest.py
    ├── query.py
    ├── llm.py
    ├── audit.py
    └── test_e2e.py

Create the directories:

mkdir -p factory-rag/{data,config,src}
cd factory-rag

Step 1: Deploy VectorAI DB

Create docker-compose.yml in your project root:

services:
  vectorai-db:
    image: williamimoh/actian-vectorai-db:latest
    platform: linux/amd64
    container_name: vectorai-db
    ports:
      - "50051:50051"
    volumes:
      - ./data:/data
      - ./config:/config
    restart: unless-stopped
    healthcheck:
      test: ["CMD-SHELL", "nc -z localhost 50051 || exit 1"]
      interval: 10s
      timeout: 5s
      retries: 5
      start_period: 15s

Start the container with:

docker compose up -d

Install the SDK from the wheel file. uv add needs a project to add to, so run uv init first if the folder has no pyproject.toml yet:

uv init
uv add actian_vectorai-0.1.0b2-py3-none-any.whl

Install these required libraries:

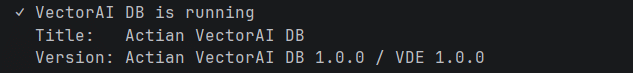

uv add sentence-transformers pypdf

Check that the server is running. Create a file called src/healthcheck.py:

from actian_vectorai import VectorAIClient

# Connect to the port published in docker-compose.yml.
with VectorAIClient("localhost:50051") as client:
    info = client.health_check()
    print("✓ VectorAI DB is running")
    print(f" Title: {info['title']}")
    print(f" Version: {info['version']}")

Run the script:

uv run python src/healthcheck.py

The script prints a confirmation line followed by the server's title and version.
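If the health check fails immediately after docker compose up -d, the container may still be starting. The sketch below mirrors the Compose healthcheck's port probe in plain Python by polling the TCP port until it accepts a connection. wait_for_port is a hypothetical helper, not part of the VectorAI SDK, and an open port only shows that something is listening, not that the database is fully ready.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout_s: float = 30.0, interval_s: float = 1.0) -> bool:
    """Poll until a TCP connection to host:port succeeds or timeout_s elapses."""
    deadline = time.monotonic() + timeout_s
    while True:
        try:
            # A successful connect means a process is listening on the port.
            with socket.create_connection((host, port), timeout=interval_s):
                return True
        except OSError:
            if time.monotonic() >= deadline:
                return False
            time.sleep(interval_s)
```

Calling wait_for_port("localhost", 50051) before the health check gives the container up to 30 seconds to open its port, and returns False if it never does.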

Step 2: Build the ingestion pipeline

Put your PDF maintenance documents in the data/ folder before running this step. Add any equipment maintenance records, inspection reports, or failure logs there.

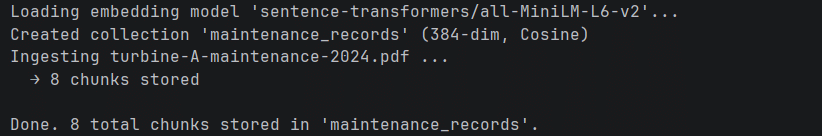

The pipeline uses sentence-transformers/all-MiniLM-L6-v2, which needs less than 200 MB of RAM on CPU. We split text into 256-token chunks with a 25-token overlap to keep enough context for good retrieval.
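To get a feel for how many chunks a document produces, note that each new chunk advances by 256 - 25 = 231 tokens. The sketch below works that arithmetic out for a sliding window of this kind. expected_chunks is a hypothetical helper for estimation only, and it assumes no chunk is dropped for being empty after decoding.

```python
import math

CHUNK_TOKENS = 256
OVERLAP_TOKENS = 25


def expected_chunks(n_tokens: int, chunk_size: int = CHUNK_TOKENS, overlap: int = OVERLAP_TOKENS) -> int:
    """Number of windows a sliding-window chunker produces for n_tokens tokens."""
    if n_tokens <= 0:
        return 0
    if n_tokens <= chunk_size:
        return 1
    stride = chunk_size - overlap  # tokens of new text contributed by each later chunk
    return 1 + math.ceil((n_tokens - chunk_size) / stride)


if __name__ == "__main__":
    for n in (200, 257, 10_000):
        print(f"{n} tokens -> {expected_chunks(n)} chunks")
```

A 10,000-token document yields 44 chunks under these settings, so a few hundred maintenance PDFs stay well within what a CPU can embed in one batch run.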

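Create src/ingest.py. It imports log_ingestion from src/audit.py, so create that file (shown in Step 6) before you run the ingestion: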

from __future__ import annotations

import argparse
import uuid
from pathlib import Path

from actian_vectorai import Distance, PointStruct, VectorAIClient, VectorParams
from pypdf import PdfReader
from sentence_transformers import SentenceTransformer

from audit import log_ingestion

COLLECTION = "maintenance_records"
HOST = "localhost:50051"
MODEL_NAME = "sentence-transformers/all-MiniLM-L6-v2"
VECTOR_DIM = 384
CHUNK_TOKENS = 256
OVERLAP_TOKENS = 25


def chunk_text(text, tokenizer, chunk_size=CHUNK_TOKENS, overlap=OVERLAP_TOKENS):
    """Split text into overlapping token windows and decode each window back to text."""
    token_ids = tokenizer.encode(text, add_special_tokens=False)
    chunks = []
    start = 0
    while start < len(token_ids):
        end = min(start + chunk_size, len(token_ids))
        window = token_ids[start:end]
        decoded = tokenizer.decode(window, skip_special_tokens=True).strip()
        if decoded:
            chunks.append(decoded)
        if end >= len(token_ids):
            break
        # Step forward, keeping `overlap` tokens of shared context.
        start += chunk_size - overlap
    return chunks


def ingest_pdf(pdf_path, equipment_line, doc_date, model, client):
    """Extract, chunk, embed, and store one PDF. Returns the number of chunks stored."""
    reader = PdfReader(str(pdf_path))
    full_text = "\n".join(page.extract_text() or "" for page in reader.pages)
    if not full_text.strip():
        print(f" [warn] No extractable text in {pdf_path.name}, skipping.")
        return 0

    tokenizer = model.tokenizer
    chunks = chunk_text(full_text, tokenizer)

    points = []
    for idx, chunk in enumerate(chunks):
        embedding = model.encode(chunk, show_progress_bar=False).tolist()
        points.append(
            PointStruct(
                # Deterministic ID: re-ingesting the same file overwrites its chunks.
                id=str(uuid.uuid5(uuid.NAMESPACE_DNS, f"{pdf_path.name}:{idx}")),
                vector=embedding,
                payload={
                    "equipment_line": equipment_line,
                    "doc_date": doc_date,
                    "source_file": pdf_path.name,
                    "text": chunk,
                    "chunk_index": idx,
                },
            )
        )

    if points:
        client.points.upsert(COLLECTION, points)
    return len(points)


def main(data_dir, equipment_line, doc_date):
    data_path = Path(data_dir)
    pdfs = sorted(data_path.glob("*.pdf"))
    if not pdfs:
        print(f"No PDF files found in '{data_dir}'. Add PDFs to ./data/ and retry.")
        return

    print(f"Loading embedding model '{MODEL_NAME}'...")
    model = SentenceTransformer(MODEL_NAME)

    with VectorAIClient(HOST) as client:
        # Create the collection on first run; later runs append to it.
        if not client.collections.exists(COLLECTION):
            client.collections.create(
                COLLECTION,
                vectors_config=VectorParams(size=VECTOR_DIM, distance=Distance.Cosine),
            )
            print(f"Created collection '{COLLECTION}' ({VECTOR_DIM}-dim, Cosine)")
        else:
            print(f"Collection '{COLLECTION}' already exists, appending chunks.")

        total = 0
        for pdf_path in pdfs:
            print(f"Ingesting {pdf_path.name} ...")
            count = ingest_pdf(pdf_path, equipment_line, doc_date, model, client)
            print(f" → {count} chunks stored")
            log_ingestion(pdf_path.name, equipment_line, count)
            total += count

        print(f"\nDone. {total} total chunks stored in '{COLLECTION}'.")


if __name__ == "__main__":
    parser = argparse.ArgumentParser(description="Ingest PDFs into VectorAI DB")
    parser.add_argument("--data-dir", default="./data")
    parser.add_argument("--equipment-line", required=True)
    parser.add_argument("--doc-date", required=True)
    args = parser.parse_args()
    main(args.data_dir, args.equipment_line, args.doc_date)

Run the ingestion step:

uv run python src/ingest.py --equipment-line turbine-A --doc-date 2024-03-15

The script reports each PDF as it is ingested, the number of chunks stored for it, and the total at the end.

The metadata schema saves the equipment line, document date, and source file with each chunk. This lets you filter searches by equipment line or date range without searching the whole collection.
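Date-range filtering is only as reliable as the doc_date values you store. Zero-padded YYYY-MM-DD strings sort in chronological order, which matters if the range filter compares them as strings (an assumption here, since that depends on how VectorAI DB handles string ranges). The sketch below is a hypothetical guard you could call before ingestion; is_iso_date is not part of the tutorial's code.

```python
from datetime import datetime


def is_iso_date(value: str) -> bool:
    """True only for zero-padded YYYY-MM-DD strings that name a real calendar date."""
    try:
        parsed = datetime.strptime(value, "%Y-%m-%d")
    except ValueError:
        return False
    # strptime tolerates "2024-3-15"; the round trip rejects non-padded input.
    return parsed.date().isoformat() == value


if __name__ == "__main__":
    for candidate in ("2024-03-15", "2024-3-15", "2024-02-30", "15/03/2024"):
        print(candidate, "->", is_iso_date(candidate))
```

Rejecting anything that fails this check at ingestion time keeps every stored date in one comparable format.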

Step 3: Run your first query

Your ingestion pipeline has stored the maintenance records in VectorAI DB, so the pipeline can now answer questions. When a technician asks something in plain English, the pipeline embeds the question, searches the maintenance_records collection, and returns the top five most relevant chunks with similarity scores.

Create src/query.py. Like ingest.py, it imports its logging function from src/audit.py (shown in Step 6):

from __future__ import annotations

import argparse
import time

from actian_vectorai import Field, FilterBuilder, VectorAIClient
from sentence_transformers import SentenceTransformer

from audit import log_query

COLLECTION = "maintenance_records"
HOST = "localhost:50051"
MODEL_NAME = "sentence-transformers/all-MiniLM-L6-v2"
TOP_K = 5


def build_filter(equipment_line=None, doc_date=None, doc_date_to=None):
    """Build a metadata filter, or return None when no filter was requested."""
    fb = FilterBuilder()
    if equipment_line:
        fb.must(Field("equipment_line").eq(equipment_line))
    if doc_date and doc_date_to:
        # Both dates given: match an inclusive range.
        fb.must(Field("doc_date").range(gte=doc_date, lte=doc_date_to))
    elif doc_date:
        # Only a start date given: match that exact date.
        fb.must(Field("doc_date").eq(doc_date))
    return fb.build() if (equipment_line or doc_date) else None


def search(question, equipment_line=None, doc_date=None, doc_date_to=None):
    """Embed the question and return the top matches as plain dicts."""
    model = SentenceTransformer(MODEL_NAME)
    embedding = model.encode(question, show_progress_bar=False).tolist()
    query_filter = build_filter(equipment_line, doc_date, doc_date_to)

    with VectorAIClient(HOST) as client:
        hits = client.points.search(
            COLLECTION,
            vector=embedding,
            limit=TOP_K,
            filter=query_filter,
        )

    return [
        {
            "score": round(r.score, 4),
            "source_file": r.payload.get("source_file", ""),
            "equipment_line": r.payload.get("equipment_line", ""),
            "doc_date": r.payload.get("doc_date", ""),
            "chunk_index": r.payload.get("chunk_index", -1),
            "text": r.payload.get("text", ""),
        }
        for r in hits
    ]


def main():
    parser = argparse.ArgumentParser(description="Search maintenance records")
    parser.add_argument("question", help="Natural language question")
    parser.add_argument("--equipment-line", default=None)
    parser.add_argument("--doc-date", default=None)
    parser.add_argument("--doc-date-to", default=None)
    args = parser.parse_args()

    # Time the whole search, including model load, for the audit log.
    start = time.monotonic()
    results = search(args.question, equipment_line=args.equipment_line,
                     doc_date=args.doc_date, doc_date_to=args.doc_date_to)
    latency_ms = (time.monotonic() - start) * 1000
    log_query(args.question, args.equipment_line or "", results, latency_ms)

    if not results:
        print("No results found.")
        return

    print(f"Top {len(results)} results for: \"{args.question}\"\n")
    for i, r in enumerate(results, 1):
        print(f"[{i}] score={r['score']:.4f} {r['source_file']} "
              f"(chunk {r['chunk_index']}) {r['doc_date']} {r['equipment_line']}")
        print(f" {r['text'][:200].strip()}...")
        print()


if __name__ == "__main__":
    main()

Try your first query:

uv run python src/query.py "What caused the bearing failure?"

The search uses the same model as ingestion to embed the query, which keeps the query and the stored vectors in the same semantic space. For maintenance records with this model, similarity scores between 0.4 and 0.6 typically indicate relevant matches.
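To make those scores less abstract, here is a minimal sketch of cosine similarity in plain Python. It assumes the score returned by the search is the cosine similarity between the query vector and each stored vector, which is suggested by the collection's Distance.Cosine setting but not confirmed here. The two-dimensional vectors are toy values, not real embeddings.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


if __name__ == "__main__":
    print(cosine_similarity([1.0, 0.0], [1.0, 0.0]))  # same direction
    print(cosine_similarity([1.0, 0.0], [0.0, 1.0]))  # orthogonal
    print(cosine_similarity([1.0, 1.0], [1.0, 0.0]))  # 45 degrees apart
```

Identical directions score 1.0 and unrelated (orthogonal) directions score 0.0, so a score near 0.5 means the question and the chunk share a good deal of meaning without being near-duplicates.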

Step 4: Add hybrid filters

Filtering by equipment line and date helps keep search results relevant to the technician’s current work. Run the same query from Step 3, but add these filters:

uv run python src/query.py "What caused the bearing failure?" --equipment-line turbine-A

Add a date filter to narrow the results even more:

uv run python src/query.py "What caused the bearing failure?" --equipment-line turbine-A --doc-date 2024-03-15

The output lists up to five matches, each with its score, source file, chunk index, document date, and equipment line.

The build_filter function constructs a FilterBuilder query that combines vector similarity with exact metadata matching. A technician working on turbine-A only sees results from that equipment line, not from the entire maintenance history.
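The filter rules can be illustrated without a database. The sketch below applies the same three rules as build_filter to plain payload dicts: an exact match on equipment line, an inclusive range when both dates are given, and an exact date match when only one is given. matches is a hypothetical helper, the sample records are invented, and the string comparison of dates is an assumption about how the range filter behaves.

```python
def matches(payload, equipment_line=None, doc_date=None, doc_date_to=None):
    """Return True when a payload dict passes the same rules build_filter encodes."""
    if equipment_line and payload.get("equipment_line") != equipment_line:
        return False
    if doc_date and doc_date_to:
        # Inclusive range; YYYY-MM-DD strings compare in chronological order.
        return doc_date <= payload.get("doc_date", "") <= doc_date_to
    if doc_date:
        return payload.get("doc_date") == doc_date
    return True


if __name__ == "__main__":
    # Invented sample payloads for illustration.
    records = [
        {"equipment_line": "turbine-A", "doc_date": "2024-03-15"},
        {"equipment_line": "turbine-B", "doc_date": "2024-03-15"},
        {"equipment_line": "turbine-A", "doc_date": "2023-11-02"},
    ]
    kept = [r for r in records if matches(r, "turbine-A", "2024-01-01", "2024-12-31")]
    print(kept)
```

As in build_filter, passing only doc_date_to without doc_date applies no date restriction at all, which is worth knowing before relying on an open-ended range.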

Step 5: Connect the local LLM

The search results feed into a local LLM running via Ollama, which generates a cited answer in plain English. The entire round trip runs on factory-floor hardware.

Create src/llm.py:

from __future__ import annotations

import json
import os
import sys
import urllib.request
from typing import Any

OLLAMA_HOST = os.environ.get("OLLAMA_HOST", "http://localhost:11434")
OLLAMA_MODEL = "llama3.2:3b"
MAX_NEW_TOKENS = 256
TEMPERATURE = 0.1
TIMEOUT_SECONDS = 300


def build_prompt(question: str, results: list[dict[str, Any]]) -> str:
    """Assemble a prompt that numbers each retrieved chunk so the model can cite it."""
    if not results:
        return f"Question: {question}\n\nAnswer: I have no relevant context to answer this question."

    context_blocks = []
    for i, r in enumerate(results, 1):
        source = r.get("source_file", "unknown")
        date = r.get("doc_date", "unknown")
        equip = r.get("equipment_line", "unknown")
        text = r.get("text", "").strip()
        context_blocks.append(
            f"[{i}] Source: {source} | Equipment: {equip} | Date: {date}\n{text}"
        )
    context = "\n\n".join(context_blocks)

    return (
        "You are a maintenance records assistant. "
        "Answer the question using ONLY the provided context. "
        "Cite sources inline using [1], [2], etc. "
        "If the context does not contain enough information, say so.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\n\n"
        "Answer:"
    )


def generate(question: str, results: list[dict[str, Any]]) -> str:
    """Send the prompt to the local Ollama server and return the generated text."""
    prompt = build_prompt(question, results)
    payload = json.dumps({
        "model": OLLAMA_MODEL,
        "prompt": prompt,
        "stream": False,
        "options": {
            "num_predict": MAX_NEW_TOKENS,
            "temperature": TEMPERATURE,
        },
    }).encode()

    req = urllib.request.Request(
        f"{OLLAMA_HOST}/api/generate",
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(req, timeout=TIMEOUT_SECONDS) as resp:
        body = json.loads(resp.read().decode())
    return body["response"].strip()


def answer(question: str, results: list[dict[str, Any]]) -> str:
    """Print the generated answer followed by the list of sources it was given."""
    reply = generate(question, results)
    print(reply)
    print()
    print("Sources")
    for i, r in enumerate(results, 1):
        print(
            f" [{i}] {r.get('source_file', '?')} "
            f"(chunk {r.get('chunk_index', '?')}, score {r.get('score', 0):.4f}) "
            f"{r.get('doc_date', '?')} / {r.get('equipment_line', '?')}"
        )
    return reply


if __name__ == "__main__":
    # Standalone smoke test with a hard-coded example chunk.
    question = sys.argv[1] if len(sys.argv) > 1 else "What maintenance was performed?"
    dummy_results = [
        {
            "source_file": "example.pdf",
            "doc_date": "2024-03-15",
            "equipment_line": "turbine-A",
            "chunk_index": 0,
            "score": 0.95,
            "text": (
                "Performed scheduled bearing inspection on turbine-A. "
                "Replaced worn bearing race on shaft 2. "
                "Torque settings verified per spec TRB-004."
            ),
        }
    ]
    answer(question, dummy_results)

Wire everything together by creating src/test_e2e.py:

from query import search
from llm import answer

question = "What maintenance was performed on the gearbox?"
results = search(question, equipment_line="turbine-A")
answer(question, results)

Run the full pipeline:

uv run python src/test_e2e.py

llama3.2:3b fits in the memory of a standard factory edge server. The LLM receives only the retrieved chunks as context, not the full document collection, which keeps responses fast and grounded in cited sources.
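If the end-to-end run fails at the generation step, a common cause is that Ollama is not running or the model was never pulled. The sketch below checks both by reading Ollama's /api/tags endpoint, which lists locally available models. The helper names are hypothetical, and the response shape (a "models" list whose entries have a "name" field) is assumed from Ollama's API documentation.

```python
import json
import urllib.request

OLLAMA_HOST = "http://localhost:11434"  # default address, as in llm.py


def model_in_tags(tags: dict, model: str) -> bool:
    """True when `model` appears in a parsed /api/tags response."""
    return any(m.get("name") == model for m in tags.get("models", []))


def model_is_pulled(model: str, host: str = OLLAMA_HOST, timeout: float = 5.0) -> bool:
    """Ask the local Ollama server whether `model` is available; False if unreachable."""
    try:
        with urllib.request.urlopen(f"{host}/api/tags", timeout=timeout) as resp:
            return model_in_tags(json.loads(resp.read().decode()), model)
    except OSError:  # covers connection refused, timeouts, and URLError
        return False


if __name__ == "__main__":
    ok = model_is_pulled("llama3.2:3b")
    print("llama3.2:3b available" if ok else "Ollama unreachable or model not pulled")
```

The check talks only to localhost, so it works on a machine with no internet connection once the model has been pulled.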

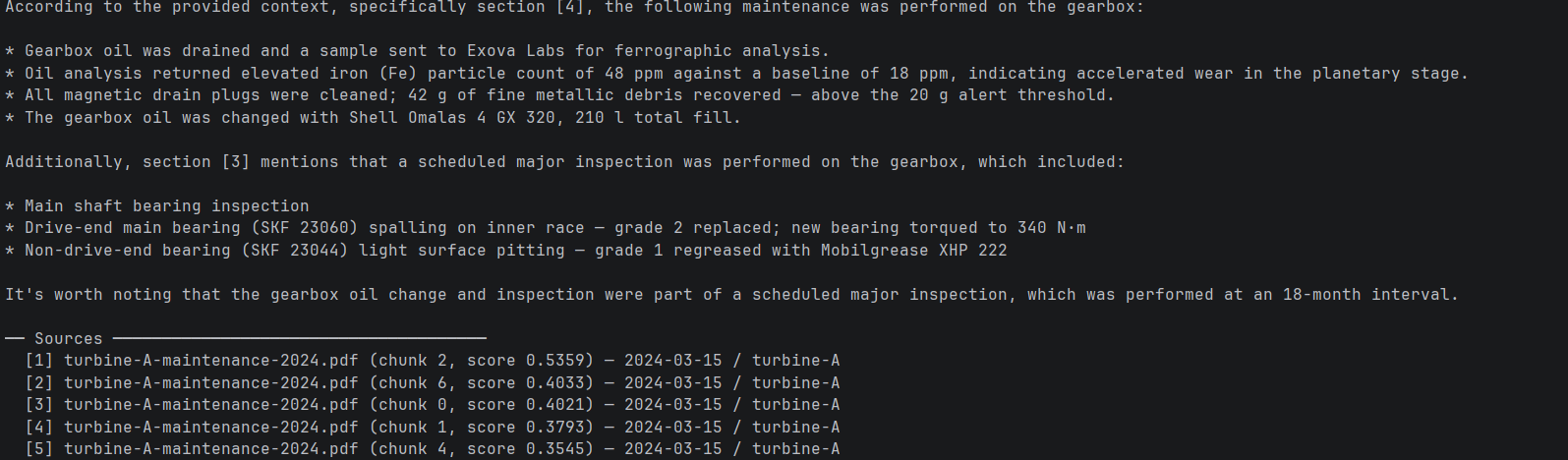

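The output is the generated answer, followed by a Sources list that gives the file, chunk index, score, date, and equipment line for each retrieved chunk.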

The pipeline is fully up and running. A technician can ask a question, get a cited answer from local maintenance records, and never need to use the internet.

Step 6: Add audit logging

IEC 62443 requires full traceability for every operation within the OT network. Without a local audit trail, your pipeline has no record of what was queried, when, or what it returned.

Create src/audit.py, the module that ingest.py and query.py import their logging functions from:

from __future__ import annotations

import json

import logging

from datetime import datetime, timezone

from pathlib import Path

LOG_PATH = Path("./data/audit.log")

LOG_PATH.parent.mkdir(parents=True, exist_ok=True)

handler = logging.FileHandler(str(LOG_PATH))

handler.setLevel(logging.INFO)

logger = logging.getLogger("actian_vectorai.audit")

logger.setLevel(logging.INFO)

logger.addHandler(handler)

def log_query(question: str, equipment_line: str, results: list, latency_ms: float) -> None:

entry = {

"event": "query",

"timestamp": datetime.now(tz=timezone.utc).isoformat(),

"question": question,

"equipment_line": equipment_line,

"results_returned": len(results),

"latency_ms": round(latency_ms, 2),

}

logger.info(json.dumps(entry))

def log_ingestion(source_file: str, equipment_line: str, chunks_stored: int) -> None:

entry = {

"event": "ingestion",

"timestamp": datetime.now(tz=timezone.utc).isoformat(),

"source_file": source_file,

"equipment_line": equipment_line,

"chunks_stored": chunks_stored,

}

logger.info(json.dumps(entry))Run the audit script with this command:

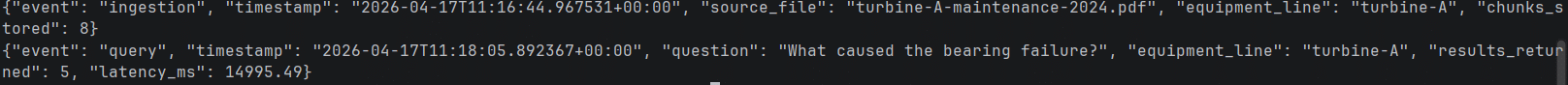

cat data/audit.logExpected output:

The pipeline now keeps a structured record of every ingestion and query event in ./data/audit.log, timestamped in UTC and stored inside your security boundary.
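Because each log line is a standalone JSON object, the audit trail is easy to summarize with a few lines of Python. The sketch below counts events by type and averages query latency. summarize_audit is a hypothetical helper, and it assumes every non-empty line has the fields written by log_query and log_ingestion.

```python
import json
from collections import Counter


def summarize_audit(lines):
    """Return (event counts, average query latency in ms or None) for JSON-lines input."""
    counts = Counter()
    latencies = []
    for line in lines:
        line = line.strip()
        if not line:
            continue  # tolerate blank lines
        entry = json.loads(line)
        counts[entry["event"]] += 1
        if entry["event"] == "query":
            latencies.append(entry["latency_ms"])
    average = sum(latencies) / len(latencies) if latencies else None
    return dict(counts), average
```

To run it against the real log, open the file and pass the handle in, for example summarize_audit(open("data/audit.log")). The file never leaves the machine, so the summary stays inside the security boundary as well.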

Wrapping Up

You just built a local RAG pipeline that runs entirely on factory-floor hardware, serves queries during network outages, and returns cited answers from decades of maintenance records.

AI in manufacturing can operate without a cloud connection. VectorAI DB enables this by running entirely within the IEC 62443 security boundary. Cut the internet connection, and the pipeline keeps working.

Your pipeline ingests PDF maintenance documents, stores embeddings in VectorAI DB at Level 2 of your OT network, and answers natural-language questions using a local LLM with no cloud dependency at any step. From here, you can extend the pipeline by adding more document types, tuning the embedding model for your specific equipment vocabulary, adding role-based query filtering by technician, or scaling ingestion across multiple equipment lines.

Find the full VectorAI DB documentation and the GitHub repository to explore further.

Join the community and learn more about Actian.