What is a Data Observability Platform?

Summary

- Data observability platforms monitor and optimize data pipelines.

- Provide proactive anomaly detection, lineage, and root cause analysis.

- Improve data quality, reliability, and decision-making confidence.

- Integrate across data stacks without accessing raw data.

- Enable faster issue resolution and reduced data downtime.

A data observability platform is a comprehensive solution designed to monitor, troubleshoot, and optimize data pipelines and systems. Much as application performance monitoring (APM) tools oversee software health, a data observability platform oversees the health of the data itself, giving organizations confidence in the quality of their data.

How Data Observability Platforms Differ From Traditional Monitoring Tools

Traditional data monitoring tools are often limited in scope. They might check for basic metrics like latency or uptime, but they don’t offer a comprehensive view of the entire data ecosystem. In contrast, a data observability platform provides deep visibility into the state of data across the pipeline, covering ingestion, transformation, storage, and delivery.

Data observability platforms are proactive, not reactive. Rather than only sending alerts when something breaks, they identify anomalies, trace the root cause of issues, and can predict potential failures using AI and historical patterns. This automated, end-to-end approach lets teams catch problems that metric-only legacy tools tend to miss.

The Importance of Data Observability

Here are some of the reasons these platforms matter to modern organizations.

Enhancing Data Quality and Reliability

High-quality data is essential for analytics, machine learning, and daily business operations. Data observability platforms continuously monitor for:

- Schema changes

- Null values

- Outliers

- Broken pipelines

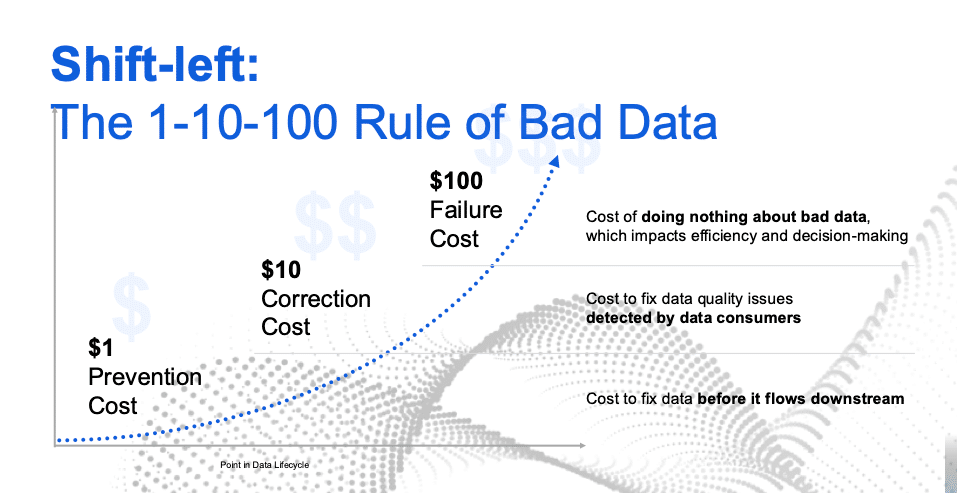

This helps ensure that any deviations from expected behavior are detected early, before data moves downstream. The platforms safeguard data integrity and help teams maintain reliable data environments.
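The checks listed above can be sketched in a few lines of Python. This is a minimal illustration, not how any particular platform implements them: the `EXPECTED_SCHEMA` table layout, the function names, and the 3.5 cutoff for the median-based outlier score are all assumptions made for the example.

```python
from statistics import median

# Hypothetical expected columns for an "orders" table (illustrative only).
EXPECTED_SCHEMA = {"order_id", "amount", "country"}

def schema_changes(rows, expected=EXPECTED_SCHEMA):
    """Return columns added or removed relative to the expected schema."""
    seen = set()
    for row in rows:
        seen.update(row.keys())
    return {"added": sorted(seen - expected), "removed": sorted(expected - seen)}

def null_rate(rows, column):
    """Fraction of rows where the column is missing or None."""
    if not rows:
        return 0.0
    nulls = sum(1 for row in rows if row.get(column) is None)
    return nulls / len(rows)

def outliers(values, threshold=3.5):
    """Flag values whose modified z-score (median and MAD based) exceeds the threshold."""
    med = median(values)
    mad = median(abs(v - med) for v in values)
    if mad == 0:
        return []  # no spread to measure against, so nothing is flagged
    return [v for v in values if 0.6745 * abs(v - med) / mad > threshold]

# Example batch with a new column, a null, and an unusually large amount.
rows = [
    {"order_id": 1, "amount": 10.0, "country": "US"},
    {"order_id": 2, "amount": 12.0, "country": None},
    {"order_id": 3, "amount": 11.0, "country": "DE", "coupon": "X"},
    {"order_id": 4, "amount": 950.0, "country": "FR"},
]
print(schema_changes(rows))
print(null_rate(rows, "country"))
print(outliers([row["amount"] for row in rows]))
```

A median-based score is used here because a single extreme value can inflate a mean and standard deviation enough to hide itself in a small batch.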

Supporting Data-Driven Decision Making

Organizations increasingly rely on data to drive strategic decisions. If the data feeding dashboards or machine learning models is flawed, the results can lead to costly mistakes and erode trust in data. With a data observability platform in place, teams gain confidence in the data they use, which directly supports smarter, faster decision-making. In turn, the organization can expect better outcomes from those decisions and predictions.

Key Features of Data Observability Platforms

Every data observability platform has its own proprietary capabilities and add-ons, but organizations can expect a few core features from any good one.

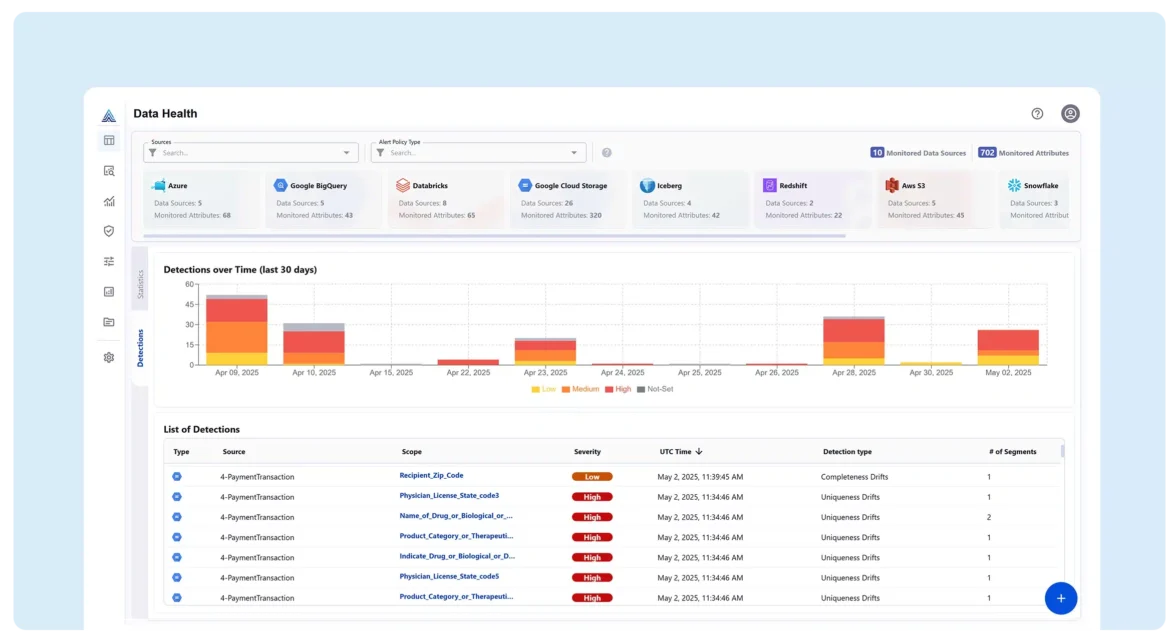

Real-Time Monitoring and Alerts

Real-time insights are a hallmark of any modern data observability platform. These systems provide instant alerts when anomalies are detected, enabling teams to respond before the issue cascades downstream. This capability reduces data downtime and minimizes disruption to business processes.
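As a rough sketch of how alerting on monitored metrics might work, the example below evaluates a set of threshold rules against the latest observations. The `Rule` class, the metric names, and the limits are hypothetical; real platforms typically add routing, deduplication, and learned thresholds on top of something like this.

```python
from dataclasses import dataclass

@dataclass
class Rule:
    metric: str       # name of the monitored metric, e.g. "null_rate"
    max_value: float  # alert when the observed value exceeds this limit
    severity: str     # free-form label such as "warning" or "critical"

def evaluate(rules, observations):
    """Return one alert per rule whose observed metric exceeds its limit."""
    alerts = []
    for rule in rules:
        value = observations.get(rule.metric)
        if value is not None and value > rule.max_value:
            alerts.append(
                {"metric": rule.metric, "value": value, "severity": rule.severity}
            )
    return alerts

# Hypothetical rules and a single snapshot of observed metrics.
rules = [
    Rule("null_rate", 0.05, "warning"),
    Rule("freshness_minutes", 60, "critical"),
]
observations = {"null_rate": 0.2, "freshness_minutes": 30}
for alert in evaluate(rules, observations):
    print(alert)
```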

Data Lineage and Impact Analysis

Understanding where data comes from, how it’s transformed, and where it’s consumed is critical. Data observability platforms offer data lineage visualization, allowing teams to trace the origin and flow of data across the system. When issues arise, they can quickly identify which datasets or dashboards are affected.
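Impact analysis over lineage can be thought of as a graph traversal. The sketch below assumes lineage is available as a simple mapping from each asset to the assets that read from it; the asset names and the `downstream_assets` helper are made up for illustration.

```python
from collections import deque

# Hypothetical lineage graph: each asset maps to the assets that read from it.
LINEAGE = {
    "raw.orders": ["staging.orders"],
    "raw.customers": ["staging.customers"],
    "staging.orders": ["marts.revenue"],
    "staging.customers": ["marts.revenue", "marts.churn"],
    "marts.revenue": ["dashboard.finance"],
    "marts.churn": [],
    "dashboard.finance": [],
}

def downstream_assets(graph, start):
    """Breadth-first traversal returning every asset that depends on `start`."""
    affected = []
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for child in graph.get(node, []):
            if child not in seen:
                seen.add(child)
                affected.append(child)
                queue.append(child)
    return affected

# Which datasets and dashboards are affected if staging.customers breaks?
print(downstream_assets(LINEAGE, "staging.customers"))
```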

Integration With the Existing Data Infrastructure

No two organizations have identical data stacks. A good data observability platform integrates seamlessly with other infrastructure elements, minimizing friction during deployment. This typically includes integration with:

- Popular extract, load, and transform (ELT) tools.

- Cloud data warehouses.

- Business intelligence (BI) tools.

- Data lakes.

System integration ensures that data observability becomes an extension of the organization’s existing data ecosystem, not a siloed solution.

How Data Observability Platforms Work

The specifics vary by platform, but most follow the same general steps, regardless of their additional bells and whistles.

Data Collection and Analysis

The platform begins by collecting metadata, logs, metrics, and query histories from various sources in the data stack. This non-invasive approach means the platform doesn’t require direct access to raw data. It then applies machine learning algorithms and heuristic models to analyze patterns, detect anomalies, and predict potential failures.
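To make the idea of metadata-only analysis concrete, here is a minimal sketch that flags an unusual daily row count using a z-score against recent history. It stands in for the machine learning and heuristic models mentioned above and is not any vendor's actual method; the function name, the threshold of 3, and the sample counts are assumptions.

```python
from statistics import mean, stdev

def is_anomalous(history, latest, z_threshold=3.0):
    """Return True if `latest` is more than z_threshold standard deviations from the historical mean."""
    if len(history) < 2:
        return False  # not enough history to estimate spread
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return latest != mu
    return abs(latest - mu) / sigma > z_threshold

# Hypothetical daily row counts taken from warehouse metadata, not raw data.
row_counts = [1000, 1020, 980, 1010, 990]
print(is_anomalous(row_counts, 400))
print(is_anomalous(row_counts, 1005))
```

Only table-level metadata (row counts) is inspected here, which mirrors the non-invasive approach of working without access to the underlying records.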

Identifying and Resolving Data Issues

Once an issue is detected, the platform performs root cause analysis to help teams understand what went wrong and where. Whether it’s a broken transformation job, a schema mismatch, or unexpected values, users can take immediate corrective action, often directly from the platform interface.
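One simple way to picture root cause analysis is to walk upstream from a failing asset until reaching failing assets whose own inputs are healthy. The sketch below assumes an upstream dependency map and a set of assets currently failing checks; both, along with the `root_causes` helper, are hypothetical.

```python
# Hypothetical upstream map: each asset maps to the assets it reads from.
UPSTREAM = {
    "dashboard.finance": ["marts.revenue"],
    "marts.revenue": ["staging.orders", "staging.customers"],
    "staging.orders": ["raw.orders"],
    "staging.customers": ["raw.customers"],
    "raw.orders": [],
    "raw.customers": [],
}

def root_causes(upstream, failing, start):
    """Return failing assets reachable from `start` that have no failing parents."""
    roots = []
    seen = set()
    stack = [start]
    while stack:
        node = stack.pop()
        if node in seen or node not in failing:
            continue
        seen.add(node)
        failing_parents = [p for p in upstream.get(node, []) if p in failing]
        if failing_parents:
            stack.extend(failing_parents)  # keep walking toward the source
        else:
            roots.append(node)  # failing, but all of its inputs look healthy
    return roots

# Assets currently failing their checks (illustrative).
failing = {"dashboard.finance", "marts.revenue", "staging.orders"}
print(root_causes(UPSTREAM, failing, "dashboard.finance"))
```

In this example the broken dashboard traces back to `staging.orders`, which suggests the transformation that builds it is the place to start investigating.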

Benefits of Data Observability Platforms

Organizations that use data observability platforms experience a wide range of benefits. These platforms help companies maintain good data governance practices, make better business decisions, and reduce the time it takes to resolve data quality issues.

Improved Operational Efficiency

By automating the detection and resolution of data issues, teams can spend less time firefighting and more time on value-added tasks. This leads to faster delivery cycles, better resource allocation, and increased productivity across data engineering, analytics, and operations teams.

Reduced Data Downtime

Data downtime, which occurs when data is missing, delayed, or incorrect, can paralyze decision-making. Data observability platforms reduce downtime by catching issues early and speeding up resolution, often before business users are even aware of a problem.
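Delayed data is one form of downtime that is easy to check for. The sketch below assumes each table has a last-updated timestamp and an expected refresh interval, and it sums downtime across incidents; the function names, the 15-minute grace period, and the sample timestamps are illustrative assumptions.

```python
from datetime import datetime, timedelta

def is_stale(last_updated, now, expected_interval, grace=timedelta(minutes=15)):
    """True if the table has not refreshed within its expected interval plus a grace period."""
    return now - last_updated > expected_interval + grace

def total_downtime(incidents):
    """Sum the detected-to-resolved duration across (detected, resolved) pairs."""
    return sum((resolved - detected for detected, resolved in incidents), timedelta())

# Hypothetical hourly table that last refreshed three hours ago.
now = datetime(2024, 1, 1, 12, 0)
print(is_stale(datetime(2024, 1, 1, 9, 0), now, timedelta(hours=1)))

# Hypothetical incident log for one day.
incidents = [
    (datetime(2024, 1, 1, 9, 0), datetime(2024, 1, 1, 9, 30)),
    (datetime(2024, 1, 1, 14, 0), datetime(2024, 1, 1, 15, 15)),
]
print(total_downtime(incidents))
```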

Enhanced Collaboration Across Teams

Observability platforms often include shared dashboards, alert capabilities, and audit trails, promoting transparency across data teams. This fosters a culture of collaboration and accountability, enabling engineering, analytics, and business stakeholders to work together more effectively.

Choosing the Right Data Observability Platform

Selecting the right platform depends on several factors:

- Scalability: Can it handle the organization’s volume and velocity of data?

- Ease of integration: Does it fit within the organization’s existing architecture?

- Customizability: Does the platform allow users to tailor alerts, thresholds, and metrics?

- User interface: Is it intuitive for both technical and non-technical users?

- Support and community: Is there a strong network of users and resources?

Look for vendors that offer hands-on demos, free trials, and reference customers in similar industries to guide the buying decision.

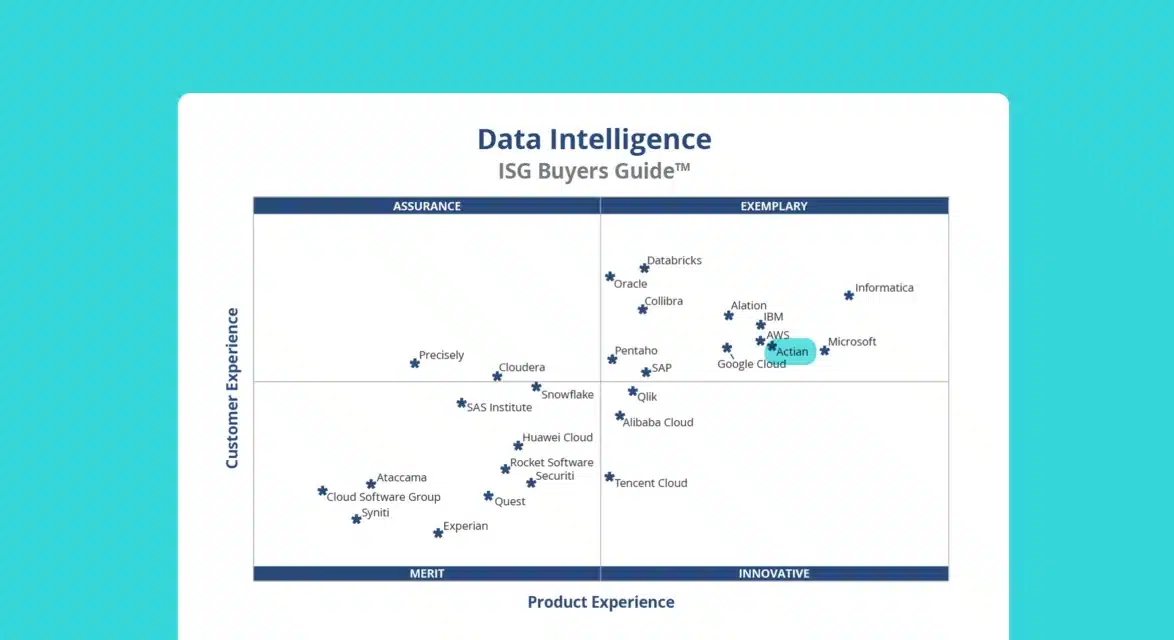

What to Expect With Data Observability Solutions Going Forward

Data observability is a growing market, and many companies are beginning to build comprehensive platforms and related tools. Below is a brief list of what to expect in the coming years as the need for faster, more accurate data observability becomes more pressing.

Possible Future Innovations

The field of data observability is evolving rapidly. Some emerging trends include:

- Automated remediation: Platforms that not only detect problems but fix them autonomously.

- Expanded coverage: Observability extending beyond data pipelines to include governance, compliance, and usage metrics.

- Unified observability: Consolidating monitoring across data, applications, and infrastructure into a single pane of glass.

More AI and ML to Offset Manual Workloads

AI and machine learning are at the core of next-gen observability platforms. These technologies enable the platform to learn from historical incidents, detect complex anomalies, and forecast potential failures, often more accurately than manual rules or static thresholds. As these models mature, observability will become more predictive than reactive, changing how organizations manage data quality.

Data Observability Platform FAQs

Get the answers to some of the most frequently asked questions about data observability platforms:

Is data observability the same as data quality monitoring?

Not exactly. Data quality monitoring is a component of data observability. While data quality focuses on the condition of the data itself, such as accuracy and completeness, observability also covers pipeline health, infrastructure, lineage, and user impact.

Do I need a separate team to manage a data observability platform?

Not necessarily. Many modern platforms are built to be self-service, with interfaces accessible to data engineers, analysts, and business users. When choosing a data observability platform, consider how intuitive its interface is and whether it has a support community that can provide tips and troubleshooting help.

Can a data observability platform integrate with a cloud data warehouse?

Yes, leading platforms offer native integrations with cloud data warehouses, ETL and ELT tools, orchestration frameworks, and BI tools. Always confirm compatibility during the evaluation process.

How long does it take to implement a data observability platform?

Depending on the complexity of the data environment, implementation can take anywhere from a few days to several weeks. Most vendors provide onboarding support and customer success teams to guide the rollout. Some vendors, like Actian, offer personalized demonstrations to familiarize users with the platform’s features.

Take a Tour of Actian Data Observability

Actian Data Observability offers enterprise-grade observability with real-time monitoring, lineage tracking, and insights powered by machine learning. It can identify and fix data issues before they impact apps, AI models, or other use cases.

Take a self-guided tour today and discover how Actian Data Observability can help organizations ensure data trust and build reliable AI-ready data products with full visibility and confidence.