It’s Back to the Future for Flat Files – Part 1

Why Embedded Software Application Developers Adopted Flat Files

Recently, my colleague here in Actian Product Marketing, Pradeep Bhanot, wrote a great blog on data historians in which he called for their retirement in favor of more modern databases to support time-series data processing and analytics. But, in some ways, historians are not as historic as one of the most entrenched embedded data management solutions: the flat file. In fact, I suspect that the use of flat files is far more prevalent than the use of databases or historians as a means of embedded data management. It’s hard to prove because analysts don’t track flat files as a separate category of data management solutions the way they do databases or, say, cloud data warehouses. But the reality is that they are out there; we encounter customers in our installed base, as well as prospects, who are actively using flat files, and not just in their older designs.

Why Did We See All the Flat File Adoption in the First Place?

If you’re a developer writing code to collect operational technology (OT) data from sensors and other edge systems, you’re probably writing it in C, C++, C# or some other programming language that gives you direct access to data ingested from devices. Back in the old days when I was an engineer, for example, that access came through inp() and outp() statements (or, to really date myself, through a series of registers addressed in assembly; yikes, I think I’m experiencing PTSD). You quickly find that you need somewhere and some way to store the data more permanently than the temporary memory allocated within your program. The path of least resistance is a file. After all, it’s the simplest approach, and almost everyone who takes a programming class or teaches themselves to program can use the file system.
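To make that path of least resistance concrete, here is a minimal sketch in C of what such code often looks like. Everything in it is an assumption for illustration: the `reading_t` layout, the comma-separated line format, and the function names `append_reading` and `count_readings` are hypothetical and not taken from any particular product or codebase.

```c
#include <stdio.h>

/* Hypothetical record layout: one sensor sample per line of a CSV flat file. */
typedef struct {
    long   timestamp_s;  /* seconds since an epoch chosen by the application */
    int    sensor_id;    /* which sensor produced the sample */
    double value;        /* the measured value */
} reading_t;

/* Append one reading as "timestamp,sensor_id,value\n".
 * Returns 0 on success, -1 if the file cannot be opened or written. */
int append_reading(const char *path, const reading_t *r)
{
    FILE *f = fopen(path, "a");  /* "a" creates the file if it is missing */
    if (f == NULL) {
        return -1;
    }
    int ok = fprintf(f, "%ld,%d,%.3f\n",
                     r->timestamp_s, r->sensor_id, r->value) > 0;
    if (fclose(f) != 0) {        /* buffered data is flushed on close */
        ok = 0;
    }
    return ok ? 0 : -1;
}

/* Read the file back and count well-formed records.
 * Returns -1 if the file cannot be opened. */
long count_readings(const char *path)
{
    FILE *f = fopen(path, "r");
    if (f == NULL) {
        return -1;
    }
    reading_t r;
    long n = 0;
    while (fscanf(f, "%ld,%d,%lf",
                  &r.timestamp_s, &r.sensor_id, &r.value) == 3) {
        n++;
    }
    fclose(f);
    return n;
}
```

A caller would fill in a `reading_t` from whatever the device hands back and call `append_reading("readings.csv", &r)` once per sample. Note what the sketch leaves out: there is no schema, no index, no locking, and no way for anyone else to query the data short of parsing the file themselves.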

Flat Files Were “Good Enough” for Traditional Embedded Data Management

While the above explains why flat files were likely to be adopted, it doesn’t explain why they were a good solution for the times. Let me give you three key reasons they were good enough:

1. The Silo of Things Meant All Data Collection Was Local

File systems store data locally, which was more than sufficient for most embedded data applications at the edge because they were purely for local use. There wasn’t a need to bring in additional data from parallel data streams, let alone fuse it with other types of data and share it across networks. Thus, stand-alone file systems without network data transfer were good enough. The lack of support for streaming data or extract, transform, and load (ETL) to some other system was not a showstopper.

2. There Wasn’t That Much Data, Data Processing, or Analytics

Until recently, most operational technology had very limited compute resources: 32-bit or 16-bit microcontrollers, sub-MB DRAM, limited flash or EPROM memory, and so on. If you don’t know these terms, put it this way: these are your father’s Oldsmobile. With limited resources available, most software was there to perform direct control of the device against a specific process, and the data collected was mostly in support of that process, not for instrumenting the process or for analytics to inform its current or future operations.

3. It’s My Data, I’m the Only One Using it, so Buzz Off

Software development specs? Comments, who needs comments? OT developers were often the only parties using the software they developed, and the data generated by their code was generally seen only by them and possibly a few experts in test validation on one end and service and support on the other. Because the data was generated by them and for them, the need to share that data with a business analyst or data scientist back at headquarters, let alone a line of business, would have seemed a bit far-fetched. Traditional IT and cybersecurity professionals in the data center would not be asked, nor feel the need, to force themselves into these projects.

Respect the Legacy, but Move Towards the Future

I get it; I used to be one of these OT engineers myself, as I alluded to above. If you’re a software developer, there are some advantages to starting with file systems. But there is a need for change, driven by today’s increasingly hyperconnected world for edge devices (aka IoT), by far more compute resources (heck, I can get a Raspberry Pi for less than a fancy real pie), and by the need to share data to drive business agility and innovation and to make OT more responsive and less expensive. In the next installment in this series, we’ll talk about why OT software developers are loath to let go of their flat file systems and move to modern edge data management systems.

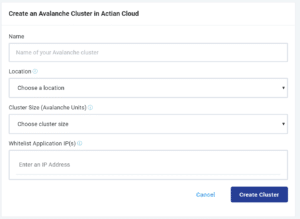

Actian is the industry leader in operational data warehouse and edge data management solutions for modern businesses. With a complete set of solutions to help you manage data on-premises, in the cloud, and at the edge with mobile and IoT, Actian can help you develop the technical foundation needed to support true business agility. To learn more, visit www.actian.com.