Streamlining the Chaos: Conquering Manufacturing With Data

Kasey Nolan

July 2, 2024

The Complexity of Modern Manufacturing

Manufacturing today is far from the straightforward assembly lines of the past; it is chaos incarnate. Each stage in the manufacturing process comes with its own set of data points. Raw materials, production schedules, machine operations, quality control, and logistics all generate vast amounts of data, and managing this data effectively can be the difference between smooth operations and a breakdown in the process.

Data integration is a powerful way to conquer the chaos of modern manufacturing. It’s the process of combining data from diverse sources into a unified view, providing a holistic picture of the entire manufacturing process. This involves collecting data from various systems, such as Enterprise Resource Planning (ERP) systems, Manufacturing Execution Systems (MES), and Internet of Things (IoT) devices. When this data is integrated and analyzed cohesively, it can lead to significant improvements in efficiency, decision-making, and overall productivity.

The Power of a Unified Data Platform

A robust data platform is essential for effective data integration and should encompass analytics, data warehousing, and seamless integration capabilities. Let’s break down these components and see how they contribute to conquering the manufacturing chaos.

1. Analytics: Turning Data into Insights

Data without analysis is like raw material without a blueprint. Advanced analytics tools can sift through the vast amounts of data generated in manufacturing, identifying patterns and trends that might otherwise go unnoticed. Predictive analytics, for example, can forecast equipment failures before they happen, allowing for proactive maintenance and reducing downtime.
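As a rough illustration of the idea, the sketch below flags sensor readings that deviate sharply from their recent history, which is one simple early-warning signal for maintenance. It is a minimal sketch, not how any particular analytics product works: the function name `flag_anomalies`, the window size, the threshold, and the sample vibration values are all assumptions made for illustration.

```python
from statistics import mean, stdev

def flag_anomalies(readings, window=5, threshold=3.0):
    """Return indices of readings that deviate strongly from the trailing window.

    A reading is flagged when it lies more than `threshold` sample standard
    deviations from the mean of the previous `window` readings.
    """
    flagged = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        # Skip perfectly flat history to avoid comparing against zero spread.
        if sigma > 0 and abs(readings[i] - mu) > threshold * sigma:
            flagged.append(i)
    return flagged

# Hypothetical vibration readings (mm/s) from a single machine.
vibration_mm_s = [2.1, 2.0, 2.2, 2.1, 2.0, 2.1, 2.2, 4.8, 2.1, 2.0]
anomalies = flag_anomalies(vibration_mm_s)
print(anomalies)
```

In practice a production system would likely use a trained model and many signals per machine; the trailing-window check here only shows the shape of the problem.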

Analytics can also optimize production schedules by analyzing historical data and predicting future demand. This ensures that resources are allocated efficiently, minimizing waste and maximizing output. Additionally, quality control can be enhanced by analyzing data from different stages of the production process, identifying defects early, and implementing corrective measures.

2. Data Warehousing: A Central Repository

A data warehouse serves as a central repository where integrated data is stored. This centralized approach ensures that all relevant data is easily accessible, enabling comprehensive analysis and reporting. In manufacturing, a data warehouse can consolidate information from various departments, providing a single source of truth.

For instance, production data, inventory levels, and sales forecasts can be stored in the data warehouse. This unified view allows manufacturers to make informed decisions based on real-time data. If there’s a sudden spike in demand, the data warehouse can provide insights into inventory levels, production capacity, and lead times, enabling quick adjustments to meet the demand.
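The demand-spike scenario can be sketched as a small calculation over data that a warehouse would bring together. This is a simplified illustration under stated assumptions: the `ProductSnapshot` fields, the `shortfall` function, and the sample numbers are hypothetical, and the model assumes production output only becomes available after the lead time has elapsed.

```python
from dataclasses import dataclass

@dataclass
class ProductSnapshot:
    """Hypothetical per-product record consolidated from several systems."""
    sku: str
    on_hand_units: int
    daily_capacity_units: int
    lead_time_days: int

def shortfall(snapshot, demand_units, days_until_due):
    """Units that cannot be supplied by the due date (0 if demand is covered)."""
    # Only days remaining after the lead time contribute new output.
    producing_days = max(days_until_due - snapshot.lead_time_days, 0)
    available = snapshot.on_hand_units + producing_days * snapshot.daily_capacity_units
    return max(demand_units - available, 0)

snap = ProductSnapshot("WIDGET-A", on_hand_units=500,
                       daily_capacity_units=200, lead_time_days=2)
gap = shortfall(snap, demand_units=1500, days_until_due=5)
print(gap)
```

A positive result signals that the order cannot be met from current stock and capacity alone, which is the point at which a planner might add shifts or renegotiate the due date.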

3. Integration: Bridging the Gaps

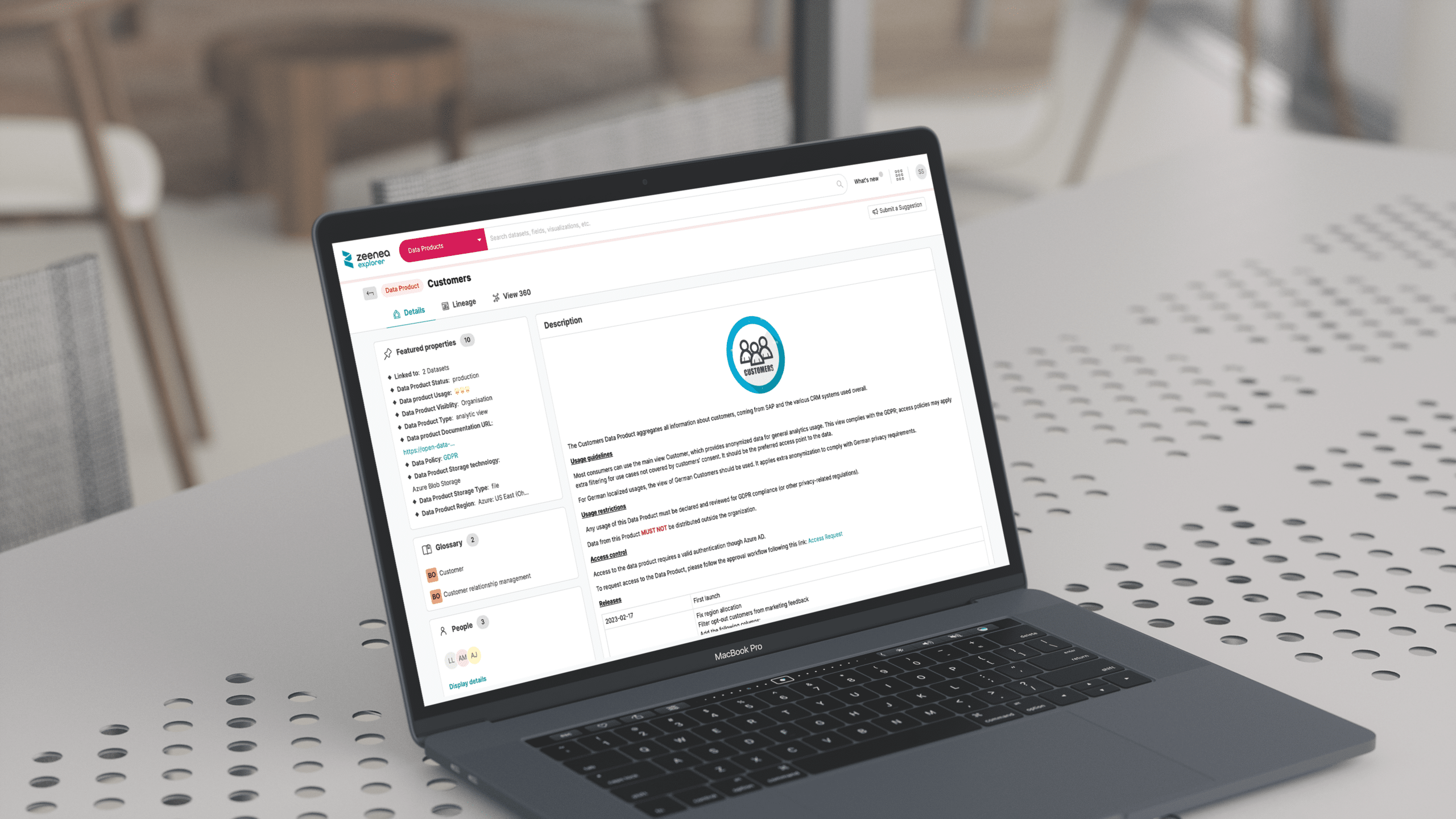

Integration is the linchpin that holds everything together. It involves connecting various data sources and ensuring data flows seamlessly between them. In a manufacturing setting, integration can connect systems like ERP, MES, and Customer Relationship Management (CRM), creating a cohesive data ecosystem.

For example, integrating ERP and MES systems can provide a real-time view of production status, inventory levels, and order fulfillment. This integration eliminates data silos, ensuring that everyone in the organization has access to the same accurate information. It also streamlines workflows, as data doesn’t need to be manually transferred between systems, reducing the risk of errors and saving time.
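To make the ERP and MES example concrete, the sketch below joins order records from one system with production progress from another on a shared order ID. It is a minimal sketch under assumptions: the record layouts, field names, and the `merge_order_status` helper are invented for illustration and do not reflect any specific ERP or MES schema.

```python
# Hypothetical extracts: orders from an ERP system, progress from an MES.
erp_orders = [
    {"order_id": "SO-1001", "sku": "WIDGET-A", "qty_ordered": 100},
    {"order_id": "SO-1002", "sku": "WIDGET-B", "qty_ordered": 50},
]
mes_progress = [
    {"order_id": "SO-1001", "qty_completed": 60},
]

def merge_order_status(orders, progress):
    """Combine ERP orders with MES progress into one status row per order."""
    completed = {row["order_id"]: row["qty_completed"] for row in progress}
    merged = []
    for order in orders:
        # Orders with no MES record yet are treated as not started.
        done = completed.get(order["order_id"], 0)
        merged.append({
            **order,
            "qty_completed": done,
            "qty_remaining": order["qty_ordered"] - done,
        })
    return merged

status = merge_order_status(erp_orders, mes_progress)
for row in status:
    print(row)
```

Keeping every ERP order in the result, even when the MES has no matching record, is a deliberate choice here: it surfaces orders that have not started rather than silently dropping them.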

Case Study: Aeriz

Aeriz is a national aeroponic cannabis brand that provides patients and enthusiasts with the purest tasting, burning, and feeling cultivated cannabis. They needed to be able to connect, manage, and analyze data from several systems, both on-premises and in the cloud, and access data that was not easy to gather from their primary tracking system.

By leveraging the Actian Data Platform, Aeriz was able to access data that wasn’t part of the canned reports provided by their third-party vendors. They were able to easily aggregate this data with Salesforce to improve inventory visibility and accelerate their order-to-cash timeline.

The result was an 80% reduction in the time a full-time employee spent locating and aggregating data for business reporting. Aeriz can now focus resources on analyzing data to find improvements and efficiencies to accommodate rapid growth.

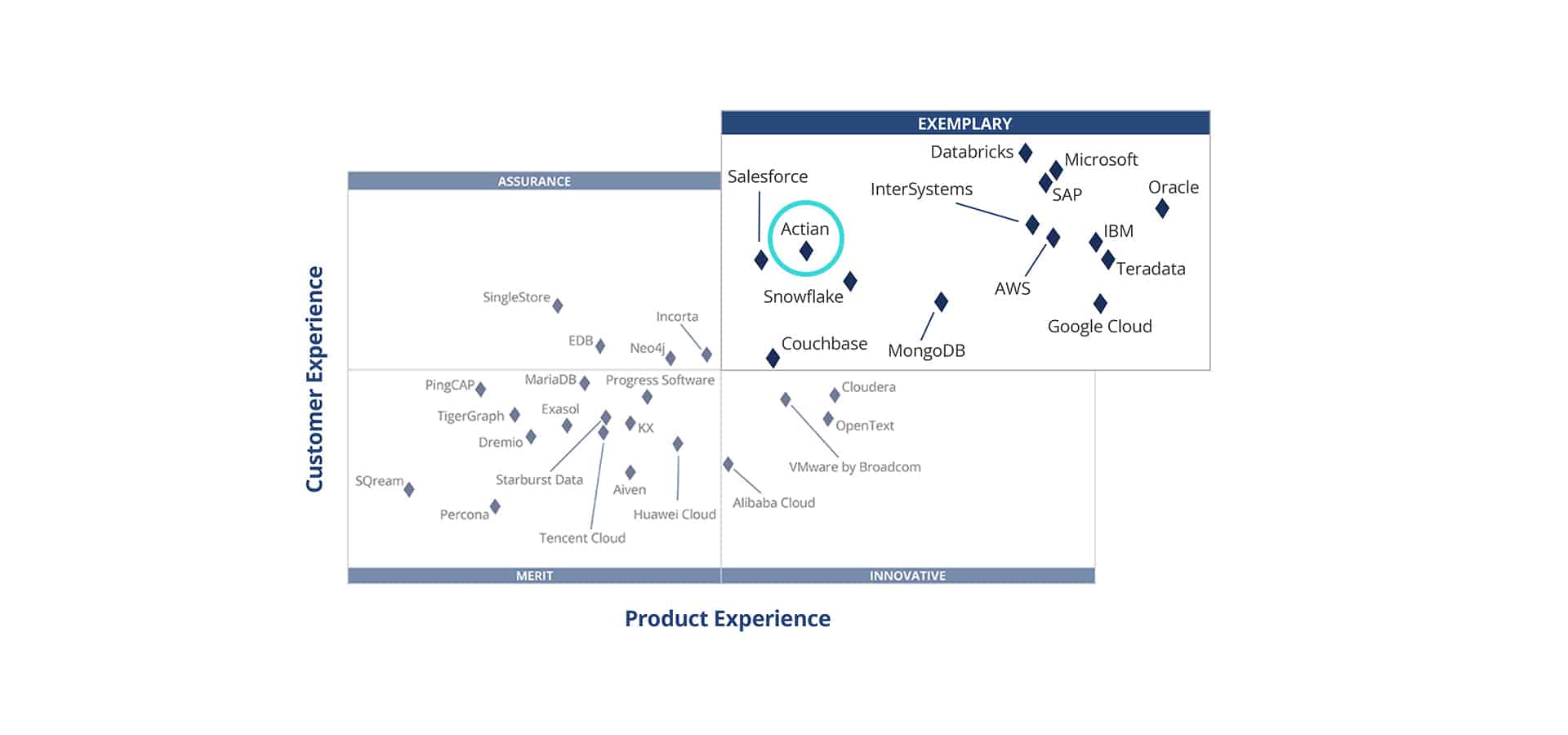

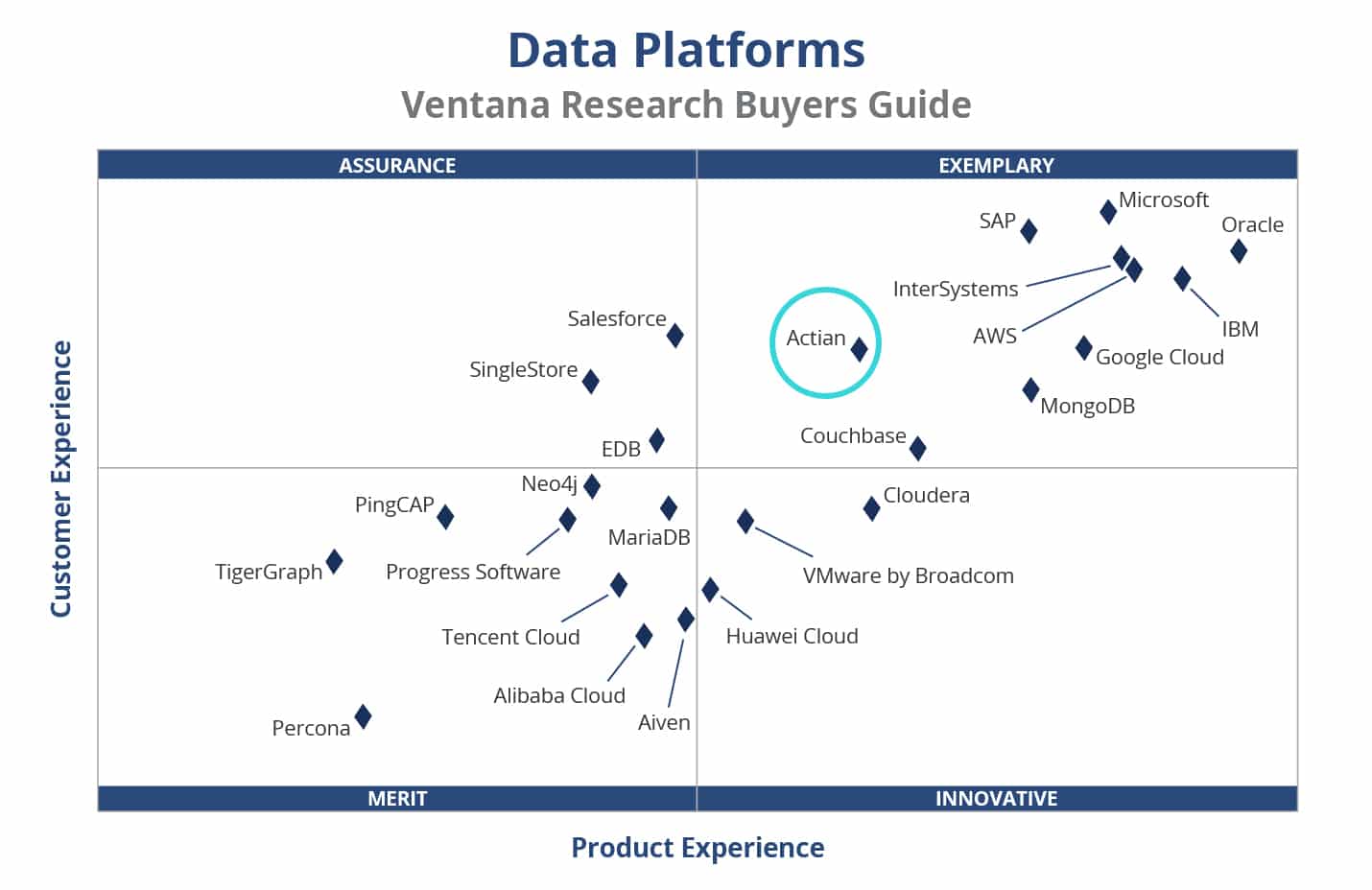

The Actian Data Platform for Manufacturing

Imagine having the ability to foresee equipment failures before they happen, or being able to adjust production lines based on live demand forecasts. Enter the Actian Data Platform, a powerhouse designed to tackle the complexities of manufacturing data head-on. The Actian Data Platform transforms your raw data into actionable intelligence, empowering manufacturers to make smarter, faster decisions.

But it doesn’t stop there. The Actian Data Platform’s robust data warehousing capabilities ensure that all your critical data is centralized, accessible, and ready for deep analysis. Coupled with seamless integration features, this platform breaks down data silos and ensures a cohesive flow of information across all your systems. From the shop floor to the executive suite, everyone operates with the same up-to-date information, fostering collaboration and efficiency like never before. With Actian, chaos turns to clarity and complexity becomes a competitive advantage.

Embracing the Future of Manufacturing

Imagine analytics that predict the future, a data warehouse that’s your single source of truth, and integration that connects it all seamlessly. This isn’t just about managing chaos; it’s about turning data into a well-choreographed dance of efficiency and productivity. By embracing the power of data, you can watch your manufacturing operations transform into a precision machine that’s ready to conquer any challenge!
