Data and AI Observability for AI-Driven Decisions

Summary

- Explains why data and AI observability are critical for reliable, fair, and accurate AI-driven decisions.

- Defines the five pillars of data observability: freshness, volume, schema, distribution, and lineage.

- Outlines how Actian Data Observability connects, monitors, alerts, and remediates data issues.

- Highlights ML-driven anomaly detection, no-code monitoring, and human-in-the-loop collaboration.

- Showcases Actian’s secure, scalable, and compliant observability architecture.

Organizations are increasingly relying on artificial intelligence (AI) to oversee or assist in managing data systems that inform decisions, drive automation, and enhance customer experiences. As these systems become more complex, observability (the ability to monitor, understand, and troubleshoot data and AI pipelines) has emerged as a critical concept. Data and AI observability ensure not only the health of systems but also the reliability, accuracy, and fairness of the insights they generate.

Understanding Data Observability

Data observability refers to comprehensive visibility into the health and performance of data systems, encompassing data pipelines, quality, lineage, and infrastructure. At its core, it enables teams to detect and diagnose problems within data workflows before they impact downstream applications and users.

This form of observability is essential in managing modern data architectures where data flows through multiple stages—from ingestion to transformation to analysis. When any part of this flow is compromised, such as through data corruption, schema changes, or delays, observability tools alert teams, enabling them to respond proactively.

The five pillars of data observability are:

- Freshness: Ensures that data is up to date and delivered on time. This pillar helps teams monitor latency and identify when pipelines are delayed or failing.

- Volume: Tracks the amount of data moving through pipelines to detect anomalies such as missing records or unexpected spikes, which may indicate upstream issues.

- Schema: Observes changes to data structure, including column additions, deletions, or type changes, which can break downstream processes if not properly managed.

- Distribution: Analyzes statistical properties of data (e.g., mean, min, max) to spot outliers or data drift that could signal data integrity problems.

- Lineage: Provides visibility into data flow and dependencies, enabling teams to trace issues to their source and understand the impact of changes across systems.
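To make the pillars concrete, the sketch below shows how the first four might be expressed as simple checks on a single batch of data. It is an illustration only, not Actian's implementation: the function names, thresholds, and the sample "orders" metadata are all assumptions made for this example. Lineage is a graph problem rather than a per-batch check, so it is left out here.

```python
from datetime import datetime, timedelta, timezone
from statistics import mean


def check_freshness(last_loaded_at, now, max_age):
    """Freshness: the latest load must be no older than max_age."""
    return (now - last_loaded_at) <= max_age


def check_volume(row_count, expected, tolerance=0.5):
    """Volume: row count must be within +/- tolerance (a fraction) of expected."""
    return abs(row_count - expected) <= tolerance * expected


def check_schema(observed, expected):
    """Schema: list columns that were added, removed, or changed type."""
    shared = set(observed) & set(expected)
    return {
        "added": sorted(set(observed) - set(expected)),
        "removed": sorted(set(expected) - set(observed)),
        "retyped": sorted(c for c in shared if observed[c] != expected[c]),
    }


def check_distribution(values, baseline_mean, max_shift):
    """Distribution: the batch mean must stay within max_shift of the baseline."""
    return abs(mean(values) - baseline_mean) <= max_shift


# Hypothetical batch metadata for an "orders" table (assumed values).
now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
report = {
    "freshness_ok": check_freshness(now - timedelta(hours=3), now, timedelta(hours=1)),
    "volume_ok": check_volume(row_count=400, expected=1000),
    "schema_diff": check_schema(
        observed={"order_id": "int", "amount": "string", "region": "string"},
        expected={"order_id": "int", "amount": "float", "coupon": "string"},
    ),
    "distribution_ok": check_distribution([10.0, 12.0, 11.0], baseline_mean=11.0, max_shift=2.0),
}
print(report)
```

In this sample, the load is three hours old against a one-hour limit and the row count is 60% below expectation, so both freshness and volume fail, while the schema diff reports one added, one removed, and one retyped column.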

How Actian Data Observability Works

AI is not only a subject of observability, but a central component in the process. Through Actian Data Observability, organizations can monitor the overall health of their data systems and avoid cloud cost surges by using a purpose-built, Apache Spark-based cloud data architecture. Here’s how the process works.

Step One: Connection to Data Sources

Actian’s data observability system connects to organizations’ existing data infrastructure, including data lakes, warehouses, and lakehouses. This includes over 250 connections to help ensure widespread data ingestion and observability over all data streams.

The “no-code” connection also natively supports raw and open table formats, such as:

- Iceberg

- Hudi

- Delta

Step Two: Continuous Data Monitoring

Once the system is connected to all sources of data, it performs comprehensive and continuous monitoring of the data ecosystem. Data health is analyzed and assessed according to the five pillars of data observability listed above.

What makes this process different with Actian is:

- No-code analysis and reporting on data health.

- Data lineage analysis for quick anomaly detection.

- Anomaly detection driven by machine learning (ML).
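The way anomaly detection and lineage analysis can work together is easier to see in code. The sketch below is not Actian's ML model. It swaps in a simple robust statistic, the modified z-score based on the median absolute deviation (MAD), as a stand-in for a learned detector, then walks a lineage graph upstream to find where an anomaly first appears. The table names, row counts, and the `upstream` mapping are hypothetical.

```python
from statistics import median


def robust_z(history, latest):
    """Modified z-score of `latest` against `history`, using median and MAD."""
    med = median(history)
    mad = median(abs(x - med) for x in history)
    if mad == 0:
        # No spread in history: any deviation at all is maximally surprising.
        return 0.0 if latest == med else float("inf")
    return 0.6745 * (latest - med) / mad


def is_anomalous(history, latest, threshold=3.5):
    """Flag values whose modified z-score exceeds the conventional 3.5 cutoff."""
    return abs(robust_z(history, latest)) > threshold


def root_causes(table, upstream, anomalous):
    """Follow anomalous parents upstream from `table`.

    Returns the anomalous tables that have no anomalous parent, i.e. the
    earliest points in the lineage where the problem is visible.
    """
    roots, seen, stack = set(), set(), [table]
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        bad_parents = [p for p in upstream.get(node, []) if p in anomalous]
        if node in anomalous and not bad_parents:
            roots.add(node)
        stack.extend(bad_parents)
    return sorted(roots)


# Hypothetical daily row counts and lineage (child -> list of parents).
history = [1000, 1020, 990, 1010, 1005]
upstream = {
    "gold.revenue": ["silver.orders"],
    "silver.orders": ["bronze.orders_raw"],
    "bronze.orders_raw": [],
}
anomalous = {"gold.revenue", "silver.orders", "bronze.orders_raw"}

print(is_anomalous(history, 120))    # a sudden drop in volume
print(is_anomalous(history, 1015))   # ordinary day-to-day variation
print(root_causes("gold.revenue", upstream, anomalous))
```

A median-based score is used here because a single bad day in the history does not distort it the way a mean and standard deviation would. A production system would typically also account for seasonality and trends.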

Step Three: Alerts and Human-in-the-Loop Collaboration

The third step in the process involves triaging alerts. When an issue arises, the system alerts the responsible teams. Those teams then take part in the human-in-the-loop stage of the process, working with the AI to resolve the problem.
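As a rough illustration of triage with a human in the loop, the sketch below orders open alerts by severity and downstream impact, then records a reviewer's decision on each one. The `Alert` fields, severity levels, and status values are assumptions chosen for the example and do not describe Actian's alert model.

```python
from dataclasses import dataclass, field

# Lower number = handled first. These levels are assumed for the example.
SEVERITY_ORDER = {"critical": 0, "warning": 1, "info": 2}


@dataclass
class Alert:
    table: str
    pillar: str
    severity: str
    downstream_count: int = 0   # how many downstream assets depend on the table
    status: str = "open"
    notes: list = field(default_factory=list)


def triage(alerts):
    """Order open alerts: most severe first, then widest downstream impact."""
    open_alerts = [a for a in alerts if a.status == "open"]
    return sorted(
        open_alerts,
        key=lambda a: (SEVERITY_ORDER[a.severity], -a.downstream_count),
    )


def acknowledge(alert, reviewer, decision):
    """Record a human decision on an alert.

    'confirm' marks the alert for remediation; 'dismiss' closes it as a
    false positive.
    """
    if decision not in ("confirm", "dismiss"):
        raise ValueError("decision must be 'confirm' or 'dismiss'")
    alert.status = "confirmed" if decision == "confirm" else "dismissed"
    alert.notes.append(f"{reviewer}: {decision}")
    return alert


alerts = [
    Alert("silver.orders", "volume", "warning", downstream_count=3),
    Alert("bronze.orders_raw", "schema", "critical", downstream_count=5),
    Alert("gold.revenue", "freshness", "critical", downstream_count=1),
    Alert("bronze.clicks", "distribution", "info"),
]

queue = triage(alerts)
print([a.table for a in queue])

# A reviewer dismisses the top alert as a false positive; it leaves the queue.
acknowledge(queue[0], reviewer="data-eng-oncall", decision="dismiss")
print([a.table for a in triage(alerts)])
```

Recorded decisions such as these are the kind of feedback a detection system could use to reduce repeat false positives, although how that feedback is used is outside the scope of this sketch.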

Step Four: Issue Remediation

Finally, the AI and human elements of the system work together to fine-tune data contracts (ensuring future consistency in formatting), manually fix data issues, or adjust the data quality workflow to both correct problems and avoid future ones.
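A data contract can be thought of as a machine-checkable agreement about what each record should look like. The minimal sketch below assumes a contract expressed as a plain dictionary of column rules. The `CONTRACT` layout, column names, and `validate_row` helper are hypothetical and are not taken from Actian's product.

```python
# Hypothetical contract: expected Python type and nullability per column.
CONTRACT = {
    "order_id": {"type": int, "nullable": False},
    "amount": {"type": float, "nullable": False},
    "coupon": {"type": str, "nullable": True},
}


def validate_row(row, contract):
    """Return a list of contract violations for one record (empty if valid)."""
    violations = []
    for col, rule in contract.items():
        if col not in row:
            violations.append(f"{col}: missing column")
            continue
        value = row[col]
        if value is None:
            if not rule["nullable"]:
                violations.append(f"{col}: null not allowed")
        elif not isinstance(value, rule["type"]):
            violations.append(
                f"{col}: expected {rule['type'].__name__}, got {type(value).__name__}"
            )
    # Columns the contract does not know about are also reported.
    for col in row:
        if col not in contract:
            violations.append(f"{col}: unexpected column")
    return violations


good = {"order_id": 1, "amount": 9.5, "coupon": None}
bad = {"order_id": "1", "amount": None}

print(validate_row(good, CONTRACT))
print(validate_row(bad, CONTRACT))
```

Fine-tuning a contract then amounts to editing the rules, for example marking a column nullable after a reviewer confirms that missing values are legitimate, so the same issue does not raise an alert again.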

Key Components of Actian Data Observability

Now that we’ve discussed the steps in the ML-driven data observability process, let’s talk about a few of the key features of Actian’s product.

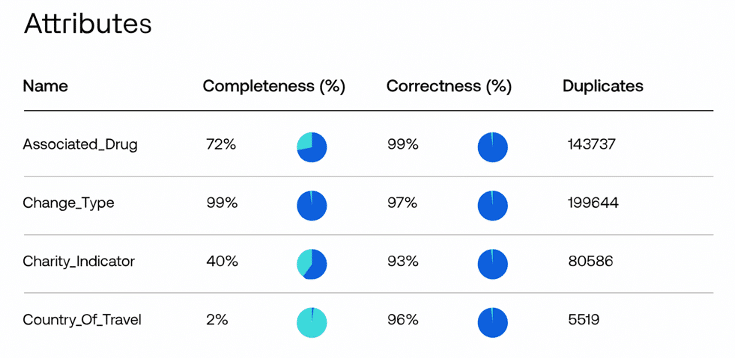

Data Health Dashboards and Quality Reports

Data health dashboards provide a high-level view of every facet of the data pipeline. With automated data quality reports, teams receive key information on KPIs without the need for extended setup time. The dashboards and reports also support the automation of data quality workflows for scalable AI workloads.

Consistency Through Data Layers

Actian Data Observability monitors data at all stages and layers, including the bronze, silver, and gold layers. This helps catch potential issues in the bronze layer before they propagate downstream to the silver and gold layers. Identifying and addressing these issues early prevents problems in the consumption part of the data pipeline.
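One common way to stop bad data from moving downstream is to place a quality gate in front of each layer. The sketch below assumes a batch is a list of dictionaries and that each gate is a named set of checks. The gate definitions and the `run_pipeline` helper are illustrative assumptions, not a description of how Actian implements layer monitoring.

```python
def run_pipeline(batch, gates):
    """Promote a batch layer by layer, stopping at the first failing gate.

    `gates` is an ordered list of (target_layer, checks), where `checks`
    maps a check name to a function that takes the batch and returns a bool.
    """
    reached = "bronze"  # every batch starts in the raw layer
    for layer, checks in gates:
        failures = [name for name, check in checks.items() if not check(batch)]
        if failures:
            return {"reached": reached, "blocked_at": layer, "failures": failures}
        reached = layer
    return {"reached": reached, "blocked_at": None, "failures": []}


# Hypothetical gates for an orders pipeline.
gates = [
    ("silver", {
        "non_empty": lambda b: len(b) > 0,
        "no_null_ids": lambda b: all(r.get("order_id") is not None for r in b),
    }),
    ("gold", {
        "positive_amounts": lambda b: all(r["amount"] > 0 for r in b),
    }),
]

dirty = [{"order_id": 1, "amount": 20.0}, {"order_id": None, "amount": 5.0}]
clean = [{"order_id": 1, "amount": 20.0}, {"order_id": 2, "amount": 5.0}]

print(run_pipeline(dirty, gates))
print(run_pipeline(clean, gates))
```

The batch with a null `order_id` is held in bronze and never reaches silver or gold, which is the early-catch behavior described above.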

Open, Scalable Architecture

A scalable, cloud-based architecture means that organizations using Actian Data Observability don’t have to worry about surges or spikes in cloud computing costs. In addition, the system integrates with over 250 data sources (both modern and legacy), third-party data catalogs, and engines for ticketing, workflow, and orchestration.

Security and Compliance

Security and compliance are top concerns for most organizations when it comes to any data product or service. Actian uses a Virtual Private Cloud (VPC) to keep all data within the organization’s virtual environment, which is secured through both at-rest and in-transit encryption. Role-based permissions and authentication control who can access that data. AI observability can also help organizations stay compliant with industry regulations, including GDPR, CCPA, HIPAA, and PCI-DSS.

Enhancing AI Observability With Actian

Actian offers tools that enhance AI observability by enabling real-time data processing, robust integration capabilities, and intelligent analytics. With Actian Data Observability, businesses can unify data sources and monitor AI performance with greater clarity and control. Schedule a full demonstration today.