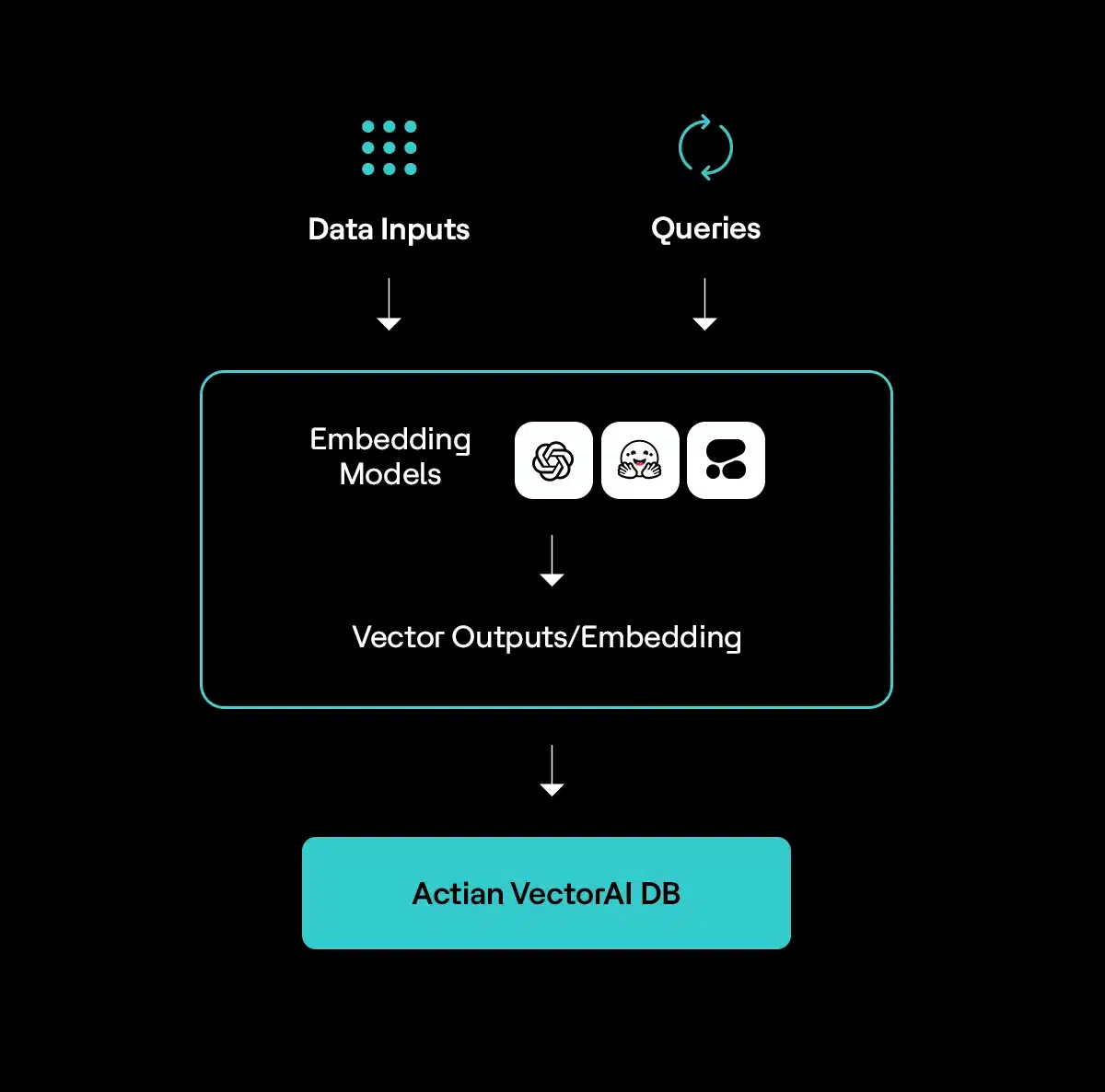

Vector database built for edge and on-premises

Get early access

(i.e. sales@..., support@...)

Build locally, deploy securely, and scale with your stack

Retrieval accuracy stays above 99.4% from 1M to 10M vectors. No accuracy tradeoff as your dataset grows.

Cloud vector DBs weren’t built for edge use cases

Network latency blocks real-time applications

Cloud round-trips add 200-400ms to every query you run. You can’t build sub-100ms applications when your database contributes most of the latency.

Third-party infrastructure blocks regulated deployments

HIPAA and GDPR require your data to stay within your control. Cloud services introduce third-party processing that fails your compliance requirements.

Cloud-only architecture blocks entire deployment scenarios

Your edge devices, disconnected environments, and embedded systems can’t assume reliable internet. Cloud databases leave entire classes of your AI applications unaddressed.

Built for developers at the edge

Build, test, and run AI where your data actually lives

Edge AI engineers

Build autonomous systems, robotics, and IoT applications that need vector search on resource-constrained devices.

Deploy to: NVIDIA Jetson, Raspberry Pi, industrial edge servers

Manufacturing teams

Run AI in disconnected factory environments for predictive maintenance, quality inspection, and production optimization.

Deploy to: Air-gapped facilities, plant floors, production lines

Build HIPAA-compliant AI that keeps patient data on-premises for clinical decision support, medical imaging, and record search.

Deploy to: Hospital data centers, clinic servers, research facilities

Manage vector search across distributed sites like retail, branch offices, and multi-region deployments.

Deploy to: Hybrid environments, edge + cloud, multi-site infrastructure

FAQ

Yes. Use the same APIs from laptop to data center. Build locally, deploy anywhere.