What is a Data Pipeline?

A data pipeline is a set of processing steps that move data from a source to a destination system. The steps of the data pipeline are sequential because the output from one step is the input of subsequent steps. The data processing within each step can be done in parallel to reduce processing time. The first step of the data pipeline is typically ingestion. The final step is an insert or load into a real-time data analytics database.
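The structure described above, sequential steps with parallel work inside each step, can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the names `run_step`, `run_pipeline`, `ingest`, and `normalize` are hypothetical, and a thread pool stands in for whatever parallel engine a real platform provides.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Iterable, List

Record = dict
Step = Callable[[Record], Record]


def run_step(step: Step, records: Iterable[Record], workers: int = 4) -> List[Record]:
    """Apply one step to every record in parallel; input order is preserved."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        return list(pool.map(step, records))


def run_pipeline(steps: List[Step], records: List[Record]) -> List[Record]:
    """Run the steps sequentially: the output of each step feeds the next."""
    for step in steps:
        records = run_step(step, records)
    return records


def ingest(record: Record) -> Record:
    # Hypothetical ingestion step: tag each record with its source.
    return {**record, "source": "demo"}


def normalize(record: Record) -> Record:
    # Hypothetical normalization step: trim and lower-case the email field.
    return {**record, "email": record["email"].strip().lower()}


if __name__ == "__main__":
    raw = [{"email": " Ann@Example.com "}, {"email": "BOB@example.com"}]
    print(run_pipeline([ingest, normalize], raw))
```

Each step only sees the output of the previous one, so steps can be reordered or reused in another pipeline without changing their code.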

Data pipelines control the flow of data as a well-defined process that supports data governance. They also create opportunities for reuse when building future pipelines. Reusable components can be refined over time, resulting in faster deployment and improved reliability. Data pipelines allow the entire data flow to be instrumented and centrally monitored, which reduces management overhead. Data flow automation also reduces busywork.

Data Pipeline Example

Data pipeline steps will vary based on the data type and tools used. A representative sequence of steps, from identifying suitable sources through to loading the data, is listed below:

- Data Identification – Data catalogs help identify potential data sources for the required analysis. In general, the pipeline is used to populate a specific data warehouse, such as a customer data platform for which the data sources are well known. Data catalogs also contain metadata about the data’s quality and trustworthiness, which can be used as selection criteria.

- Profiling – Profiling helps to understand data formats and generate appropriate scripts for data ingestion. Raw data must sometimes be exported into a comma-delimited format when direct access to the source is challenging.

- Data Ingestion – Data sources can include operational systems, web clicks, social media posts, and log files. Data integration technology can provide predefined connectors as well as batch and streaming APIs. Semi-structured files may need special streamed JSON or XML record formats. Ingestion can happen in batches, in micro-batches, or as a stream as records are created.

- Normalization – Duplicates can be filtered out, and gaps filled with default or calculated values. Data can be sorted into primary key order, later becoming the natural key for a columnar database table. Outliers and null values can be addressed in this step.

- Formatting – Data has to be made consistent using a uniform format. Format challenges include how U.S. states are written: spelled out in full or as a two-letter abbreviation.

- Merging – Multiple files may be needed to construct a single record. Any clashes must be managed during the data merge and reconciliation step.

- Loading – The analytics repository or database is the usual target for this final data pipeline step. Parallel loaders can be used to load data as multiple streams. The input file must be split before a parallel load to avoid the single file being a performance bottleneck. Adequate CPU cores must be allocated to the load to maximize throughput and reduce the total elapsed time for the load operation.
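The normalization, formatting, and merging steps above can be illustrated with plain Python. This is a sketch under stated assumptions: the field names (`id`, `state`, `country`, `customer_id`, `amount`), the default country, and the three-entry `STATE_CODES` table are all invented for the example and are not taken from any specific product.

```python
from typing import Dict, List

# Illustrative subset only; a real mapping would cover every state.
STATE_CODES = {"california": "CA", "new york": "NY", "texas": "TX"}


def normalize(rows: List[dict], default_country: str = "US") -> List[dict]:
    """Drop duplicate ids (first one wins), fill gaps, and sort by primary key."""
    seen: Dict[int, dict] = {}
    for row in rows:
        if row["id"] not in seen:
            filled = dict(row)
            if not filled.get("country"):
                filled["country"] = default_country
            seen[row["id"]] = filled
    return [seen[key] for key in sorted(seen)]


def format_state(value: str) -> str:
    """Return a two-letter code whether the state is spelled out or abbreviated."""
    cleaned = value.strip()
    if len(cleaned) == 2:
        return cleaned.upper()
    # Unknown names are returned unchanged so they can be reviewed later.
    return STATE_CODES.get(cleaned.lower(), cleaned)


def merge(customers: List[dict], orders: List[dict]) -> List[dict]:
    """Build one record per customer by attaching order totals from a second file."""
    totals: Dict[int, float] = {}
    for order in orders:
        key = order["customer_id"]
        totals[key] = totals.get(key, 0.0) + order["amount"]
    return [{**c, "order_total": totals.get(c["id"], 0.0)} for c in customers]


customers = [
    {"id": 2, "state": "Texas", "country": None},
    {"id": 1, "state": "ca", "country": "US"},
    {"id": 2, "state": "TX", "country": "US"},  # duplicate id, dropped
]
orders = [{"customer_id": 1, "amount": 20.0}, {"customer_id": 1, "amount": 5.5}]

clean = [{**row, "state": format_state(row["state"])} for row in normalize(customers)]
print(merge(clean, orders))
```

Keeping each step as a separate function mirrors the list above and makes it possible to test and reuse the steps independently.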

Essentials for a Robust Data Pipeline

Below are some desirable characteristics of the technology platform that the data pipeline uses:

- Hybrid deployment across on-premises and cloud environments.

- Works with CDC tools to sync with the data sources.

- Multiple cloud provider support.

- Support for legacy big data platforms and file formats, such as those used with Hadoop.

- Data integration technology includes connectors to popular data sources.

- Monitoring tools to view and execute data pipeline steps.

- Parallel processing within each step in the pipeline.

- Data profiling technology to construct big data workflows faster.

- ETL and ELT capabilities so data can be manipulated inside and outside the target data warehouse.

- Data transformation functions.

- Default value generation.

- Exception handling for failed processes.

- Data integrity checking to validate completeness at the end of each step.

- Graphical tools to construct pipelines.

- Ease of maintenance.

- Encryption of data at rest and in flight.

- Data masking for compliance.
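Two of the characteristics listed above, exception handling for failed processes and integrity checking at the end of each step, can be combined in a small wrapper. The sketch below is illustrative only: `run_with_retry`, `IntegrityError`, and `flaky_mask` are hypothetical names, and the completeness check assumes a step that is expected to return exactly as many rows as it received.

```python
import time
from typing import Callable, List, Optional


class IntegrityError(Exception):
    """Raised when a step's output fails the completeness check."""


def run_with_retry(step: Callable[[List[dict]], List[dict]], rows: List[dict],
                   attempts: int = 3, delay_seconds: float = 0.0) -> List[dict]:
    """Run a step, retry it on failure, and validate the row count afterwards."""
    last_error: Optional[Exception] = None
    for _ in range(attempts):
        try:
            result = step(rows)
        except Exception as error:  # broad on purpose: any failure triggers a retry
            last_error = error
            time.sleep(delay_seconds)
            continue
        # Assumed completeness rule: the step must not drop or add rows.
        if len(result) != len(rows):
            raise IntegrityError(f"expected {len(rows)} rows, got {len(result)}")
        return result
    raise RuntimeError(f"step failed after {attempts} attempts") from last_error


calls = {"count": 0}


def flaky_mask(rows: List[dict]) -> List[dict]:
    """Hypothetical step: fails once, then masks the email column."""
    calls["count"] += 1
    if calls["count"] == 1:
        raise ConnectionError("temporary outage")
    return [{**row, "email": "***"} for row in rows]


print(run_with_retry(flaky_mask, [{"id": 1, "email": "ann@example.com"}]))
```

A production platform would typically add backoff between attempts and route the final failure to an alerting system instead of raising directly.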

Benefits of Using Data Pipelines

Some of the benefits of using a data pipeline include the following:

- Pipelines promote component reuse and stepwise refinement.

- Pipelines allow the end-to-end process to be instrumented, monitored, and managed. Failed steps can then trigger alerts, be mitigated, and be retried.

- Reuse accelerates pipeline development and test times.

- Data source utilization can be monitored so that unused data can be retired.

- Both data usage and data consumers can be cataloged.

- Future data integration projects can assess existing pipelines for bus or hub-based connections.

- Data pipelines promote data quality and data governance.

- Robust data pipelines lead to better-informed decisions.

Data Pipelines in Actian

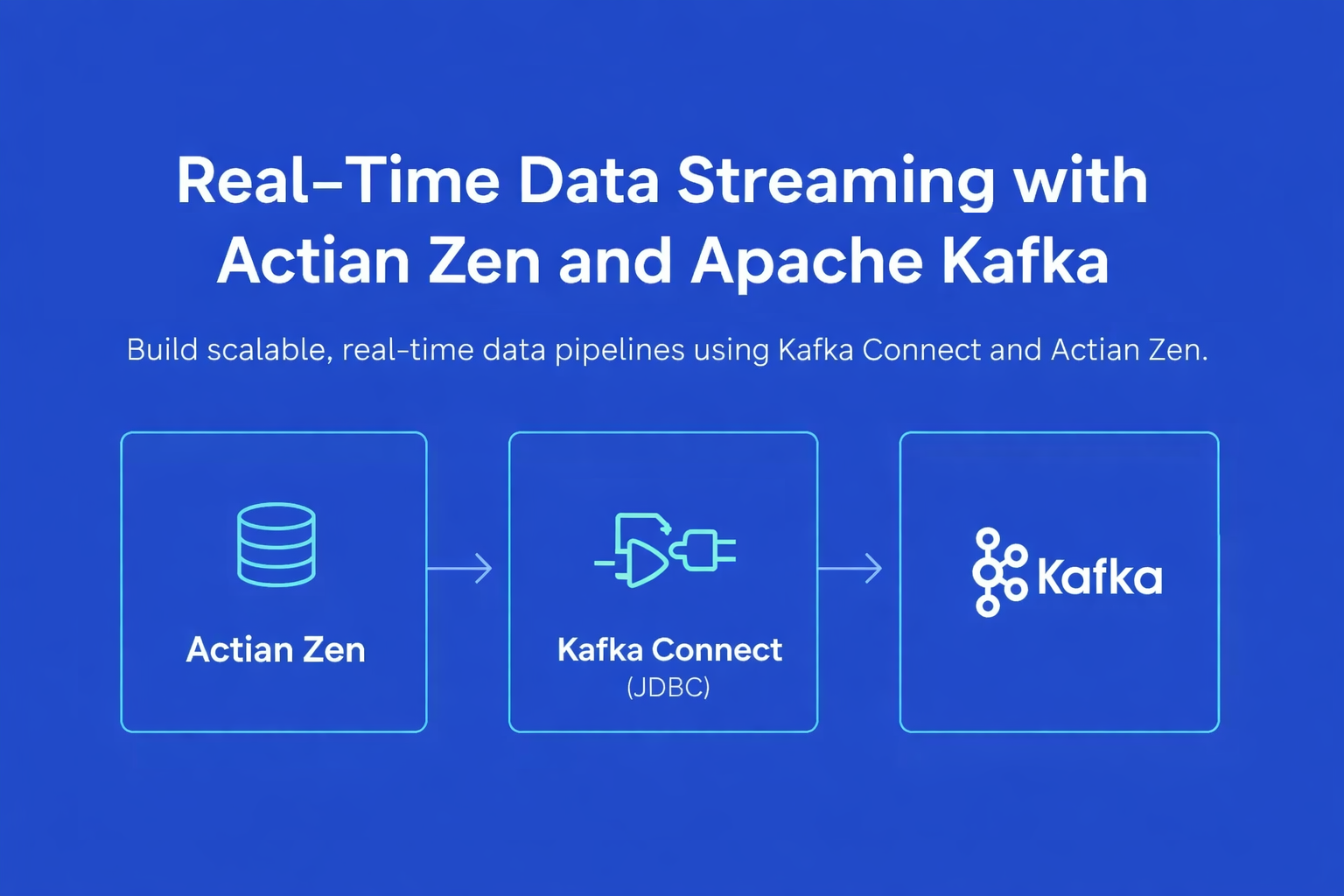

Actian Data Intelligence Platform is purpose-built to help organizations unify, manage, and understand their data across hybrid environments. It brings together metadata management, governance, lineage, quality monitoring, and automation in a single platform. This enables teams to see where data comes from, how it’s used, and whether it meets internal and external requirements.

Through its centralized interface, Actian supports real-time insight into data structures and flows, making it easier to apply policies, resolve issues, and collaborate across departments. The platform also helps connect data to business context, enabling teams to use data more effectively and responsibly. Actian’s platform is designed to scale with evolving data ecosystems, supporting consistent, intelligent, and secure data use across the enterprise.

FAQ

A data pipeline is a structured series of processes that move data from source systems to destinations such as databases, data warehouses, data lakes, or analytics platforms. It handles extraction, transformation, validation, and delivery.

Most data pipelines include data ingestion, connectors, transformation (ETL/ELT), workflow orchestration, data quality checks, storage layers, and output delivery to analytics or operational systems.

“ETL” is a process that involves extracting, transforming, and loading information. Rather than being different from data pipelines, the ETL process is simply one way that a data pipeline can get data from the source to its eventual destination.
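As a rough illustration of ETL as one form of data pipeline, the sketch below extracts rows from CSV text, transforms them, and loads them into an in-memory SQLite database using only the Python standard library. The sample data, the `sales` table, and the rule of skipping rows with unparseable amounts are assumptions made for the example.

```python
import csv
import io
import sqlite3
from typing import List, Tuple

# Hypothetical sample input; the second row has an amount that cannot be parsed.
RAW_CSV = "id,name,amount\n1, Ann ,10.5\n2,Bob,n/a\n3, Cy ,7\n"


def extract(text: str) -> List[dict]:
    """Extract: parse CSV text into dictionaries keyed by the header row."""
    return list(csv.DictReader(io.StringIO(text)))


def transform(rows: List[dict]) -> List[Tuple[int, str, float]]:
    """Transform: trim names, cast types, and skip rows with unparseable amounts."""
    cleaned = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue
        cleaned.append((int(row["id"]), row["name"].strip(), amount))
    return cleaned


def load(rows: List[Tuple[int, str, float]], connection: sqlite3.Connection) -> int:
    """Load: insert the cleaned rows and return the resulting table row count."""
    connection.execute(
        "CREATE TABLE IF NOT EXISTS sales (id INTEGER PRIMARY KEY, name TEXT, amount REAL)"
    )
    connection.executemany("INSERT INTO sales VALUES (?, ?, ?)", rows)
    connection.commit()
    return connection.execute("SELECT COUNT(*) FROM sales").fetchone()[0]


if __name__ == "__main__":
    db = sqlite3.connect(":memory:")
    print(load(transform(extract(RAW_CSV)), db))
```

In an ELT variant, the raw rows would be loaded first and the trimming and casting would run as SQL inside the target database.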

The main stages of a data pipeline are sourcing, processing, and loading. This essentially means finding the source of information, processing that information to be in alignment with the way you store your data, and transferring that information to its destination.

Innovations can take data pipelines in a variety of new directions. Anticipated developments include deeper integration of artificial intelligence (AI), the decentralization of data storage for easier accessibility and rapid scalability, and the introduction of serverless computing models.