What’s an Edge Data Fabric?

A data fabric is a combination of data architecture, management practices, and policies that delivers a consistent set of data services across every domain and endpoint where data lives, from the data center to the cloud to the edge. Data fabrics essentially serve as both the translator and the plumbing for data in all its forms, wherever it sits and wherever it needs to go, regardless of whether the data consumer is a human or a machine.

Data fabrics aren’t brand new, but they are suddenly getting a lot of attention in IT as companies move to multi-cloud and the edge. That’s because organizations desperately need a framework to manage their data: to move it, secure it, prepare it, govern it, and integrate it into IT systems.

Data fabrics got their start back in the mid-2000s, when computing started to spread from data centers into the cloud. They became more popular as organizations embraced hybrid clouds, and today data fabrics are helping to reduce the complexity of data streams moving to and from the network’s edge. But the goalposts have moved: the network’s edge is now mobile and the IoT, collectively labeled “the edge.”

What’s different is where the data will emanate from and how fluid it will be. In other words, mobile and IoT – the edge – will drive data creation. Further, processing and analysis will happen at various points: on the device, at the gateways, and across the cloud. Perhaps “Fluid Distributed Data” would be a better term than “Big Data”?

Regardless, more data ultimately translates to more viable business opportunities – particularly given that this new data is generated at the point of action from humans and machines. To take full advantage of the growing amounts of data available to them, enterprises need a way to manage it more efficiently across platforms, from the edge to the cloud and back. They need to process, store, and optimize different types of data that come from different sources with different levels of cleanliness and validity so they can connect it to internal applications and apply business process logic, increasingly aided by artificial intelligence and machine learning models.

It’s a big challenge. One solution enterprises are pursuing now is the adoption of a data fabric. And, as data volumes continue to grow at the network’s edge, that solution will evolve further into what will more commonly be referred to as an edge data fabric.

How Data Fabric Applies to the Edge

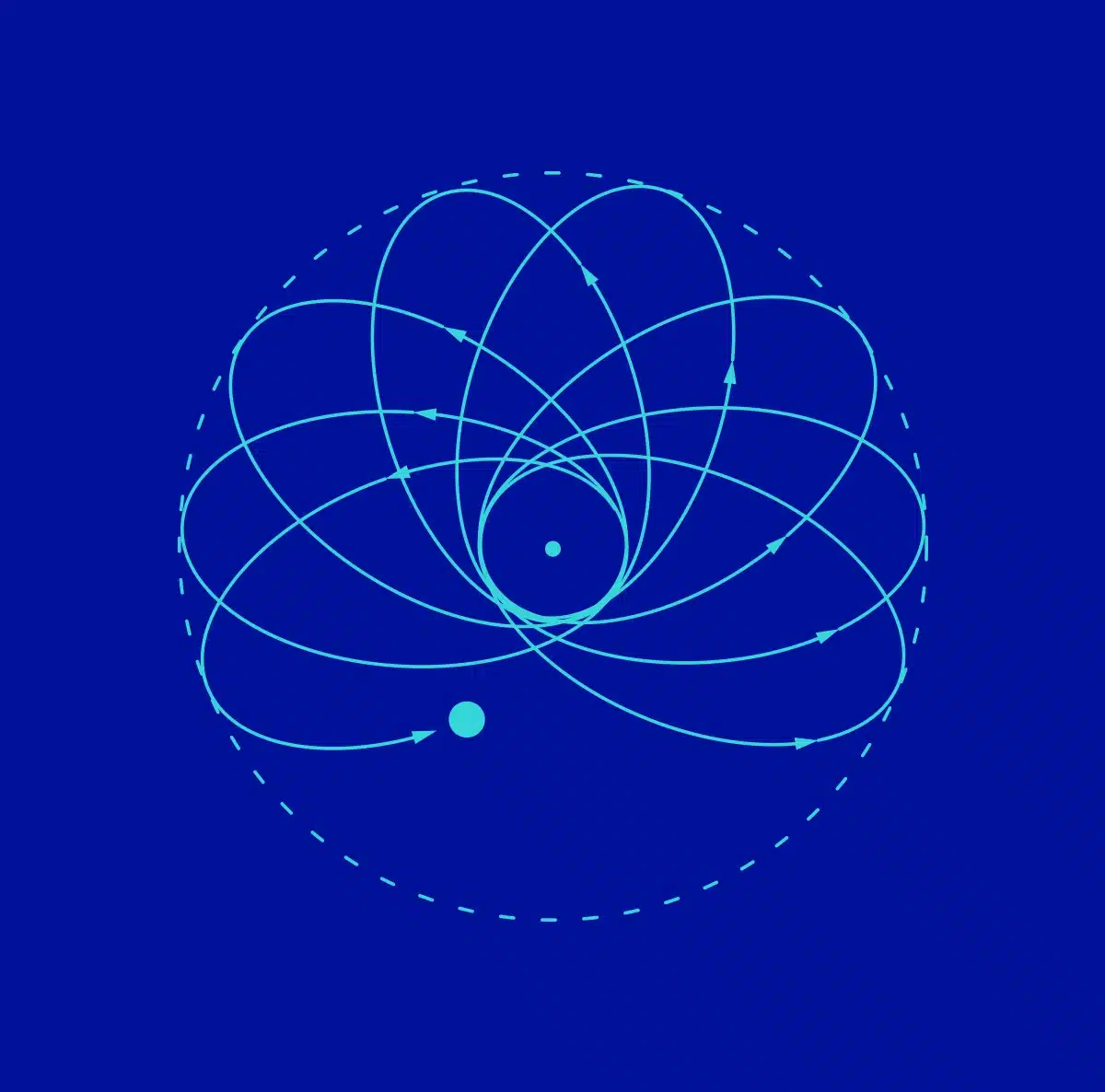

Edge computing poses a unique set of challenges for data generated and processed outside the network core. The devices operating at the edge are themselves getting more complex. Examples include networked PLCs that manage solenoids, which in turn control process flows in a chemical plant; pressure sensors that determine weight; and active RFID tags that determine the location of a cargo container. The vast majority of processing used to take place in the data center, but a larger portion now takes place in the cloud. In both cases, the processing happens on one side of a gateway. The data center was fixed, not virtual, but the cloud is fluid. If you consider the definition of cloud, you can see why a data fabric is needed there. Cloud is about fluidity and removing locality but, like the data center, it is about processing data associated with applications. We may not care where the Salesforce cloud, the Oracle cloud, or any other cloud is physically located, but we do care that our data must transit between various clouds and persist in each of them for use in different operations.

Because of all that complexity, organizations have to determine which pieces of the processing are done at which level: on the device, at the gateway, or in the cloud. Each level has its own applications, each application manipulates data in its own way, and each manipulation requires data processing and memory management.
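To make the placement question concrete, here is a minimal sketch of how such a decision might be expressed in code. Everything in it is an assumption made for illustration: the `Tier` and `Workload` names, the `place` function, and the 10 ms and 200 ms thresholds are hypothetical and would need tuning for any real deployment.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    DEVICE = "device"
    GATEWAY = "gateway"
    CLOUD = "cloud"


@dataclass(frozen=True)
class Workload:
    name: str
    max_latency_ms: float   # how quickly a result is needed
    needs_fleet_data: bool  # True if it combines data from many devices


def place(workload: Workload) -> Tier:
    """Pick a processing tier using illustrative, made-up thresholds."""
    # Very tight latency budgets stay on the device, unless the workload
    # needs data that a single device does not have.
    if workload.max_latency_ms < 10 and not workload.needs_fleet_data:
        return Tier.DEVICE
    # Moderately tight budgets are handled one hop away, at the gateway.
    if workload.max_latency_ms < 200:
        return Tier.GATEWAY
    # Everything else can tolerate a round trip to the cloud.
    return Tier.CLOUD


if __name__ == "__main__":
    for w in (
        Workload("valve-control", 5, False),
        Workload("site-dashboard", 100, True),
        Workload("model-training", 60_000, True),
    ):
        print(f"{w.name} -> {place(w).value}")
```

A real fabric would weigh more than latency (bandwidth cost, device memory, data sensitivity), but the shape of the decision is the same: each workload is mapped to the level where it can actually meet its constraints.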

The point of a data fabric is to handle all that complexity. Spark, for example, would be a key element of a data fabric in the cloud, as it has quickly become the easiest way to support streaming data between various cloud platforms from different vendors. The edge is quickly becoming a new cloud, leveraging the same cloud technologies and standards in combination with new, edge-specific networks such as 5G and Wi-Fi 6. And, like the core cloud, there are richer, more intelligent applications running on each device, on gateways, and on what amounts to a data center running in a coat closet on the factory floor, in an airplane, on a cargo ship, and so forth. It stands to reason that you will need an edge data fabric analogous to the one that is solidifying in the core cloud.

Edge Data Fabric’s Common Elements

To handle the growing number of data requirements edge devices pose, an edge data fabric has to perform several important functions. It has to be able to:

- Access many different interfaces: HTTP, MQTT, radio networks, manufacturing networks.

- Run on multiple operating environments: Most importantly, POSIX-compliant ones.

- Work with key protocols and APIs: Including more recent ones such as REST APIs.

- Provide JDBC/ODBC database connectivity: For legacy applications and quick-and-dirty connections between databases.

- Handle streaming data: Through platforms such as Spark and Kafka.
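As a rough illustration of the "translator" role behind the list above, the sketch below normalizes data arriving over two different interfaces into one common record. It assumes a JSON body arriving over HTTP and a numeric payload arriving over a publish/subscribe interface such as MQTT. The `Reading` type, the JSON field names, and the `devices/<id>/<metric>` topic layout are all hypothetical, chosen only for the example, and no real network libraries are involved.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    source: str     # interface the data arrived on
    device_id: str
    metric: str
    value: float


def from_http_json(body: str) -> Reading:
    """Parse a hypothetical JSON body such as a REST endpoint might receive."""
    doc = json.loads(body)
    return Reading("http", doc["device"], doc["metric"], float(doc["value"]))


def from_mqtt(topic: str, payload: bytes) -> Reading:
    """Parse a hypothetical topic 'devices/<id>/<metric>' with a numeric payload."""
    _, device_id, metric = topic.split("/")
    return Reading("mqtt", device_id, metric, float(payload.decode("utf-8")))


if __name__ == "__main__":
    readings = [
        from_http_json('{"device": "plc-7", "metric": "pressure", "value": 101.3}'),
        from_mqtt("devices/tag-42/temperature", b"21.5"),
    ]
    for r in readings:
        print(r)
```

Once every source is reduced to the same record shape, downstream pieces (a database connection, a streaming pipeline) can treat the data uniformly without knowing which interface it came from.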

Conclusion

Data fabric is not a single product, platform, or set of services, and neither is edge data fabric. Edge data fabric is an extension of data fabric, but the differences in resources and requirements at the edge mean that managing edge data requires substantial changes. In the next blog, we’ll discuss why edge data fabric matters and why now.