What Today’s Data Events Reveal About Tomorrow’s Enterprise Priorities

Liz Brown

July 1, 2025

After attending several industry events over the last few months—from Gartner® Data & Analytics Summit in Orlando to the Databricks Data + AI Summit in San Francisco to regional conferences—it’s clear that some themes are becoming prevalent for enterprises across all industries. For example, artificial intelligence (AI) is no longer a buzzword dropped into conversations—it is the conversation.

Granted, we’ve been hearing about AI and GenAI for the last few years, but the presentations, booth messaging, sessions, and discussions at events have quickly evolved as organizations are now implementing actual use cases. Not surprisingly, at least to those of us who have advocated for data quality at scale throughout our careers, the launch of AI use cases has given rise to a familiar but growing challenge. That challenge is ensuring data quality and governance for the extremely large volumes of data that companies are managing for AI and other uses.

As someone who’s fortunate enough to spend a lot of time meeting with data and business leaders at conferences, I have a front-row seat to what’s resonating and what’s still frustrating organizations in their data ecosystems. Here are five key takeaways:

1. AI Has a Data Problem, and Everyone Knows It

At every event I’ve attended recently, a familiar phrase kept coming up: “garbage in, garbage out.” Organizations are excited about AI’s potential, but they’re worried about the quality of the data feeding their models. We’ve moved from talking about building and fine-tuning models to talking about data readiness, specifically how to ensure data is clean, governed, and AI-ready to deliver trusted outcomes.

“Garbage in, garbage out” is an old adage, but it holds true today, especially as enterprises look to optimize AI across their business. Data and analytics leaders are emphasizing the importance of data governance, metadata, and trust. They’re realizing that data quality issues can quickly cause major downstream problems that are time-consuming and expensive to fix. The fact is that everyone is investing or looking to invest in AI. Now the race is on to ensure those investments pay off, which requires quality data.

2. Old Data Challenges Are Now Bigger and Move Faster

Issues such as data governance and data quality aren’t new. The difference is that they have now been amplified by the scale and speed of today’s enterprise data environments. Fifteen years ago, if something went wrong with a data pipeline, maybe a report was late. Today, one data quality issue can cascade through dozens of systems, impact customer experiences in real time, and train AI on flawed inputs. In other words, problems scale.

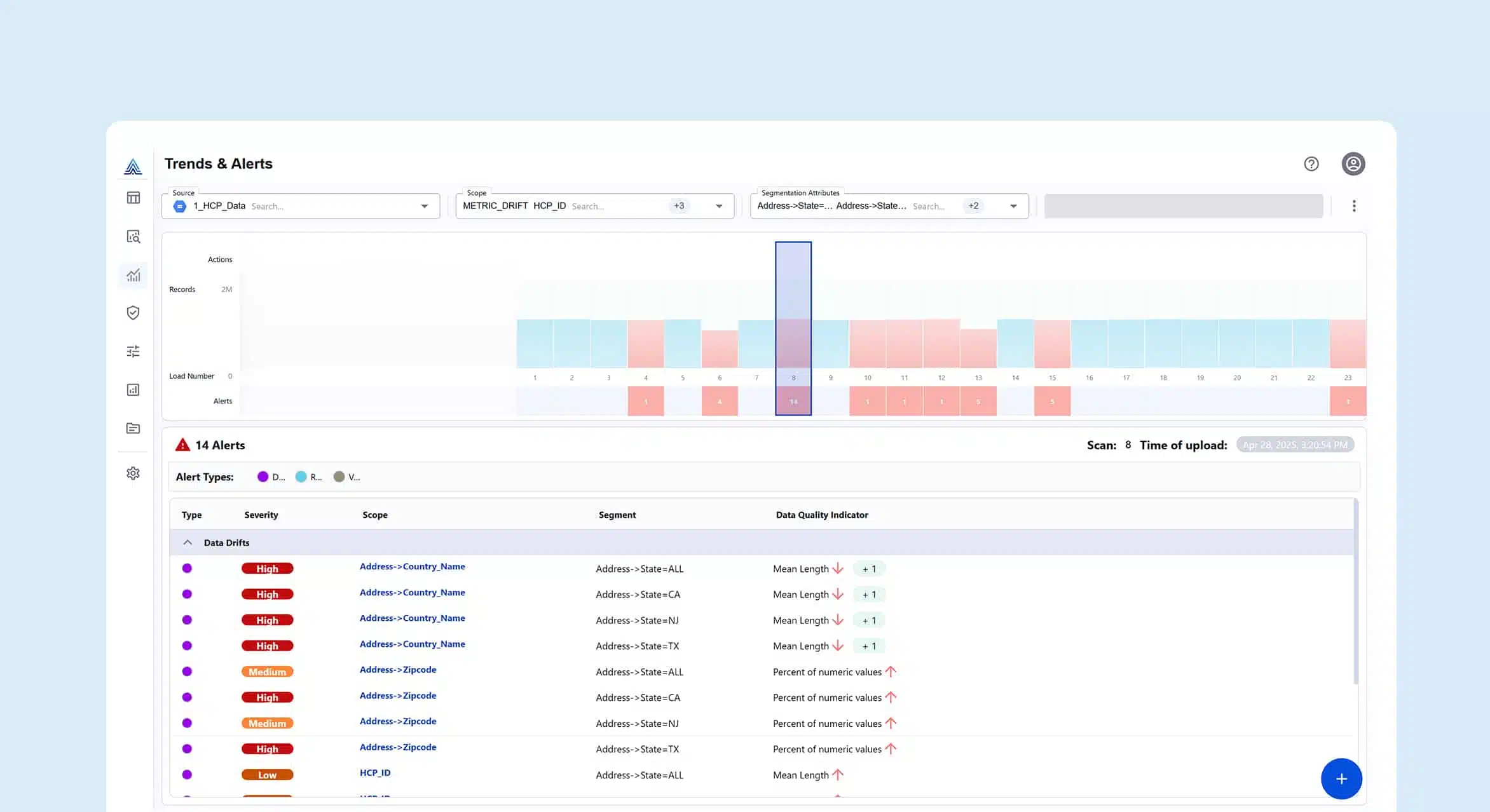

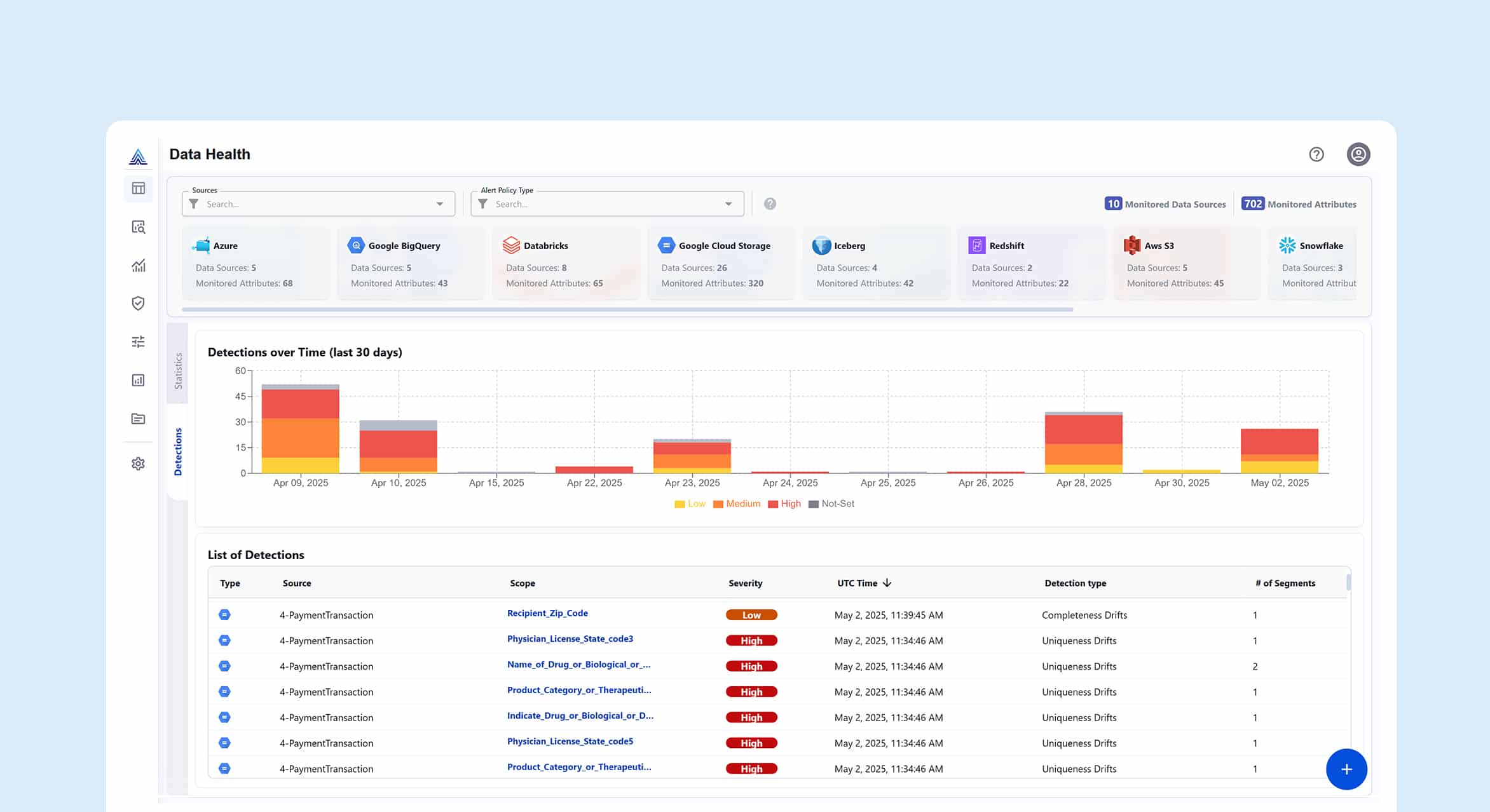

This is why data observability is essential. Monitoring infrastructure alone is no longer enough. Organizations need end-to-end visibility into data flows, lineage, quality metrics, and anomalies, and they need to mitigate issues before those issues move downstream and cause disruption. At Actian, we’ve seen how data observability capabilities, including real-time alerts, custom metrics, and native integration with tools like Jira, resonate strongly with customers. Companies must move beyond fixing problems after the fact to proactively identifying and addressing issues early in the data lifecycle.
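To make the idea of catching issues before they move downstream concrete, here is a minimal Python sketch of a batch-level quality check. It is an illustration only, not how Actian’s product works: the names `null_rate`, `check_batch`, and `Alert`, the 5% null-rate limit, and the z-score threshold of 3 are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Alert:
    """A hypothetical alert record; a real system might open a ticket instead."""
    metric: str
    value: float
    reason: str


def null_rate(rows, column):
    """Return the fraction of rows where `column` is missing or None."""
    if not rows:
        return 0.0
    missing = sum(1 for row in rows if row.get(column) is None)
    return missing / len(rows)


def check_batch(rows, column, history, max_null_rate=0.05, z_threshold=3.0):
    """Check one batch before it is passed downstream.

    rows     -- list of dicts, one per record
    column   -- the column whose completeness we care about
    history  -- row counts of previous batches, used as a volume baseline
    Thresholds are illustrative assumptions, not recommended values.
    """
    alerts = []

    # Custom quality metric: share of missing values in the column.
    rate = null_rate(rows, column)
    if rate > max_null_rate:
        alerts.append(Alert(f"null_rate({column})", rate, "above threshold"))

    # Simple volume anomaly: compare this batch's size to past batches.
    if len(history) >= 2:
        mu = mean(history)
        sigma = pstdev(history)
        if sigma > 0 and abs(len(rows) - mu) / sigma > z_threshold:
            alerts.append(Alert("row_count", float(len(rows)), "unusual volume"))

    return alerts
```

A pipeline could call `check_batch` on each incoming batch and hold the load whenever the returned list is not empty, which is the “fix it early” behavior described above in its simplest form.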

3. Metadata Is the Unsung Hero of Data Intelligence

While AI and observability steal the spotlight at conferences, metadata is quietly becoming a top differentiator. Surprisingly, metadata management wasn’t front and center at most events I attended, but it should be. Metadata provides the context, traceability, and searchability that data teams need to scale responsibly and deliver trusted data products.

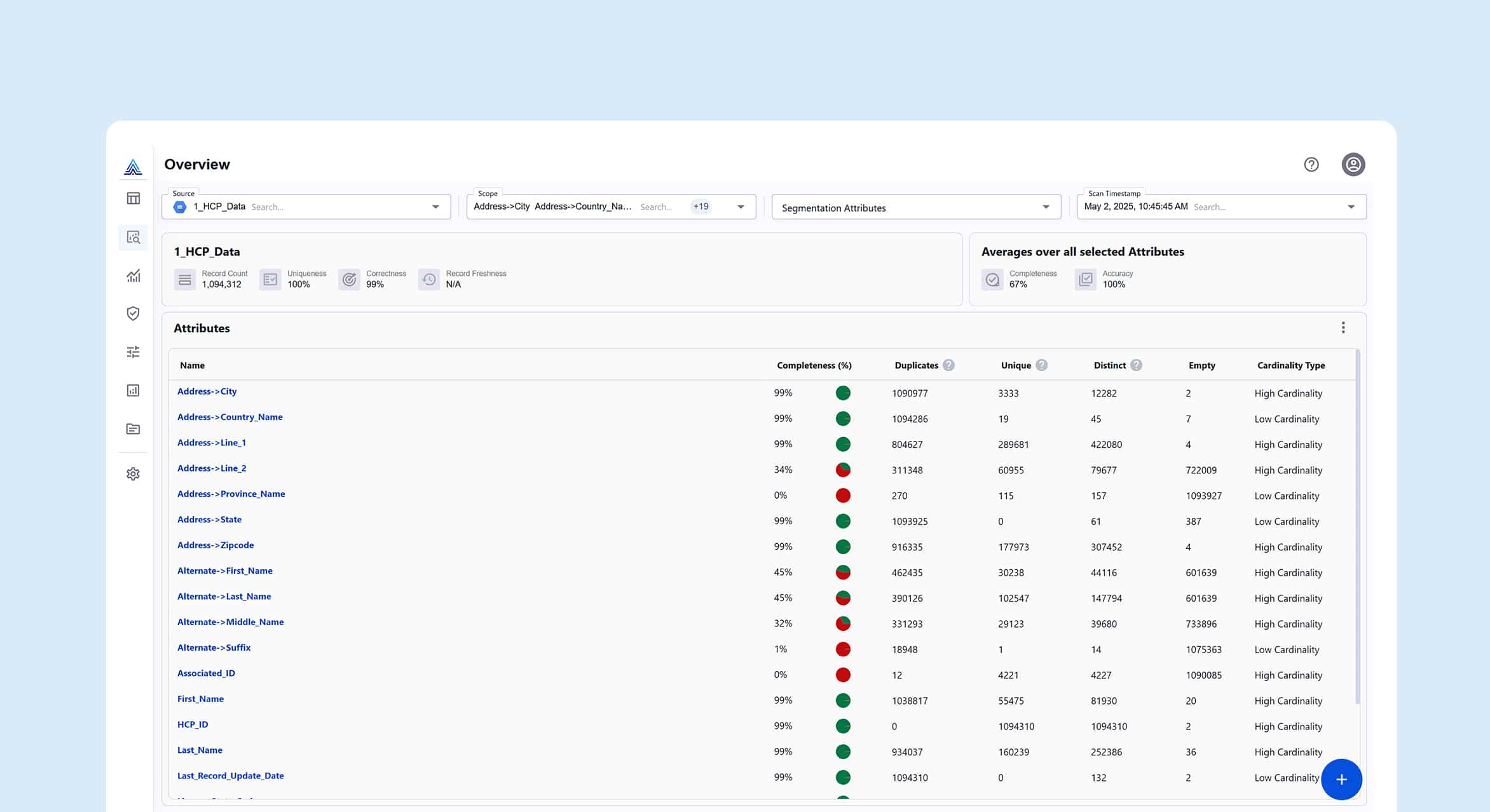

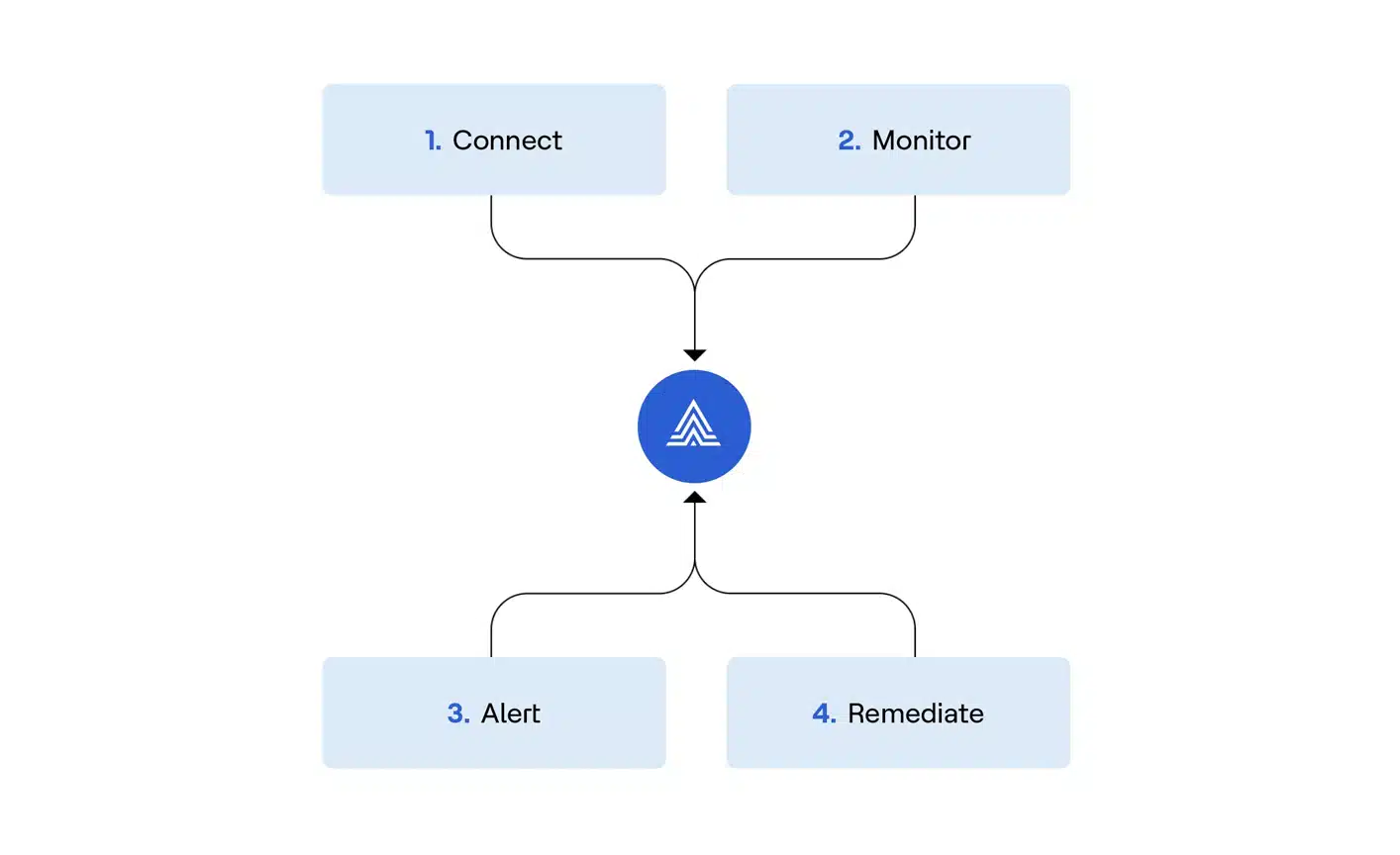

For example, with the Actian Data Intelligence Platform, all metadata is managed by a federated knowledge graph. The platform enables smart data usage through integrated metadata, governance, and AI automation. Whether a business user is searching for a data product or a data steward is managing lineage and access, metadata makes the data ecosystem more intelligent and easier to use.

4. Data Intelligence Is Catching On

I’ve seen a noticeable uptick in how often vendors talk about “data intelligence.” It’s increasingly discussed as part of modern platforms, and for good reason. Data intelligence brings together cataloging, governance, and collaboration in a way that’s advantageous for both IT and business teams.

While we’re seeing other vendors enter this space, I believe Actian’s competitive edge lies in our simplicity and scalability. We provide intuitive tools for data exploration, flexible catalog models, and ready-to-use data products backed by data contracts. These aren’t just features. They’re business enablers that allow users at all skill levels to quickly and easily access the data they need.

5. The Culture Around Data Access Is Changing

One of the most interesting shifts I’ve noticed is the tension, if not outright friction, between data democratization and data protection. Chief data officers and data stewards want to empower teams with self-service analytics, but they also need to ensure sensitive information is protected.

The new mindset isn’t “open all data to everyone” or “lock it all down” but instead a strategic approach that delivers smart access control. For example, a marketer doesn’t need access to customer phone numbers, while a sales rep might. Enabling granular control over data access based on roles and context, right down to the row and column level, is a top priority.
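The marketer and sales rep example can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not a description of any particular product: the role names, the `ROLE_COLUMNS` mapping, the `visible_rows` function, and the rule that sales reps see only their own region are all hypothetical.

```python
# Column-level policy: which fields each role may see (hypothetical roles).
ROLE_COLUMNS = {
    "marketer": {"customer_id", "region", "segment"},
    "sales_rep": {"customer_id", "region", "segment", "phone"},
}


def visible_rows(rows, role, user_region=None):
    """Return the rows and columns a given role is allowed to see.

    Unknown roles get nothing (deny by default).
    """
    allowed = ROLE_COLUMNS.get(role)
    if allowed is None:
        return []

    result = []
    for row in rows:
        # Row-level rule (an assumption for this example):
        # sales reps only see customers in their own region.
        if role == "sales_rep" and row.get("region") != user_region:
            continue
        # Column-level rule: drop every field the role is not cleared for.
        result.append({key: value for key, value in row.items() if key in allowed})
    return result


customers = [
    {"customer_id": 1, "region": "EMEA", "segment": "SMB", "phone": "555-0100"},
    {"customer_id": 2, "region": "APAC", "segment": "Enterprise", "phone": "555-0101"},
]

marketer_view = visible_rows(customers, "marketer")
sales_view = visible_rows(customers, "sales_rep", user_region="EMEA")
```

Here `marketer_view` contains both customers without the `phone` field, while `sales_view` contains only the EMEA customer, phone number included. In practice these rules would live in a governance layer or the database itself rather than in application code, but the logic is the same: decide access by role and context, per row and per column.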

Data Intelligence Is More Than a Trend

Some of the most meaningful insights I gain at events take place through unstructured, one-on-one interactions. Whether it’s chatting over dinner with customers or striking up a conversation with a stranger before a breakout session, these moments help us understand what really matters to businesses.

While AI may be the main topic right now, it’s clear that data intelligence will determine how well enterprises actually deliver on AI’s promise. That means prioritizing data quality, trust, observability, access, and governance, all built on a foundation of rich metadata. At the end of the day, building a smart, AI-ready enterprise starts with something deceptively simple—better data.

When I’m at events, I encourage attendees who visit the Actian booth to take a product tour. That’s because once data leaders see what trusted, intelligent data can do, it changes the way they think about data, use cases, and outcomes.
