Portable vector database for AI

Performance that holds up in production

QPS at 10 million vectors

Built for real-time AI applications that can't afford to wait.

Recall at scale

Accuracy holds steady as your dataset grows.

milliseconds

P99 latency, for consistent performance from prototype to production.

Deploy AI securely, anywhere

Learn how Actian VectorAI DB helps you build and run AI applications anywhere, without depending on the cloud. This portable, local-first vector database delivers fast, predictable retrieval while keeping your data and infrastructure fully under your control.

Cloud vector databases weren't built for your use case

Network latency breaks real-time applications

Cloud round trips add 200 to 400 ms to every query. You can't build applications with sub-100 ms latency when the database round trip alone exceeds that budget.

Third-party infrastructure blocks regulated deployments

HIPAA and GDPR require that your data stay under your control. Cloud services involve third-party processing that doesn't meet your compliance requirements.

Cloud-only architecture puts entire deployment scenarios out of reach

Your edge devices, offline environments, and embedded systems can't count on a reliable internet connection. Cloud databases leave entire categories of your AI applications unaddressed.

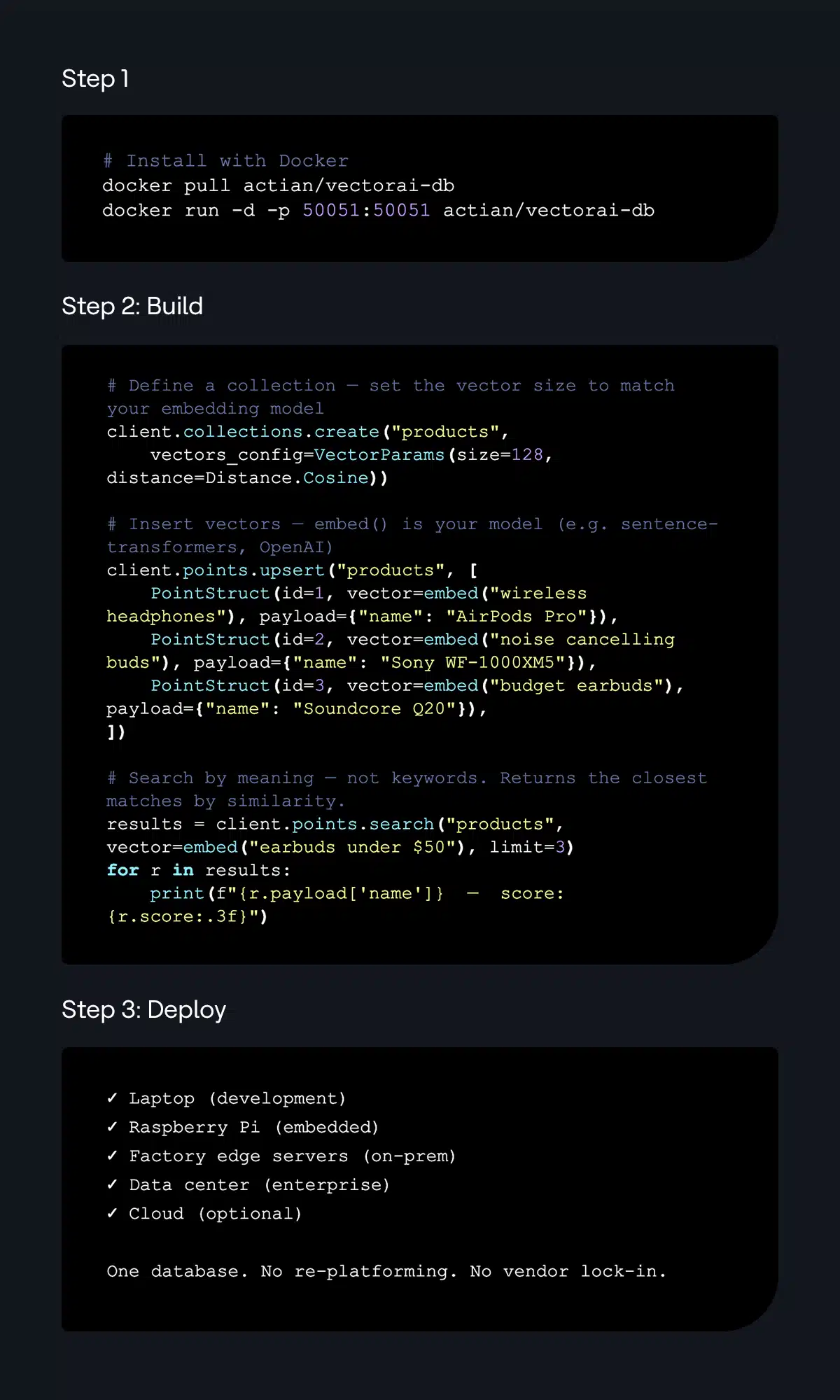

From install to up and running in minutes

Explore the resources and start building in the programming language of your choice.

Pricing that fits the way you build AI

Start free, test locally, and move from prototype to production without changing your architecture. VectorAI DB offers flexible packages for developers building RAG workflows, agents, and edge AI applications across local, on-premises, and cloud environments.

Built for edge developers

Actian VectorAI DB makes portable AI possible by delivering:

Frequently asked questions

Actian VectorAI DB is a portable, local-first vector database designed for AI systems that operate beyond the cloud. It lets developers run semantic and hybrid search close to their data, delivering predictable, low-latency retrieval across edge, on-premises, hybrid, and cloud environments.
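Hybrid search usually merges a semantic (vector-similarity) ranking with a keyword ranking. The minimal sketch below uses reciprocal rank fusion (RRF), one common way to combine such rankings. It is an illustrative assumption, not a description of how VectorAI DB fuses results; the function name `rrf_fuse`, the constant `k=60`, and the sample document IDs are all hypothetical.

```python
from collections import defaultdict


def rrf_fuse(rankings, k=60):
    """Fuse several ranked lists of document IDs with reciprocal rank fusion.

    Each document scores sum(1 / (k + rank)) over every list it appears in,
    with 1-based ranks. A higher fused score means a better final position.
    """
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Sort by fused score (descending); break ties by ID so output is deterministic.
    return sorted(scores, key=lambda d: (-scores[d], d))


# Hypothetical rankings for the same query.
semantic = ["doc_a", "doc_c", "doc_b"]  # ordered by vector similarity
keyword = ["doc_b", "doc_a", "doc_d"]   # ordered by a keyword score such as BM25
print(rrf_fuse([semantic, keyword]))    # ['doc_a', 'doc_b', 'doc_c', 'doc_d']
```

RRF only looks at ranks, so it needs no calibration between vector-similarity scores and keyword scores, which live on different scales. That is the main reason it is a frequent default for hybrid retrieval.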

Most vector databases are built for cloud-native deployments, whereas VectorAI DB is designed to run consistently across edge, on-premises, hybrid, and cloud environments. It provides portable, local-first retrieval with predictable performance, including up to 22x higher QPS at scale on identical self-hosted hardware.

VectorAI DB supports modern approximate nearest neighbor (ANN) indexing methods, including HNSW, enabling low-latency, high-accuracy search at scale.
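ANN indexes such as HNSW trade a little accuracy for speed. That accuracy is usually reported as recall@k: the fraction of the true k nearest neighbors that the approximate search actually returns. The pure-Python sketch below assumes cosine similarity as the metric. `exact_knn` and `recall_at_k` are hypothetical helper names, and the brute-force search only serves as ground truth. It is not VectorAI DB's HNSW implementation.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def exact_knn(query, vectors, k):
    """Brute-force k nearest neighbors by cosine similarity: the ground truth ANN approximates."""
    ranked = sorted(range(len(vectors)), key=lambda i: cosine(query, vectors[i]), reverse=True)
    return ranked[:k]


def recall_at_k(approx_ids, exact_ids):
    """Fraction of the true neighbors that the approximate search also returned."""
    return len(set(approx_ids) & set(exact_ids)) / len(exact_ids)


vectors = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.7, 0.7]]
query = [1.0, 0.05]
truth = exact_knn(query, vectors, k=2)
approx = [0, 3]  # hypothetical ANN result that missed one true neighbor
print(truth, recall_at_k(approx, truth))  # [0, 1] 0.5
```

Brute force costs one similarity computation per stored vector on every query. An ANN index avoids that linear scan, which is why recall@k is the number to watch as a dataset grows.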

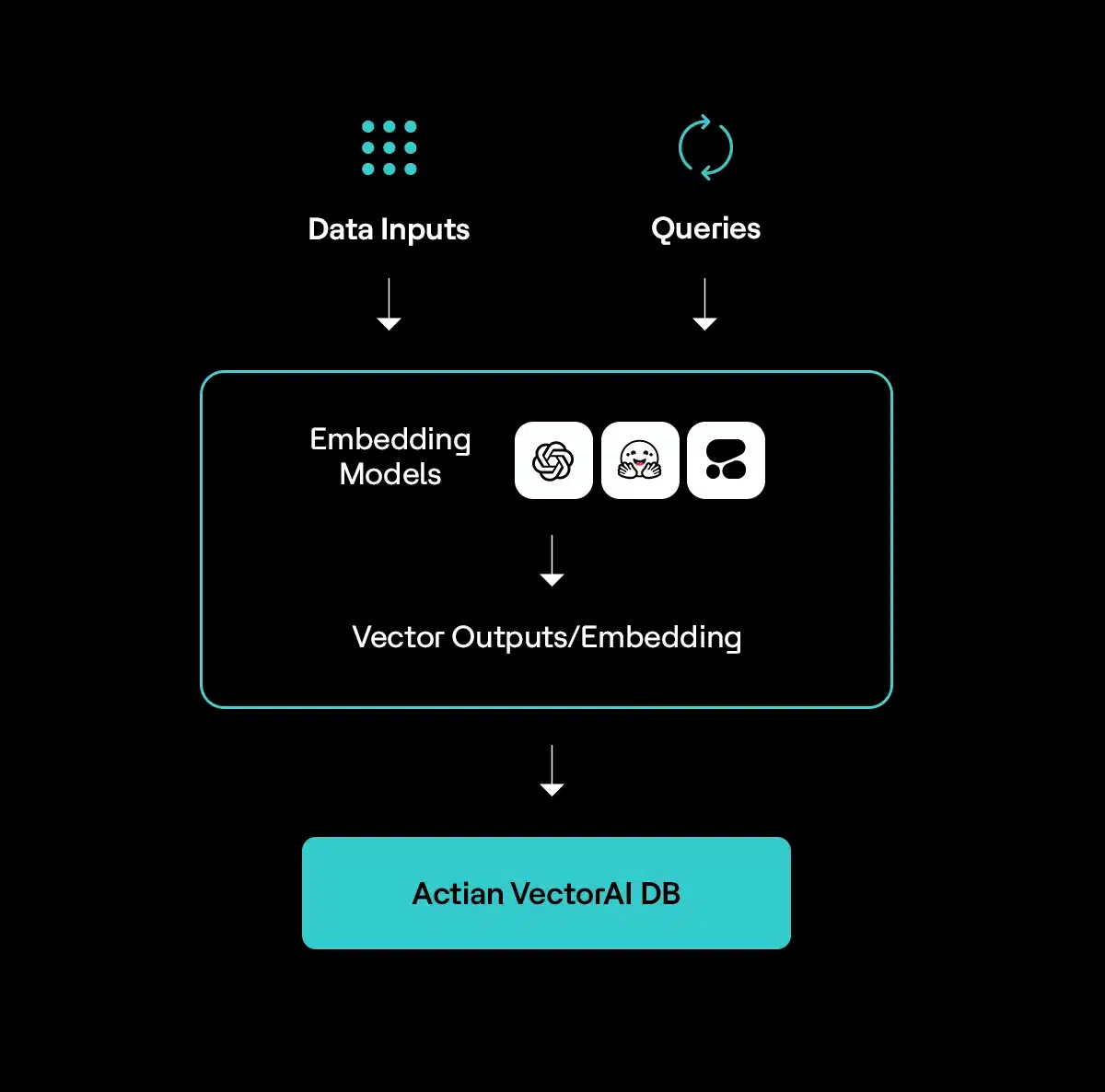

VectorAI DB is model-agnostic and works with embeddings generated by any provider or framework. This includes OpenAI, Anthropic, Cohere, open-source models such as those available on Hugging Face, and custom or fine-tuned models.

Yes. VectorAI DB can create and store vector embeddings from multimodal data sources such as text, images, audio, and video.

Not Sure Where to Start?

Talk with our team about how organizations are using VectorAI DB to build AI agents, reduce retrieval latency, keep sensitive data local, and deploy AI in environments where cloud databases don’t work. We can help you evaluate architectures, deployment models, and real-world AI use cases.

Book a Personalized Demo
