Introducing Actian VectorAI DB: AI where your data lives

Summary

- AI is moving from experimentation to production, which demands a more robust data infrastructure.

- Vector databases enable semantic search for AI, RAG, and agentic workflows.

- Centralized architectures break down in regulated, edge, and distributed environments.

- Actian VectorAI DB enables fast, portable retrieval in the cloud, on-premises, and at the edge.

- Future AI systems will run where the data lives, not on centralized platforms.

AI is entering a new phase. The era of prototypes is ending, and the era of production systems is beginning. Over the past two years, organizations have experimented with generative AI, built pilot projects, and explored what large language models can do. Now that attention is shifting to production, teams face a much harder question: what infrastructure does it take to make AI reliable at enterprise scale? Increasingly, the answer lies in the data layer.

Modern AI systems depend on fast, intelligent access to data. Semantic search, retrieval-augmented generation (RAG), and AI agents all rely on retrieving relevant information quickly and grounding their responses in enterprise knowledge. When retrieval works well, the system appears intelligent. When it does not, responses lose their grounding and become unreliable.

At the same time, the environments where AI systems run are becoming more distributed. Enterprise data rarely lives in a single place. It spans cloud platforms, operational systems, data centers, and edge environments, where data is generated close to machines and applications. Regulatory requirements, privacy constraints, and internal governance policies often dictate where that data must reside. In other words, the next generation of AI infrastructure will not be centralized. It will be distributed, regulated, and deployed wherever the data lives.

Today we are introducing Actian VectorAI DB, a vector database designed to support this new generation of AI systems by enabling fast semantic retrieval across cloud, on-premises, and edge environments. The launch coincides with the publication of my new O'Reilly report, "Vector Databases for Enterprise AI," which examines why vector retrieval is becoming a foundational capability for enterprise AI and how distributed architectures are reshaping the design of AI data platforms.

Summary

- AI systems increasingly depend on vector search to supply context for RAG workflows, AI assistants, and agent-based workflows.

- Many vector databases assume a centralized cloud architecture, which does not fit regulated or edge environments.

- Actian VectorAI DB delivers high-performance semantic retrieval with a lightweight design and a small memory footprint across edge, on-premises, hybrid, and cloud deployments.

- The goal is simple: bring AI closer to the data it depends on instead of forcing the data through centralized systems.

Why vector databases are becoming essential for enterprise AI

Since ChatGPT launched in late 2022, organizations have seen an unprecedented surge in experimentation with generative AI. But as new use cases emerge, so do new risks. High-profile security incidents have exposed the challenges of handling sensitive data in AI-driven environments, and organizations increasingly recognize that AI systems must retrieve and interact with corporate knowledge in a controlled, reliable way.

At the same time, regulatory pressure is intensifying. As of early 2025, 172 countries had data privacy laws, twelve of them new in 2023/24¹, and the EU AI Act signals a shift toward stricter oversight of how AI systems are developed and used. As AI moves from experimentation to production, companies need more control over how AI systems access, retrieve, and use data.

One of the most important shifts in modern AI architectures is how these systems obtain knowledge.

Machine learning models convert information such as documents, images, conversations, and other unstructured content into numeric vectors known as embeddings. Embeddings capture semantic meaning rather than literal structure. A vector database indexes those embeddings and lets systems search for similar vectors, so applications can retrieve information that matches the intent of a query rather than a keyword.
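
To make the idea of "searching for similar vectors" concrete, here is a minimal sketch in plain Python. The three-dimensional vectors, the document IDs, and the `top_k` helper are all made up for illustration; real embedding models produce hundreds or thousands of dimensions, and nothing here reflects how Actian VectorAI DB is implemented.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)

def top_k(query, index, k=2):
    """Return the k (doc_id, score) pairs most similar to the query, best first."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]

# Hypothetical embeddings: the first two documents are about refunds and returns,
# the third is about finance, so it points in a different direction.
INDEX = {
    "refund-policy": [0.9, 0.1, 0.0],
    "return-shipping": [0.8, 0.3, 0.1],
    "quarterly-earnings": [0.0, 0.2, 0.9],
}

# Assume this vector is the embedding of "how do I get my money back?"
QUERY = [0.9, 0.1, 0.05]

RESULTS = top_k(QUERY, INDEX, k=2)
for doc_id, score in RESULTS:
    print(f"{doc_id}: {score:.3f}")
```

The query shares no keywords with the document IDs, yet the two refund-related documents rank first because their vectors point in nearly the same direction as the query vector.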

Why centralized architectures fall short

Most vector databases assume a centralized cloud infrastructure. In practice, many enterprise AI systems run in environments where that assumption does not hold.

Manufacturing systems analyze sensor data directly on production lines. Healthcare systems handle confidential patient information in regulated environments. Financial institutions operate under strict data residency rules that limit where data can be moved. Government systems often run on disconnected or restricted networks.

In these cases, moving large volumes of data to a centralized infrastructure is often impractical or outright prohibited. AI systems therefore have to run close to the data they depend on.

Introducing Actian VectorAI DB

Actian VectorAI DB is purpose-built for environments where AI systems must run close to the data they depend on. It provides native vector storage and high-performance similarity search using techniques such as approximate nearest neighbor (ANN) indexing. Algorithms such as HNSW enable efficient retrieval across large collections of embeddings while balancing speed, accuracy, and resource usage. More importantly, Actian VectorAI DB is designed to run wherever AI applications need it. The system supports deployment on embedded systems, edge environments, on-premises infrastructure, hybrid architectures, and cloud platforms.
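
For readers who have not worked with ANN indexes, the sketch below shows the core trade-off in miniature. It builds a single-layer neighbor graph and walks it greedily toward the query, which is the basic idea HNSW extends with multiple layers and incremental inserts. This is a deliberately simplified stand-in, not HNSW itself and not Actian's implementation; the parameter names `m` and `ef` are borrowed from common HNSW usage, and the data is random.

```python
import heapq
import math
import random

def dist(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def build_graph(vectors, m=8):
    """Link each vector to its m nearest neighbours (brute force, for clarity only)."""
    graph = {}
    for i, v in enumerate(vectors):
        others = sorted((j for j in range(len(vectors)) if j != i),
                        key=lambda j: dist(v, vectors[j]))
        graph[i] = others[:m]
    # Make every link bidirectional so the graph is easier to traverse.
    for i in list(graph):
        for j in graph[i]:
            if i not in graph[j]:
                graph[j].append(i)
    return graph

def ann_search(query, vectors, graph, k=3, ef=16, entry=0):
    """Best-first graph walk that keeps the ef closest nodes seen so far."""
    visited = {entry}
    d0 = dist(query, vectors[entry])
    candidates = [(d0, entry)]   # min-heap: closest unexplored node first
    best = [(-d0, entry)]        # max-heap via negation: current best set
    while candidates:
        d, node = heapq.heappop(candidates)
        if len(best) >= ef and d > -best[0][0]:
            break                # nothing left that can improve the best set
        for nb in graph[node]:
            if nb in visited:
                continue
            visited.add(nb)
            dn = dist(query, vectors[nb])
            if len(best) < ef or dn < -best[0][0]:
                heapq.heappush(candidates, (dn, nb))
                heapq.heappush(best, (-dn, nb))
                if len(best) > ef:
                    heapq.heappop(best)
    ranked = sorted((-neg_d, n) for neg_d, n in best)
    return ranked[:k], len(visited)

def exact_search(query, vectors, k=3):
    """Ground truth: compare the query against every vector."""
    return sorted((dist(query, v), i) for i, v in enumerate(vectors))[:k]

random.seed(7)
VECTORS = [[random.random() for _ in range(8)] for _ in range(300)]
GRAPH = build_graph(VECTORS, m=8)
QUERY = [random.random() for _ in range(8)]

APPROX, VISITED = ann_search(QUERY, VECTORS, GRAPH, k=3, ef=16)
EXACT = exact_search(QUERY, VECTORS, k=3)
RECALL = len({i for _, i in APPROX} & {i for _, i in EXACT}) / 3
print(f"distance computations: {VISITED} of {len(VECTORS)}, recall@3: {RECALL:.2f}")
```

Raising `ef` makes the walk examine more nodes, which tends to improve recall at the cost of more distance computations. That is the speed, accuracy, and resource balance described above.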

Developers can build AI applications once and deploy them across environments using the same architecture and the same APIs. This removes the need to redesign the retrieval infrastructure as systems move from prototype to production. The result is a portable vector database that lets organizations bring AI to their data instead of moving the data to centralized platforms.

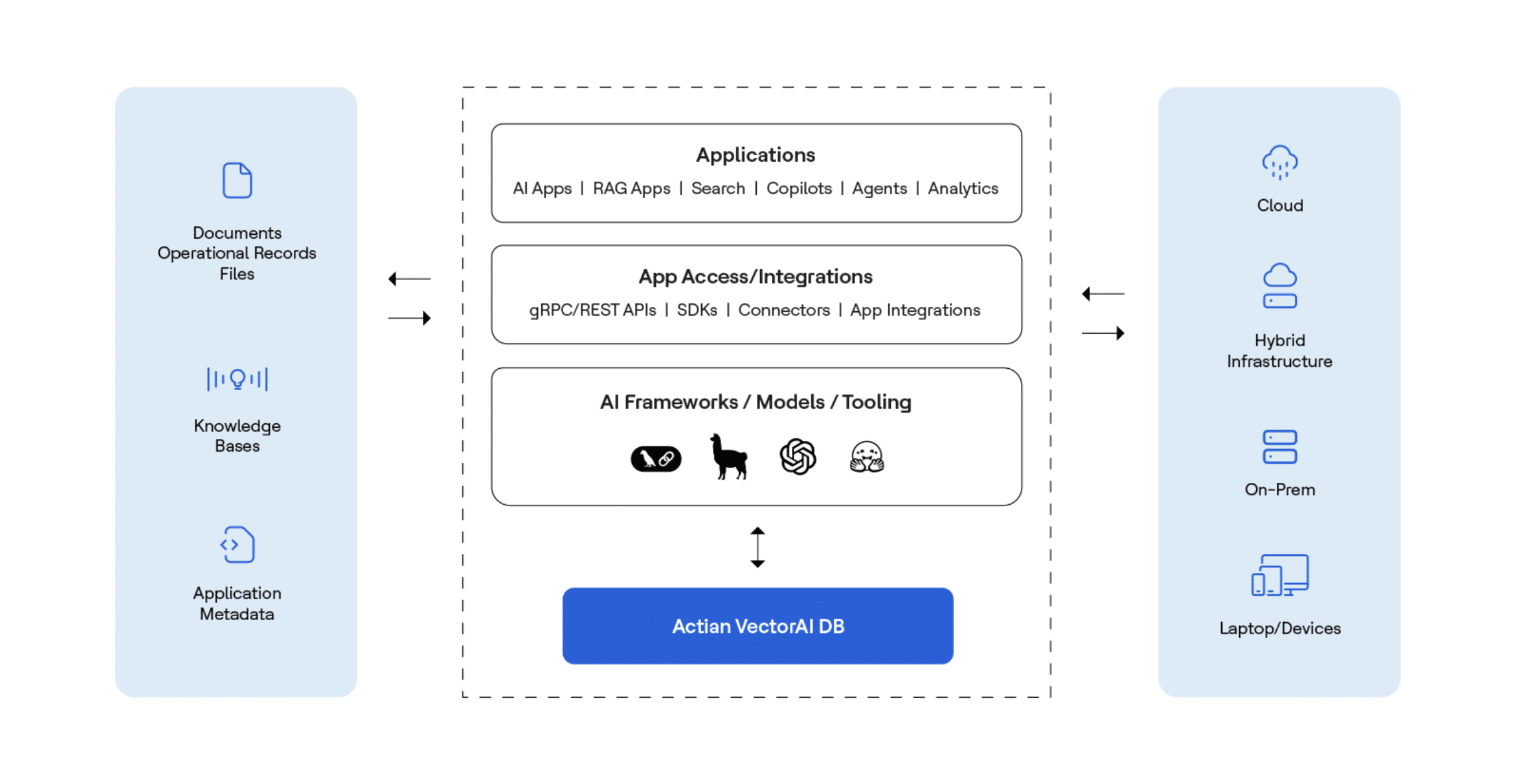

Para comprender cómo encaja la recuperación de vectores en los sistemas modernos de IA, resulta útil analizar la arquitectura de una aplicación típica de IA. Las fuentes de datos empresariales, como documentos, registros operativos, bases de conocimiento y metadatos de aplicaciones, se transforman en representaciones vectoriales y se indexan para la búsqueda por similitud. A continuación, los marcos y modelos de IA interactúan con esta capa de recuperación a través de API, conectores e integraciones de aplicaciones, lo que permite que aplicaciones como los motores de búsqueda, los sistemas RAG, los copilotos y los agentes de IA recuperen contexto relevante en tiempo real.

El siguiente diagrama ilustra cómo Actian VectorAI DB se sitúa en el centro de esta arquitectura, lo que permite la recuperación semántica y, al mismo tiempo, facilita la implementación en la nube, en infraestructuras híbridas, en entornos locales y en dispositivos periféricos.
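
The flow described above (sources become embeddings, embeddings are indexed, and applications retrieve context through an API) can be sketched end to end in a few lines. Everything in this example is hypothetical: `embed` is a toy word-count stand-in that only matches shared words and does not capture meaning the way a real embedding model does, and `ToyVectorIndex` is an in-memory placeholder, not the Actian VectorAI DB API.

```python
import math
import re
from collections import Counter

def embed(text):
    """Toy stand-in for an embedding model: normalised word counts as a sparse vector."""
    counts = Counter(re.findall(r"[a-z0-9]+", text.lower()))
    norm = math.sqrt(sum(c * c for c in counts.values()))
    return {word: c / norm for word, c in counts.items()} if norm else {}

def similarity(a, b):
    """Dot product of two normalised sparse vectors, i.e. their cosine similarity."""
    return sum(weight * b[word] for word, weight in a.items() if word in b)

class ToyVectorIndex:
    """In-memory placeholder for the retrieval layer (hypothetical, exact search)."""

    def __init__(self):
        self._rows = []

    def add(self, doc_id, text):
        self._rows.append((doc_id, text, embed(text)))

    def search(self, query, k=2):
        q = embed(query)
        scored = [(similarity(q, vec), doc_id, text) for doc_id, text, vec in self._rows]
        scored.sort(reverse=True)
        return scored[:k]

def build_prompt(question, hits):
    """Assemble the grounded prompt a RAG application would send to a language model."""
    context = "\n".join(f"[{doc_id}] {text}" for _, doc_id, text in hits)
    return (f"Answer using only the context below.\n\n"
            f"Context:\n{context}\n\nQuestion: {question}")

# Step 1: ingest hypothetical enterprise sources into the index.
INDEX = ToyVectorIndex()
INDEX.add("hr-001", "Employees accrue vacation days monthly and may carry over five days.")
INDEX.add("hr-002", "Vacation requests need manager approval two weeks ahead.")
INDEX.add("ops-014", "The conveyor sensor reports vibration and temperature every second.")
INDEX.add("fin-203", "Customer account data must remain in the region where it was collected.")

# Step 2: retrieve context for a question, then build the prompt.
QUESTION = "How many vacation days can employees carry over?"
HITS = INDEX.search(QUESTION, k=2)
PROMPT = build_prompt(QUESTION, HITS)
print(PROMPT)
```

In a real deployment the `embed` function would call an embedding model and the index would be a vector database, but the application-side shape stays the same: ingest, search, and assemble context.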

What Actian VectorAI DB delivers

Fast semantic retrieval opens the door to a wide range of AI applications. Enterprise search systems can pull information from large data stores based on meaning rather than keywords. RAG workflows ground large language models in a company's own data. AI assistants and agents can retrieve the contextual information they need to support reasoning and decision-making.

Vector similarity can also support anomaly detection, recommendation systems, and multimodal search across text, images, and other unstructured data types. As these applications become more autonomous, the ability to deploy vector infrastructure across distributed environments matters more and more.
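
As one illustration of similarity beyond search, the sketch below scores a new vector by its average distance to the nearest known-normal vectors; a large score suggests an anomaly. The two-dimensional readings, the `knn_anomaly_score` helper, and the threshold of 1.0 are assumptions chosen for the example, not values from any real system.

```python
import math

def dist(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def knn_anomaly_score(point, reference, k=3):
    """Mean distance from the point to its k nearest reference vectors."""
    nearest = sorted(dist(point, r) for r in reference)[:k]
    return sum(nearest) / len(nearest)

# Hypothetical embeddings of sensor windows recorded during normal operation.
NORMAL = [[1.0, 1.0], [1.1, 0.9], [0.9, 1.1], [1.0, 1.2], [1.2, 1.0]]

THRESHOLD = 1.0  # assumed cut-off; in practice it would be tuned on held-out data

TYPICAL_SCORE = knn_anomaly_score([1.05, 1.0], NORMAL)
OUTLIER_SCORE = knn_anomaly_score([4.0, 4.0], NORMAL)

for name, score in [("typical", TYPICAL_SCORE), ("outlier", OUTLIER_SCORE)]:
    label = "anomaly" if score > THRESHOLD else "normal"
    print(f"{name}: score={score:.2f} -> {label}")
```

The same nearest-neighbor query that powers search does the work here, which is why this kind of check can run next to the data on an edge device.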

The future of AI infrastructure

For many years, the dominant architectural trend in data platforms was centralization. Data was collected and consolidated into large platforms where analytics and machine learning could run. AI is now pushing the industry toward a different model.

Intelligence increasingly sits where data is generated, whether in enterprise systems, edge environments, or distributed applications. Vector databases are becoming one of the core building blocks of that architecture because they let systems retrieve information based on meaning rather than structure.

Actian VectorAI DB is designed for that future.

It offers a portable vector database that enables semantic retrieval wherever AI applications run, while giving organizations control over where their data lives and how it is processed. Because the future of AI will not unfold in a single place. It will unfold wherever the data lives. And the infrastructure behind it must be designed with that reality in mind.

If you are exploring how vector retrieval can support your AI systems, we invite you to start working with Actian VectorAI DB today. Try it for free and see how high-performance semantic retrieval can power your own AI applications.

You can also join the conversation with other developers and practitioners in our Actian Developer community on Discord, where teams share ideas, ask questions, and explore new AI use cases together.


¹Source: Graham Greenleaf, "Global Data Privacy Laws 2025: 172 Countries, Twelve New in 2023/24," Macquarie Law School, April 2, 2025.